As a technologist, I find a certain amount of joy comes from learning about what’s new in the market. This could be as simple as a new code or hardware release for a solution that has been around for a while, driving me to absorb the new features. Or, on the other end of the spectrum, it could be an entirely new way of thinking about how to tackle a challenge within the data center by learning from startups who are looking to shake things up with an interesting idea. A common pain point is figuring out exactly what it is that the young company does, as often the technical bits get blended in with a bunch of marketing jargon and buzzwords (see my buzzword bingo parody video) that dilute the transfer of information.

In this series, I’m going to get a little nerdy about a relatively new term that is hitting the market, Converged Data Management, and dive into the five important properties that build into this solution. The goal is to provide a clear view into each feature with real meat on the bone, along with why and how the solution is important in today’s modern data center. To begin, I’ll showcase everything that makes up Converged Data Management:

The first property of Converged Data Management that I’m going to cover is Software Converged, which focuses on building out a solution using a single software fabric for all data management functions, including storage. It stands in contrast with historical methods in which data protection software was abstracted from the storage platform and required a complex set of components to deliver end-to-end data protection. If you’ve been around the enterprise data center for a while, you’ll likely recognize that this swing between collapsed and decoupled infrastructure is a cycle that presents itself every few decades as both architecture and technology race forward.

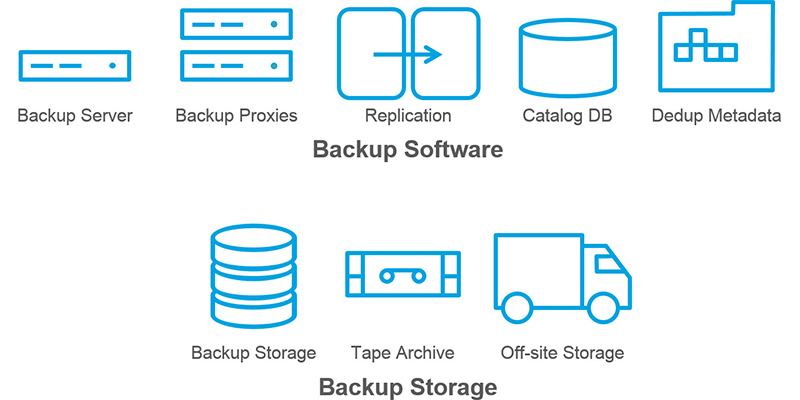

Below is a sample of the status quo architecture, which showcases the common bits required to construct an enterprise data protection architecture. It includes proxy servers, master servers, and search servers to ingest data, along with any number of disk-based backup arrays, tape libraries, and off-site archives. The number of backup servers is not represented here, as it is highly dependent on a number of factors, such as the number of workloads being protected and which SLAs have been decreed by the business and accepted (if grudgingly) by IT.

This design has a number of architectural challenges that must be tackled by the folks who work within the IT organization. Primarily, any design of significant scale requires a fair bit of guesswork, such as determining the number of proxy servers required to ingest all of the data being created by the server workloads and correctly associating workloads with proxy servers. There are calculators and spreadsheets to make this guesswork a bit more accurate, but nothing definitive and concrete. A secondary concern is removing single points of failure by bubble-wrapping each piece of the stack with failover clustering, load balancers, and other techniques. If you’re wincing, you’ve been through this pain before – making a fragile application highly available is a significant amount of effort in the majority of instances.
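To give a feel for the kind of arithmetic those calculators and spreadsheets perform, here is a minimal back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the function name `estimate_proxy_count`, the sample throughput figure, and the 25% headroom are hypothetical and do not come from any vendor's sizing tool.

```python
import math


def estimate_proxy_count(daily_change_tb, backup_window_hours,
                         proxy_throughput_mb_s, headroom=0.25):
    """Rough estimate of how many proxy servers are needed to ingest
    one day's changed data within the backup window.

    All inputs are illustrative assumptions:
      daily_change_tb       -- data to ingest per day, in decimal TB
      backup_window_hours   -- length of the nightly backup window
      proxy_throughput_mb_s -- sustained ingest rate of one proxy, in MB/s
      headroom              -- extra capacity fraction for growth and retries
    """
    if backup_window_hours <= 0 or proxy_throughput_mb_s <= 0:
        raise ValueError("window and throughput must be positive")

    data_mb = daily_change_tb * 1_000_000  # decimal TB -> MB
    # How much a single proxy can move during the whole window.
    per_proxy_mb = proxy_throughput_mb_s * backup_window_hours * 3600
    raw_count = data_mb / per_proxy_mb
    # Pad for headroom, then round up: you cannot deploy a fraction of a proxy.
    return math.ceil(raw_count * (1 + headroom))


if __name__ == "__main__":
    # 40 TB of daily change, an 8 hour window, 200 MB/s per proxy.
    print(estimate_proxy_count(40, 8, 200))  # prints 9
```

Even this toy version shows why the result is guesswork: the answer swings with the assumed throughput and headroom, and neither is known with any certainty until the environment is already built.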

Beyond these design concerns, however, is the desire to trim down the Recovery Time Objective (RTO) fat. Although the legacy software stack is aware of data held within a tape backup via its metadata catalog, there’s no way to access the data on a tape without going through a retrieval process. As Mr. Spock would say, “That is highly illogical, Captain.” Experience has shown me just how painful this process is when searching for data requested via a helpdesk ticket – “it was on some server at some time and I don’t know much else” – or when trying to comply with audit requests.

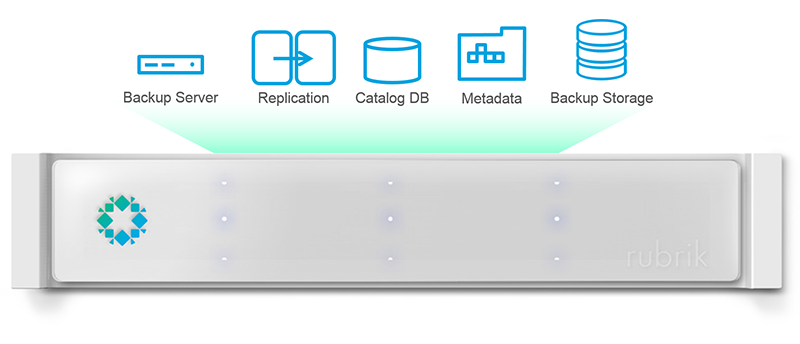

Embracing Converged Data Management abstracts the vast majority of this architecture away. There are no backup software, proxy servers, search servers, or tape archives to deal with because the reason for their existence has been made largely irrelevant. As Don Draper said, “If you don’t like what is being said, then change the conversation.” The same goes for architecture, and in this case the entire model is drastically simplified by leveraging advancements in technology and global resources that are easy to consume. All of the intelligence, scalability, and efficiencies are provided by blending the roles of backup software and backup storage into a single platform – thus, software convergence is achieved and additional features are delivered through iterations on the code. As an added bonus, there’s also no need for proprietary hardware to support the platform; commodity x86 tin shines as resilient, high-performance, and scalable through the work done within the software stack.

Just seeing the complexity decrease is enough to tickle my noodle. It clears the deck for administrators and engineers to focus on building value into their organization. How about spending time developing SLA policies that meet customer or business unit requirements, or integrating Converged Data Management into an orchestrated workflow for a service catalog? That sounds way more valuable to me than building yet another proxy server to ingest data from a pod of compute nodes, and it also alludes to the feature I’ll post details on next – Infinite Scalability. Until then, I would suggest reading Arvind Jain’s post describing all of the engineering magic in Rubrik, or going deeper into our scale-out file system in Adam Gee’s post on Atlas. And if you happen to be attending VMworld 2015, catch the upcoming VMworld session STO6287 entitled Instant Application Recovery and DevOps Infrastructure for VMware Environments – A Technical Deep Dive featuring Rubrik’s Arvind Nithrakashyap, CTO, and myself at both the US and EMEA conferences.