Customers using Microsoft SQL Server tend to grow horizontally in terms of the number of databases. For recent versions of SQL Server, the max number of databases you can have on a single instance is 32,767 and it is common to see customers push that limit. At Rubrik, we have run into several customers who approach 10,000 databases on a single host. As SQL Server accelerates the push into the enterprise segment, one needs to address the problem of managing and protecting these servers at scale. The biggest problem at this scale is protection. Are we able to perform daily backups of 10,000 databases on a single Windows server?

We put 4,000 small SQL Server databases on a single host and decided to back them up one by one using the standard Microsoft APIs and SQL Server writer to see where there are opportunities to optimize. It took approximately one month to finish the backup process. Following this, we implemented batching where we backed up a batch of databases in one shot. We varied the batch size all the way to 200 but were not able to finish the backup in less than a day.

Analysis

We decided to dig deeper into the issues and discovered three areas where we could optimize:

The first task in backing up a large number of databases is to tabulate the metadata of all the databases in the system. If there are many databases on the host, the current method takes more time presumably because it gathers metadata about all the databases on the host, even those that we do not take a backup of. In the experiment above, we noticed that this task could take more than an hour.

The amount of time it takes to tabulate the metadata of all the databases could be ameliorated if we could back up a large number of databases in one shot and not gather the metadata multiple times. However, we could not increase the batch size beyond 200 databases. For a 10,000 database backup, we would need to gather the metadata 50 times, each of which would take an hour to complete. This would negate the goal of taking daily backups.

Finally, any mechanism that copies data from a Windows host to a backup target has to scale with a large number of files. A host with 10,000 databases is going to have at least 20,000 files and in such a situation, the copy mechanism should scale with a large number of files on a host.

Solution

How did we tackle the challenge above? We introduced two innovations in our SQL Server backup solution.

Scalable Snapshot Service: We came up with a new database snapshot mechanism that gathers metadata from and freezes only the databases to be backed up, takes a snapshot of the volumes the databases are resident in, thaws these databases, and performs post-snapshot accounting. This mechanism is not only very fast but also optimizes the number of threads and the amount of memory used and allows us to use a large batch size of 400.

Parallel Copy Writer: As our analysis indicated that there was significant overhead associated with each individual file fetch per database. So, we came up with a new parallel copywriter to amortize the overhead with a single group fetch function that can fetch a large number of files with a single RPC. This allows for a more efficient mechanism to transfer files from the Windows host to a backup target and maximize bandwidth utilization for a large number of small files scenarios.

Results

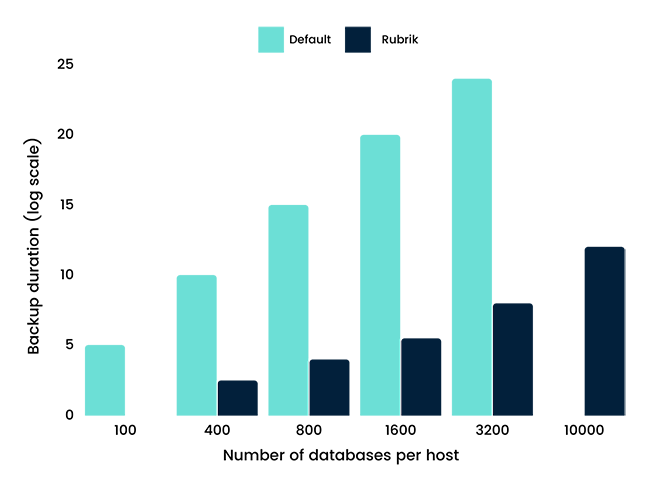

To demonstrate the effectiveness of our solution, we measured the time to backup 100 to 10,000 test databases each averaging less than 1 GB in size on a 4-core 128 GB memory Windows host using both the default Microsoft infrastructure as well as the new Rubrik solution. The results were overwhelmingly positive and the Rubrik solution was about a couple of orders of magnitude faster than the default infrastructure as we approached 10,000 databases on a single host. Last but not the least, it took us less than 6 hours to backup 10,000 databases using the Rubrik solution.

As the leader in reliable and scalable database backup, Rubrik continues to enhance platform security to keep data protected so you can successfully recover from any catastrophe from logical errors to something as insidious as ransomware attacks. We are always striving to improve our scale, protecting a larger number of databases with a smaller cluster size. In subsequent articles, we hope to show you more of our database backup prowess.