Making any sufficiently complex system look and feel simple is a tall order. But that’s precisely what Cerebro does for Rubrik Cloud Data Management! As the “brains” of the stack, Cerebro acts as the autonomous conductor standing on a podium before thousands of critical systems, all eager to be protected or restored as part of the data lifecycle management symphony. Founding Engineer Fabiano Botelho introduced Cerebro in a blog post over two years ago. The time is nigh to dig deeper.

Cerebro frees workload data from the storage tier by unlocking mobility beyond the data center, into the cloud and between different clouds. It hosts many critical functions of the Rubrik CDM stack. Two of those functions, the Distributed Task Framework and the Blob Engine, work together to ensure Rubrik delivers data that is immediately accessible and recoverable.

Distributed Task Framework

The Distributed Task Framework is the engine responsible for globally assigning and executing tasks across a cluster in a fault-tolerant and efficient manner. It balances load and optimizes resource utilization across the cluster in a declarative manner: the desired outcome is specified, and the framework decides how to reach it.

The framework does this through two mechanisms:

- Task Scheduling

- Task Maintenance

Task Scheduling ensures that all tasks are evenly distributed across the cluster, while Task Maintenance enforces the activities to uphold the assigned Service Level Agreement (SLA) policies on a daily and long-term basis. Once an SLA policy is set, Task Maintenance optimizes underlying processes to meet these set goals for data retention, replication, and archival.
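To make the idea of even distribution concrete, here is a minimal sketch of one common load-balancing technique: greedy least-loaded assignment. It is not Rubrik's actual scheduling algorithm, which this post does not describe. The function name `assign_tasks`, the task costs, and the node names are all hypothetical.

```python
import heapq


def assign_tasks(tasks, nodes):
    """Greedy least-loaded assignment (illustrative only, not Rubrik's algorithm).

    tasks: dict mapping a task name to an estimated cost (hypothetical units).
    nodes: list of node names in the cluster.
    Returns a dict mapping each node to the list of tasks assigned to it.
    """
    if not nodes:
        raise ValueError("at least one node is required")

    # Min-heap of (current_load, node_name); the least-loaded node is always on top.
    heap = [(0, node) for node in nodes]
    heapq.heapify(heap)
    assignment = {node: [] for node in nodes}

    # Place the most expensive tasks first, which tends to give a tighter balance.
    for name, cost in sorted(tasks.items(), key=lambda kv: (-kv[1], kv[0])):
        load, node = heapq.heappop(heap)
        assignment[node].append(name)
        heapq.heappush(heap, (load + cost, node))

    return assignment


if __name__ == "__main__":
    plan = assign_tasks({"a": 4, "b": 3, "c": 2, "d": 1}, ["n1", "n2"])
    print(plan)
```

In this example both nodes end up with a total cost of 5. A production scheduler would also have to account for node failures, data locality, and task priorities, none of which are modeled here.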

Blob Engine (Data Lifecycle Management)

Data lifecycle refers to the events that data goes through during its lifetime. A backup is first ingested into Rubrik after the object to be backed up is assigned an SLA, or after a user or an API call triggers an on-demand snapshot.

An SLA controls four key functions of the CDM platform:

- Backup retention

- Backup frequency

- Archival retention

- Replication retention
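The four settings above can be pictured as a single declarative policy object. The sketch below is an illustration only: the class `SlaPolicy`, its field names, and the example values are assumptions and do not reflect Rubrik's API or data model.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class SlaPolicy:
    """Hypothetical model of an SLA policy; field names are illustrative."""

    name: str
    backup_frequency: timedelta       # how often a snapshot is taken
    backup_retention: timedelta       # how long snapshots are kept locally
    archival_retention: timedelta     # how long snapshots are kept in the archive
    replication_retention: timedelta  # how long replicas are kept on the target

    def __post_init__(self):
        if self.backup_frequency <= timedelta(0):
            raise ValueError("backup_frequency must be positive")

    def snapshots_retained(self) -> int:
        """Approximate number of local snapshots held at steady state."""
        return self.backup_retention // self.backup_frequency


if __name__ == "__main__":
    gold = SlaPolicy(
        name="gold",
        backup_frequency=timedelta(hours=4),
        backup_retention=timedelta(days=30),
        archival_retention=timedelta(days=365),
        replication_retention=timedelta(days=14),
    )
    print(gold.snapshots_retained())
```

With a snapshot every 4 hours kept for 30 days, the example policy holds roughly 180 local snapshots at steady state.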

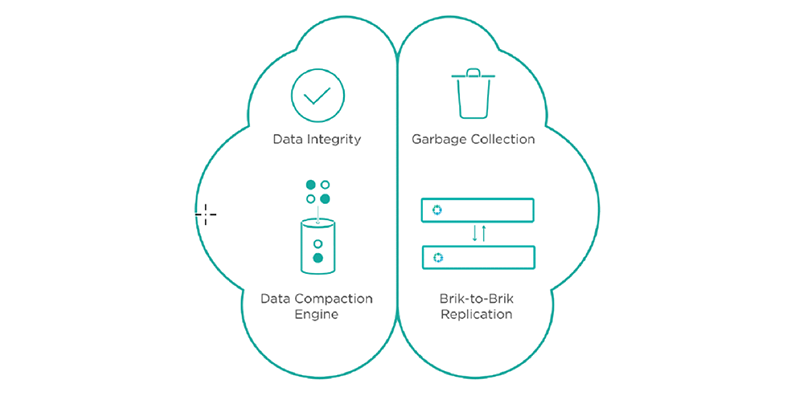

The Blob Engine is at the heart of Rubrik’s data lifecycle management, from initial ingest to expiration, globally and regardless of location. Throughout this process, an SLA verification job runs to verify SLA compliance. Once the retention period for a snapshot has been met, the snapshot data is marked as expired and becomes available for garbage collection. The entire process is seamless and provides a mechanism of trust and compliance. The Blob Engine ensures high efficiency, guaranteeing that data is instantly accessible and recoverable at all times while also enabling the orchestration of data to any destination. It maintains the content in a generic form (independent of the data source) until deletion.
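The expiration step described above amounts to comparing each snapshot's age against the retention period. The following is a minimal sketch under that assumption; the function `expired_snapshots` and the snapshot identifiers are hypothetical, and the real Blob Engine logic is not documented in this post.

```python
from datetime import datetime, timedelta, timezone


def expired_snapshots(snapshots, retention, now):
    """Return ids of snapshots whose age has reached the retention period.

    snapshots: dict mapping a snapshot id to the time it was taken.
    retention: timedelta taken from the SLA (hypothetical representation).
    now: the current time, passed in so the check is easy to test.
    """
    return [
        snapshot_id
        for snapshot_id, taken_at in snapshots.items()
        if now - taken_at >= retention
    ]


if __name__ == "__main__":
    now = datetime(2024, 1, 31, tzinfo=timezone.utc)
    snaps = {
        "s1": datetime(2024, 1, 1, tzinfo=timezone.utc),   # 30 days old
        "s2": datetime(2024, 1, 20, tzinfo=timezone.utc),  # 11 days old
    }
    print(expired_snapshots(snaps, timedelta(days=14), now))
```

With a 14-day retention period, only `s1` is reported as expired. In this sketch the result is just a list of candidates; actually reclaiming the space would be left to a separate garbage collection step.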

Simply put, all data is sent to the Blob Engine, which determines how to manage it efficiently by creating a data control plane. The Blob Engine provides core data management services, including immutability, data reduction, retention, replication, and archival. To meet today’s instant access requirements, the Blob Engine has been designed to deliver instant recovery. The system actively seeks to minimize fragmentation within the latest snapshot, based on quality of service prioritization (SLAs), to achieve near-zero recovery times.

Fin

Cerebro solves many data lifecycle challenges by efficiently ingesting data at the cluster level, storing data compactly while keeping it readily accessible for instant recovery, and ensuring data integrity at all times. This functionality is what allows Rubrik to repurpose backup data for other use cases, such as application development and testing.

To learn more, please download the Intelligent Data Protection with Rubrik technical white paper.