Fabiano Botelho, father of two and star soccer player, explains how Cerebro was designed. Previously, Fabiano was the tech lead of Data Domain’s Garbage Collection team.

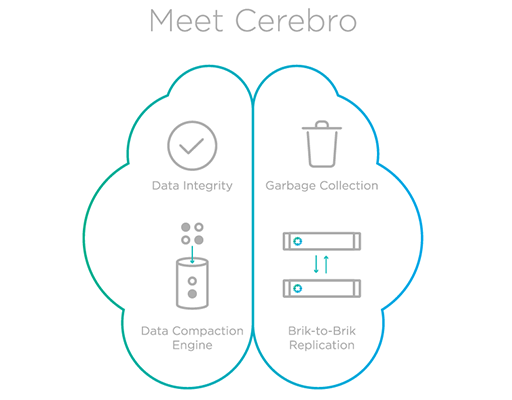

Rubrik is a scale-out data management platform that enables users to protect their primary infrastructure. Cerebro is the “brains” of the system: it coordinates the movement of customer data from initial ingest through propagation to other data locations, such as cloud storage and remote clusters (for replication). It is also where the data compaction engine (deduplication, compression) sits. In this post, we’ll discuss how Cerebro efficiently stores data with global deduplication and compression while making Instant Recovery & Mount possible.

Cerebro ties our API integration layer, which has adapters to extract data from various data sources (e.g., VMware, Microsoft, Oracle), to our different storage layers (Atlas and cloud providers like Amazon and Google). It achieves this by leveraging a distributed task framework and a distributed metadata system. See AJ’s post on the key components of our system.

Cerebro solves many challenges while managing the data lifecycle, such as efficiently ingesting data at the cluster level, storing data compactly while keeping it readily accessible for instant recovery, and ensuring data integrity at all times. Solving these challenges is what allows us to repurpose backup data for other use cases such as application development and testing.

Store Less, Read Fast

Deduplication tends to fragment the data layout, which makes an optimal layout hard to find. That’s why storing versioned data compactly while keeping it readily accessible is a challenge. To solve this, we use similarity hashing to route incoming data to where similar data already lives, and then apply deduplication locally.
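To make the routing idea concrete, here is a minimal Python sketch. It is not Cerebro's actual algorithm: the fixed-size chunking, the min-hash style signature, and the names `similarity_hash`, `route`, and `Node` are all assumptions chosen for illustration. The point it shows is that data sharing many chunks tends to produce the same signature, lands on the same node, and can then be deduplicated against that node's local index only.

```python
import hashlib

CHUNK_SIZE = 4  # tiny fixed-size chunks, purely for illustration


def chunks(data: bytes, size: int = CHUNK_SIZE) -> list[bytes]:
    """Split data into fixed-size chunks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def fingerprint(chunk: bytes) -> str:
    """Content fingerprint of a single chunk."""
    return hashlib.sha256(chunk).hexdigest()


def similarity_hash(data: bytes) -> str:
    """Hypothetical min-hash style signature: the smallest chunk fingerprint.

    Two inputs that share most of their chunks are likely to share the
    minimum fingerprint, and therefore the same signature.
    """
    return min((fingerprint(c) for c in chunks(data)), default=fingerprint(b""))


class Node:
    """A storage node with a purely local deduplication index."""

    def __init__(self) -> None:
        self.store: dict[str, bytes] = {}

    def write(self, data: bytes) -> int:
        """Store only chunks this node has not seen; return how many were new."""
        new_chunks = 0
        for c in chunks(data):
            fp = fingerprint(c)
            if fp not in self.store:
                self.store[fp] = c
                new_chunks += 1
        return new_chunks


def route(data: bytes, nodes: list[Node]) -> Node:
    """Pick the node that owns this data's similarity signature."""
    return nodes[int(similarity_hash(data), 16) % len(nodes)]
```

In this sketch, two inputs made of the same chunks always route to the same node, so the second write stores nothing new. No node ever consults another node's index, which is what keeps the deduplication step local.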

Rubrik employs an incremental-forever strategy when taking backups: only one full snapshot is taken initially, and thereafter an ongoing sequence of incremental backups is taken. This approach significantly shortens the backup window and reduces the amount of data that hits system resources (network and storage).

One major challenge with other backup systems is that storing data the way it is ingested prioritizes performance for older data: the original full snapshot can be read directly, while the most recent version must be rebuilt by applying every incremental taken since. That layout sacrifices read performance on more recent data. Rubrik circumvents this issue by applying version management in the time machine: we use a combination of a full snapshot with forward incremental and reverse incremental copies on a per-virtual-machine basis. Reverse incrementals are the fundamental mechanism that ensures read performance on the most recent data is prioritized.
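The reverse incremental idea can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not Rubrik's implementation: a snapshot is a dictionary from block index to block contents, each backup arrives as a set of changed blocks, and the class name `SnapshotChain` and its methods are hypothetical. It also ignores block deletions on ingest. The newest version is always kept fully materialized, and each ingest records an "undo" delta that turns the new version back into the previous one.

```python
from typing import Optional

Blocks = dict[int, bytes]
# A reverse delta maps block index -> old contents (None: block did not exist).
Delta = dict[int, Optional[bytes]]


class SnapshotChain:
    """Keeps the latest version as a full image plus reverse incrementals."""

    def __init__(self, full: Blocks) -> None:
        self.latest: Blocks = dict(full)  # newest version, read without replay
        self.reverse: list[Delta] = []    # reverse[i] turns version i+1 into version i

    def ingest(self, changed: Blocks) -> None:
        """Apply an incoming incremental and keep its inverse for older reads."""
        undo: Delta = {idx: self.latest.get(idx) for idx in changed}
        self.reverse.append(undo)
        self.latest.update(changed)

    def read(self, version: int) -> Blocks:
        """Rebuild a version by undoing changes from newest back to `version`."""
        if not 0 <= version <= len(self.reverse):
            raise IndexError(f"no such version: {version}")
        image = dict(self.latest)
        for undo in reversed(self.reverse[version:]):
            for idx, old in undo.items():
                if old is None:
                    image.pop(idx, None)
                else:
                    image[idx] = old
        return image
```

Reading the newest version applies no deltas at all, while each step further back in time costs one more reverse incremental. That is the trade-off described above, with the cost moved onto older versions.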

In follow-up posts, we’ll dive into other interesting challenges faced by Cerebro and the rest of our technology stack. Stay tuned!