There’s a massive elephant in the room: unstructured data. It’s valuable, and it needs to be protected. Let’s talk about why.

What’s the Deal With Unstructured Data?

First, a level-set. According to IDC, over the next five years, 90% of the world’s data will be unstructured. What does that mean? A single enterprise might have petabytes of data living on network-attached storage (NAS) systems. That’s billions of files like emails, videos, and images that are being stored in boxes and data centers and colos all across the world. This data is disorganized, so it’s difficult to manage and analyze.

Bottom line: NAS data is huge, it could contain very sensitive information (like PII), and companies likely don’t even know exactly what they’re storing. In other words, it’s in companies’ best interests to ensure that their NAS data is safe.

The Challenges with Common Protection Strategies

Savvy organizations know they need to protect their NAS data. But how? Our customers generally have used two methods. Both are standard in the industry, and both come with significant challenges.

Replication

The first commonly used tactic is for a company to replicate its NAS data to another data center, usually somewhere else in the region or world. This works well in traditional BCDR scenarios, where we’re protecting against natural disasters, equipment failure, or other tangible catastrophes.

But it’s not the best option for protecting against cybercrime like ransomware. Why? Well, remember, NAS data is unstructured and difficult to navigate, and a company might not know all the information it has. So, in duplicating its billions of files, it could duplicate malicious code. In that case, it’s just replicating ransomware from its primary environment to its secondary environment.

NDMP

The second common practice is to use Network Data Management Protocol (NDMP). It’s the right idea – a company backs up its data so that it’s able to restore if something happens to the primary environment.

The challenge? NDMP was created in 1995. The year of Batman Forever and PS1. The year that Windows 95 and Internet Explorer debuted.

It’s old technology.

NDMP just wasn’t designed to handle the sheer volume of data that companies have today. So, in addition to bottlenecks, inefficiencies, and limitations with incremental backups (see our more in-depth blog here), it can take months – months! – to back up data. And when a company has finally completed its backup from January, it’s April and the company has generated more data.

Now, say this company is hit with ransomware. It would have to restore back to January, because that’s the last data it has completely backed up. The company has lost the months of good information that it had acquired since its last backup.
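
To put rough numbers on that gap, here is a back-of-the-envelope sketch in Python. The data size (2 PB), the sustained throughput (250 MB/s), and the dates are hypothetical figures chosen for illustration, not measurements of any NDMP product. It also assumes a completed backup reflects data roughly as of the day it started.

```python
from datetime import date, timedelta

PB = 10**15  # bytes in a petabyte (decimal)
MB = 10**6   # bytes in a megabyte (decimal)


def backup_duration_days(total_bytes: int, throughput_bytes_per_s: float) -> float:
    """Days needed to copy total_bytes at a sustained throughput."""
    return total_bytes / throughput_bytes_per_s / 86_400


def data_loss_window(backup_started: date, attack_date: date) -> timedelta:
    """Time between the restorable point and the attack.

    Assumes the backup reflects data roughly as of the day it started.
    """
    return attack_date - backup_started


# Hypothetical figures: 2 PB of NAS data, 250 MB/s sustained throughput.
duration = backup_duration_days(2 * PB, 250 * MB)
started = date(2025, 1, 1)
finished = started + timedelta(days=round(duration))
lost = data_loss_window(started, attack_date=date(2025, 4, 15))

print(f"Full backup takes about {duration:.0f} days (done around {finished})")
print(f"An attack on April 15 means restoring to data that is {lost.days} days old")
```

With these assumed inputs, a single full pass takes roughly three months, which lines up with the January-to-April scenario above.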

Today’s common practices can’t keep up with the scope and scale of today’s data needs.

A Better Option

So, how can a company identify sensitive information across billions of files that aren’t arranged in an easy-to-use format? How can it keep up-to-date backups of petabytes – or more – of data? How does it ensure that it’s not just replicating malicious code when it does copy its data?

Enter Rubrik NAS Cloud Direct for AWS.

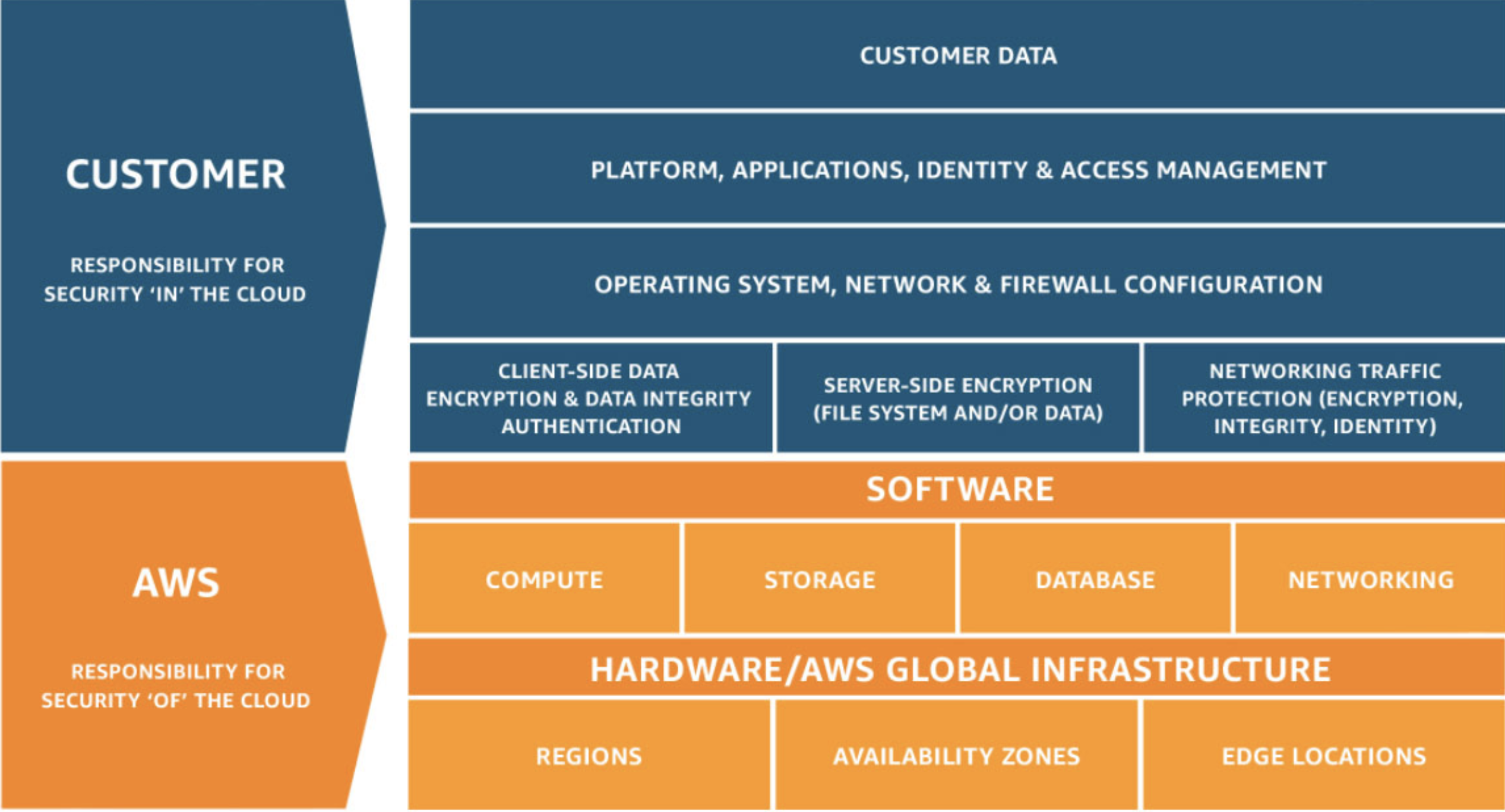

AWS already has a stellar reputation for its robust native security features. Its worldwide data centers and network are architected to provide customers security “of the cloud” via the Shared Responsibility Model. Customers are responsible for the security “in the cloud,” which is where Rubrik NAS Cloud Direct for AWS can help.

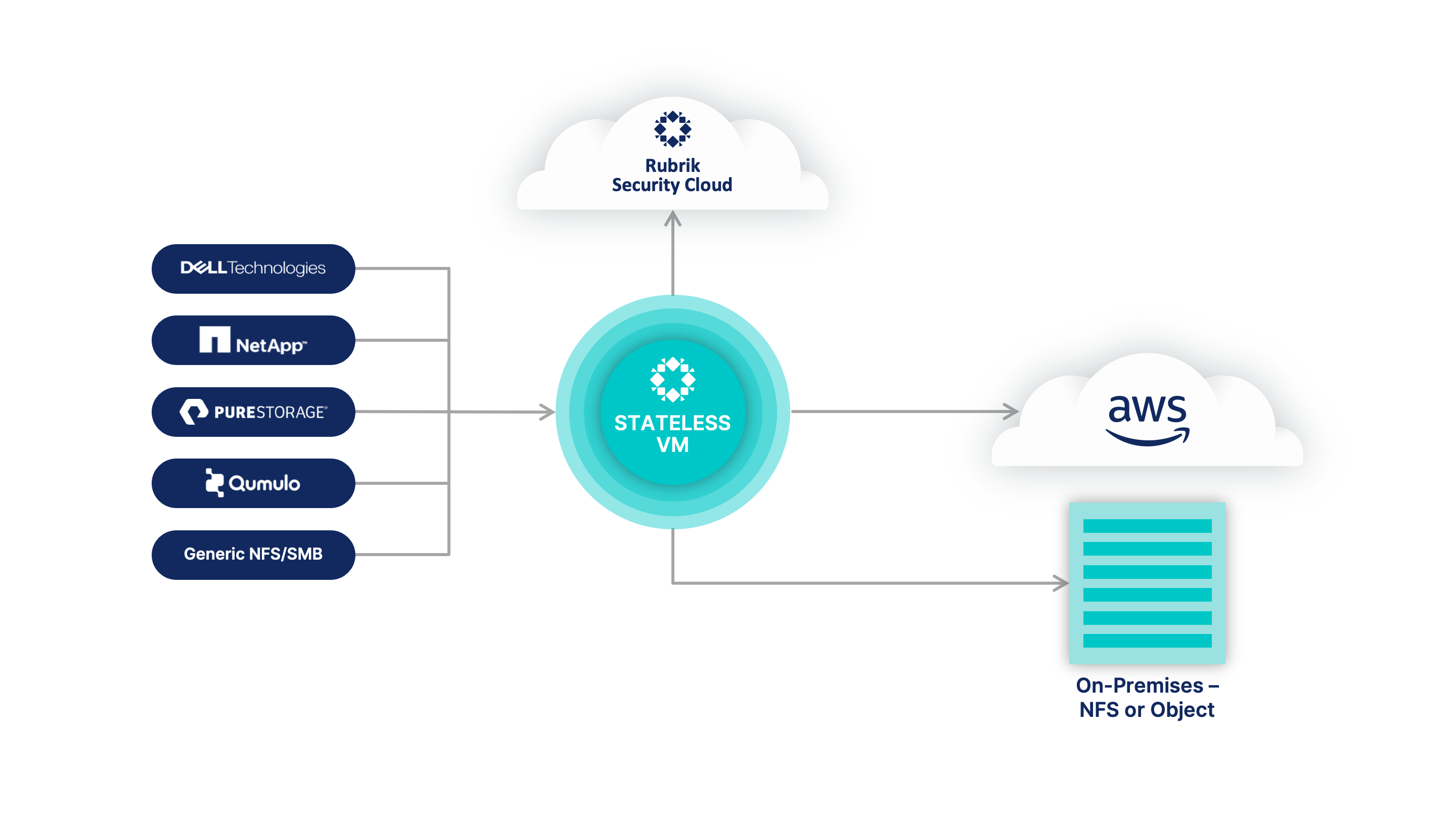

Rubrik NAS Cloud Direct is a stateless VM in our SaaS control plane that has the ability to scan billions of files. This VM can be deployed in the data center or natively in AWS, so we can scan any file workload.

What Does This Mean For You?

With Rubrik NAS Cloud Direct for AWS, you can protect file data with air-gapped, immutable backups and rapidly recover at scale. As we’re backing up your data, we’re scanning billions of files for ransomware, changes, or deletions of data: any rogue access across your data sets. And we’re doing it quickly.

NAS Cloud Direct offers seamless API connectivity with AWS, giving you additional advantages:

- Migrate to AWS: Whether moving petabytes of data into AWS file services or going cloud-native and moving into Amazon Simple Storage Service (Amazon S3), NAS Cloud Direct Data Flow enables rapid migration and synchronization of file data at scale. Data Flow jobs, such as backups and archives, are incremental-forever, meaning that the first snapshot is taken in full, and only incremental changes are captured after that. Snapshots may be scheduled to sync as often as hourly to better enable strict cutover timeframes.

- Protect AWS File Services: NAS Cloud Direct can protect SMB and NFSv3 data sets in Amazon FSx for NetApp ONTAP, Amazon FSx for Windows File Server, and Amazon FSx for OpenZFS. This is enabled by deploying the NAS Cloud Direct VM into Amazon Elastic Compute Cloud (Amazon EC2) and scaling out the number of EC2 instances as needed for protection at scale.

- Recover To AWS From On-Prem: Because NAS Cloud Direct creates a platform-independent copy of NAS data, it can recover the data to any of the SMB or NFSv3 file services offered in AWS.
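
The incremental-forever idea from the first bullet can be sketched in a few lines of Python. This is a minimal illustration of the general technique, not how NAS Cloud Direct is implemented: the `take_snapshot` function, the in-memory `files` dictionaries, and the use of SHA-256 content hashes to detect changes are all assumptions made for the example. A production system would more likely rely on file metadata or change tracking than on hashing every file.

```python
import hashlib
from typing import Dict, List, Optional, Tuple


def fingerprint(content: bytes) -> str:
    """Content hash used here (hypothetically) to detect changed files."""
    return hashlib.sha256(content).hexdigest()


def take_snapshot(
    files: Dict[str, bytes],
    previous_index: Optional[Dict[str, str]],
) -> Tuple[Dict[str, str], List[str], List[str]]:
    """Return (new index, paths to copy, paths deleted since last snapshot).

    With no previous index this is the first, full snapshot: every file
    is copied. Afterwards, only new or modified files are copied.
    """
    index = {path: fingerprint(data) for path, data in files.items()}
    if previous_index is None:
        return index, sorted(index), []
    to_copy = sorted(p for p, h in index.items() if previous_index.get(p) != h)
    deleted = sorted(p for p in previous_index if p not in index)
    return index, to_copy, deleted


# First snapshot: taken in full.
day1 = {"a.txt": b"alpha", "b.txt": b"bravo"}
index1, copy1, deleted1 = take_snapshot(day1, None)

# Later snapshot: only the modified and new files are captured.
day2 = {"a.txt": b"alpha", "b.txt": b"bravo v2", "c.txt": b"charlie"}
index2, copy2, deleted2 = take_snapshot(day2, index1)

print(copy1)  # every file
print(copy2)  # only b.txt (modified) and c.txt (new)
```

Because each run only moves what changed, the hourly sync schedule mentioned above stays practical even when the full data set is very large.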

Now, your valuable NAS data is protected. You can move data with highly parallelized, latency-aware file movement and dynamic resource throttling to achieve peak performance and meet business SLAs. And, you can send NAS data directly to an on-prem storage target or the least expensive cold tiers of AWS storage with true incremental-forever backups.

What about cyberthreats? Well, you’ll have immutable snapshots of your NAS file data that cannot be modified. You can use global predictive search to find exactly what you are looking for, with granularity down to the file level. And, you can recover anywhere – on-prem or in AWS, to the original source or to an alternate target.

It’s time to let the 1990s go and get your NAS data – all of your NAS data – protected with Rubrik NAS Cloud Direct for AWS.

For more information, please visit us on AWS Marketplace here.