In our previous blog post, we discussed the importance of product quality, the different types of testing we rely on at Rubrik, and the pivotal role automated testing plays in ensuring the quality of our products. Relying heavily on Unit, Component, and Integration testing is important, but there will be code paths that these types of tests cannot cover.

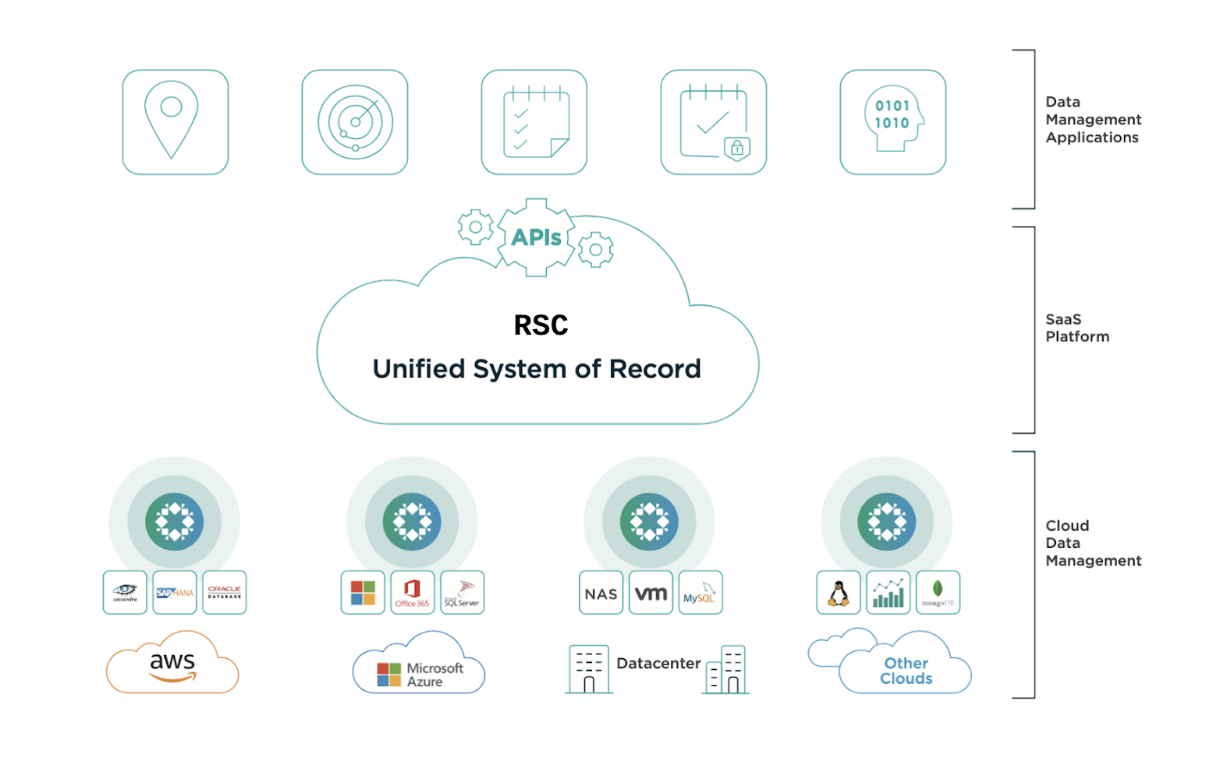

In the picture above, we can see a high-level view of our solution. Our SaaS platform, Rubrik Security Cloud (RSC), acts as a control plane that our customers use to protect their workloads. Data management happens in the Cloud Data Management (CDM) systems linked to RSC.

In this blog post, let’s dive deeper into one key aspect of automated testing: end-to-end (E2E) testing, which ensures our solutions function seamlessly from start to finish so that our products meet customer expectations. Before we go any further, let’s first understand the challenges we face.

Challenges

While End-to-End testing is powerful and comprehensive, it poses a unique set of challenges, especially for Rubrik, given the nature of our products.

E2E testing can be expensive and time-consuming to manage, primarily because of its many dependencies and the potential for issues deep in the technology stack. The challenges are particularly distinctive at Rubrik because our products fundamentally deal with data: storing it, keeping an inventory of it, and making it available in a timely manner all play a big role.

Unlike in typical SaaS products, E2E testing at Rubrik is an extensive operation because our tests require many resources, such as Google Cloud Platform (GCP) deployments, CDM clusters, and virtual machines, all of which are expensive.

Other challenges we’ve faced at Rubrik include:

Infrastructure instability

Long-running tests, such as E2E tests, are more prone to failures caused by infrastructure instability, which can stem from network flakiness, hardware failures, resource unavailability, and similar problems.

Low level component breakages

Breakages in low-level components can adversely impact multiple dependent components, creating a domino effect that compounds the issue.

Common test library breakages

Each component maintains its own libraries to validate its features, and shared common libraries are used by multiple components during E2E testing. Therefore, any breakage in these shared libraries affects tests across multiple components.

And let’s not forget another challenge distinctly associated with Rubrik - data integrity. Given the nature of our work, we understand the importance of data integrity and have implemented stringent measures to prevent data corruption. Our robust and sophisticated data integrity checks within the product and during testing ensure the utmost protection and reliability of our products.

Despite these challenges, we attribute the success of our E2E testing strategy to our excellent engineers and their robust problem-solving skills, coupled with our relentless commitment to uncovering potential issues.

End-To-End Testing

As part of our E2E test strategy, we cover both functional and non-functional aspects of our products, RSC and CDM. We employ a variety of E2E tests to ensure top-notch quality and customer satisfaction. The following sections describe the different types of E2E testing we employ, using one of our products as an example. These types of testing are applied across all the products we build, and the ones described below are some of the most important ones we’d like to share with you.

UI Testing

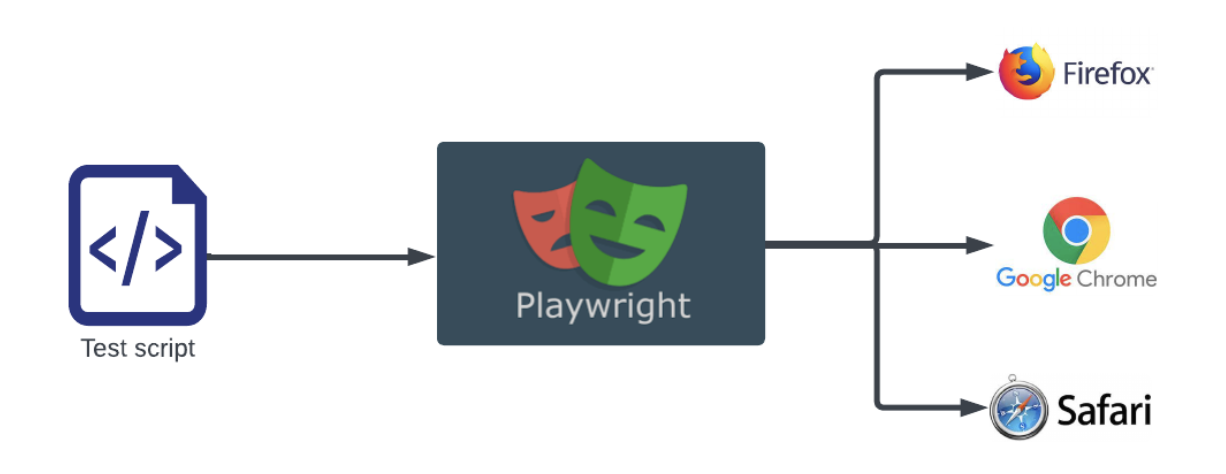

A cornerstone of our testing strategy at Rubrik is UI testing. The goal is to ensure a first-class product without relying on an exhaustive manual qualification process. We use Playwright, a browser automation tool, to control and test the RSC user interface within our E2E tests.

Playwright allows us to simulate a user’s interaction with the browser for specific workflows and verify that the UI responds as expected. While the UI plays a crucial role, it is still a relatively small part of the entire E2E testing process. Generally, our E2E tests make direct API calls; with UI testing, those calls are made through the UI instead. Including the UI in our E2E tests gives us confidence that critical customer workflows operate as intended end to end, from the user interface right through to the database.

Performance Testing

Our ultimate objective is to execute customer data operations, such as backup and restore, promptly and stably. To measure our products’ performance, we rely on two techniques:

Peacetime benchmarking

Saturation benchmarking

Peacetime Benchmarking

We use this technique to understand the performance of a single operation, such as the backup of a single VM, when all system resources are available to it. Other services and internal background operations still run within the system during the test, because they are necessary for the system to perform as expected.

To give you better insight, an operation can be a backup, an export, or a mount of a snapshot of a single VMware VM. Even though the system can back up multiple VMware VMs at once, we run just one operation to understand the maximum performance of the system for that particular operation. Is this enough to understand the performance of the system? Obviously not. Our customers run many workloads concurrently, and this is where saturation benchmarking helps us.
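A peacetime measurement can be sketched as follows, assuming a hypothetical `run_operation` hook that performs exactly one operation (for example, backing up one VM) and returns the number of bytes it moved. Repeating the run and taking the median is our own assumption for damping noise; the post does not say how many repetitions are used.

```python
import statistics
import time
from typing import Callable, Dict


def peacetime_benchmark(run_operation: Callable[[], int], repetitions: int = 3) -> Dict[str, float]:
    """Time one operation at a time on an otherwise idle system.

    run_operation is a hypothetical hook: it performs a single operation
    and returns the number of bytes that operation moved.
    """
    durations = []
    throughputs = []
    for _ in range(repetitions):
        start = time.perf_counter()
        bytes_moved = run_operation()  # exactly one operation in flight
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero-length timing
        durations.append(elapsed)
        throughputs.append(bytes_moved / elapsed)
    # The median damps one-off hiccups across repetitions.
    return {
        "median_seconds": statistics.median(durations),
        "median_bytes_per_second": statistics.median(throughputs),
    }
```

Because nothing else competes for resources, the reported throughput approximates the upper bound for that single operation type.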

Saturation Benchmarking

We use this technique to understand the optimal performance of the system by saturating one or more system resources, such as input/output (I/O), CPU, memory, and/or the network. Which resources get saturated depends on the platform under test.

To find the saturation point of the system, we start by kicking off a small number of operations and gradually increase that number until the aggregate throughput stops improving or the time taken to complete the operations starts to degrade.

For example, we start by backing up 4 different VMware VMs and gradually increase the count all the way to 20, while keeping a check on the aggregate throughput.

The above is just one example of what we do. We do extensive performance testing on all the workloads Rubrik supports.
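The ramp described above can be sketched as a simple loop. This is a minimal illustration, assuming a hypothetical `measure(n)` hook that starts `n` concurrent operations, waits for them, and returns the aggregate throughput and mean completion time. The 4-to-20 range in steps of 4 mirrors the VMware example; the 5% "no improvement" and 25% "degradation" thresholds are placeholder assumptions, not values from this post.

```python
from typing import Callable, List, Tuple

# Hypothetical hook: measure(n) -> (aggregate_throughput, mean_completion_seconds)
Measure = Callable[[int], Tuple[float, float]]


def find_saturation_point(
    measure: Measure,
    start: int = 4,
    stop: int = 20,
    step: int = 4,
    min_gain: float = 0.05,      # assumed: under 5% more throughput counts as no improvement
    max_slowdown: float = 0.25,  # assumed: over 25% longer completion counts as degradation
) -> Tuple[int, List[Tuple[int, float, float]]]:
    history: List[Tuple[int, float, float]] = []
    best_n = start
    prev_tput, prev_time = 0.0, 0.0
    for n in range(start, stop + 1, step):
        tput, mean_time = measure(n)
        history.append((n, tput, mean_time))
        if history[:-1]:  # compare against the previous load level
            gain = (tput - prev_tput) / prev_tput
            slowdown = (mean_time - prev_time) / prev_time
            if gain < min_gain or slowdown > max_slowdown:
                break  # extra load no longer helps; the previous level is the saturation point
        best_n = n
        prev_tput, prev_time = tput, mean_time
    return best_n, history
```

For instance, if throughput grows linearly up to 12 concurrent backups and then flattens, the loop stops after trying 16 and reports 12 as the saturation point.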

Security Testing

As a data security company, we build products modeled after a Zero-Trust Data Security architecture. Keeping the product secure is of paramount importance, considering the data our customers’ systems hold. Our exhaustive security practices safeguard our products against all known vulnerabilities, and a powerful offensive security program helps us fend off threats.

The Process

Our vulnerability management process spans multiple important steps:

Identification of Vulnerabilities: We use industry-standard tools to scan for and detect security flaws in our public cloud and colocation assets. Regular internal and external scans help us identify anomalies promptly.

Triage: All potential vulnerabilities are immediately assessed for their impact. They are classified according to severity and assigned to the appropriate team for resolution.

Reporting: Detailed reports for all identified vulnerabilities are pushed to ticketing boards, informing the respective team about the issues and the necessary steps for rectification. We track the reported vulnerabilities and remediation status, publishing regular reports for business and product leaders.

Remediation: This stage involves following up on remediation efforts against the agreed SLA timeline and rescanning for critical vulnerabilities to confirm they have been resolved.
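The triage and remediation steps above can be illustrated with a small sketch that classifies a finding by severity and computes its remediation due date. It assumes findings carry a CVSS v3.x base score and uses the standard CVSS qualitative rating bands; the `SLA_DAYS` values and the `Ticket` and `triage` names are hypothetical, since this post does not state Rubrik's actual SLA timelines or tooling.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical remediation SLAs in days (placeholders, not Rubrik's real values).
SLA_DAYS = {"Critical": 7, "High": 30, "Medium": 90, "Low": 180}


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Ticket:
    finding_id: str
    team: str
    severity: str
    due: Optional[date]  # None when no remediation SLA applies


def triage(finding_id: str, score: float, owner_team: str, found_on: date) -> Ticket:
    """Classify a finding and assign it, with a due date, to the owning team."""
    severity = cvss_severity(score)
    days = SLA_DAYS.get(severity)
    due = found_on + timedelta(days=days) if days is not None else None
    return Ticket(finding_id, owner_team, severity, due)
```

A reporting job could then group open tickets by severity and flag any whose `due` date has passed, which corresponds to the follow-ups described in the Reporting and Remediation steps.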

Patches are applied meticulously to safeguard the production environment. While we’re unable to promise SLAs around the application of patches, we ensure that any discernible downtime for Rubrik Security Cloud SaaS is minimized.

Penetration Testing

Our penetration testing adheres to the Open Web Application Security Project (OWASP) framework. Independent third-party security experts perform these tests prior to deployment to detect potential security vulnerabilities. Penetration testing takes place regularly, including for our support tunnel/teleport feature and the underlying product infrastructure.

Stress Testing

Our goal with Stress Testing is to stress every layer of our product stack. In the case of CDM, we stress the system with mixed workloads such as VMware, MSSQL, and Oracle. The backup, recovery, replication, and archival paths are tested thoroughly for all workloads, and all these operations run in parallel under aggressive, predefined service level agreements (SLAs). We also run periodic orchestrated disaster recovery (DR) tests.

While all the workloads are running on our in-house systems, we make sure the systems also go through the upgrade process, just as our customers’ setups do. This is one of the points in our release cycle where we uncover many systemic and stability-related issues. This testing also uncovers resource consumption issues related to memory, space, and disk. Typically, the CDM clusters used for this purpose have either 4 or 8 nodes.
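The parallel, SLA-bound runs described in this section can be sketched with a thread pool that starts a mix of operations at once and records whether each finished within its SLA. The operations are hypothetical hooks (a VMware backup, an MSSQL restore, and so on), `run_mixed_workloads` is an illustrative name, and the SLA values are placeholders rather than Rubrik's actual SLAs.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

# (name, operation, sla_seconds); operations are hypothetical workload hooks.
Job = Tuple[str, Callable[[], None], float]


def _timed(operation: Callable[[], None]) -> float:
    """Run one operation and return its wall-clock duration in seconds."""
    start = time.perf_counter()
    operation()
    return time.perf_counter() - start


def run_mixed_workloads(jobs: List[Job], max_workers: int = 8) -> Dict[str, dict]:
    """Start all jobs in parallel and record whether each one met its SLA.

    Job names must be unique, since they key the report.
    """
    report: Dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        pending = [(name, pool.submit(_timed, op), sla) for name, op, sla in jobs]
        for name, future, sla in pending:
            try:
                elapsed = future.result()
                report[name] = {"seconds": elapsed, "met_sla": elapsed <= sla, "error": None}
            except Exception as exc:  # a failed operation counts as an SLA miss
                report[name] = {"seconds": None, "met_sla": False, "error": repr(exc)}
    return report
```

Treating a failed operation as an SLA miss keeps the report focused on one question per job: did it complete in time under load?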

Scale Testing

With Scale Testing, our aim is to scale the CDM system both up and out. We scale up the total number of objects to 10 to 15% beyond what customer setups have in the field, pushing the system past its normal operating boundaries. We also test these cases while scaling out, i.e., by adding more nodes to the CDM clusters. We don’t run operations as aggressively as in Stress Testing, but we still cover mixed workloads by running backup and recovery operations in parallel. Beyond the issues described above for Stress Testing, we also make sure that the UI holds up under such loads. Typically, clusters used for this purpose have anywhere from 80 to 100 nodes.

Longevity Testing

To uncover age-related or stability issues in CDM, we rely on our stress and scale clusters. These clusters are maintained across multiple releases as the feature set and workloads evolve. We also test the archival and replication services thoroughly; for archival testing, we use real cloud provider services that Rubrik supports, such as AWS and Azure. The upside is that these clusters uncover many issues before customer setups can run into them, and our excellent engineering team brings our internal clusters back online by applying hotfixes whenever we hit an issue.

On top of the workloads that run continuously for several months and across releases, we run systemic regression and resiliency pipelines that exercise some of our customer workloads, especially larger configurations, where we test the backup and recovery of a multi-TB VM or DB along with some fault injection.

Upgrade Testing

Upgrading is the crucial first step our customers’ systems must take to access any of our latest features or enhancements. We understand that if an upgrade fails, especially when the entire system is going through it, we are doomed. Today, our CDM product offers rolling upgrades, which upgrade one node at a time while keeping the rest of the nodes operational. The main purpose is to avoid any downtime for customer setups while upgrades are in progress.
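A rolling upgrade can be sketched as a loop that takes down one node at a time and only proceeds when its peers are healthy. This is a simplified illustration, not CDM's implementation: `rolling_upgrade`, `upgrade_node`, and `is_healthy` are hypothetical hooks standing in for the real upgrade and health-check machinery.

```python
from typing import Callable, List


def rolling_upgrade(
    nodes: List[str],
    upgrade_node: Callable[[str], None],  # hypothetical: upgrades one node, returns when it rejoins
    is_healthy: Callable[[str], bool],    # hypothetical: reports whether a node is serving
) -> None:
    """Upgrade nodes one at a time so the rest of the cluster keeps serving."""
    for node in nodes:
        # Only take this node down if every other node is currently serving.
        peers = [n for n in nodes if n != node]
        if not all(is_healthy(n) for n in peers):
            raise RuntimeError(f"refusing to upgrade {node}: peers are not healthy")
        upgrade_node(node)
        # Do not move on until the upgraded node is back in service.
        if not is_healthy(node):
            raise RuntimeError(f"{node} did not come back healthy after upgrade")
```

With four nodes, at most one is out of service at any moment, so three keep handling customer operations throughout the upgrade.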

As part of our automated E2E Functional tests, we have coverage for upgrade success. If we run into a failure at certain stages of the upgrade, we expect an automatic rollback, and this behavior is tested by our upgrade rollback tests. Our Upgrade Testing also verifies that previously failed upgrades can be resumed and completed successfully. Both rollback and resume are tested by injecting faults at different stages of the upgrade cycle. While some functional tests cover these cases with minimal workloads running, our Multi Feature Upgrade tests make sure that upgrades succeed while multiple workloads are running on our systems. Also, as mentioned above, our in-house clusters used for Stress, Scale, and Longevity testing go through upgrades, which helps us catch almost all upgrade issues in-house.
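The rollback and resume tests described above can be illustrated with a small simulated upgrade that supports fault injection per stage. Everything here is hypothetical: the stage names, the `Upgrade` class, and especially the assumption that failures before a "point of no return" roll back automatically while later failures leave a resumable FAILED state. The post only says that failures at certain stages trigger a rollback and that failed upgrades can be resumed.

```python
from typing import Iterable, List, Optional

# Hypothetical stages; the real CDM upgrade stages are not listed in this post.
STAGES = ["precheck", "stage_packages", "migrate_metadata", "switch_version", "postcheck"]
# Assumption: failures before this stage auto-roll back; failures at or after it are resumable.
POINT_OF_NO_RETURN = STAGES.index("switch_version")


class InjectedFault(Exception):
    """Raised by the test harness to simulate a failure at a given stage."""


class Upgrade:
    def __init__(self, fault_stages: Optional[Iterable[str]] = None) -> None:
        self.fault_stages = set(fault_stages or ())  # each injected fault fires once
        self.completed: List[str] = []
        self.state = "NOT_STARTED"
        self.version = "old"

    def _run_stage(self, stage: str) -> None:
        if stage in self.fault_stages:
            self.fault_stages.discard(stage)  # so a later resume or retry can get past it
            raise InjectedFault(stage)
        if stage == "switch_version":
            self.version = "new"
        self.completed.append(stage)

    def run(self) -> str:
        """Start a fresh upgrade, or resume a FAILED one from its first incomplete stage."""
        for stage in STAGES[len(self.completed):]:
            try:
                self._run_stage(stage)
            except InjectedFault:
                if STAGES.index(stage) < POINT_OF_NO_RETURN:
                    self.completed.clear()  # automatic rollback: discard all progress
                    self.version = "old"
                    self.state = "ROLLED_BACK"
                else:
                    self.state = "FAILED"  # keep progress so the upgrade can be resumed
                return self.state
        self.state = "COMPLETED"
        return self.state
```

Creating one `Upgrade(fault_stages={stage})` per entry in `STAGES` yields a rollback or resume test for every stage: the first `run()` should roll back or fail, and a second `run()` should complete on the new version.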

Conclusion

In conclusion, E2E tests play a critical role in our automated testing structure, helping to identify glitches that might affect user workflows. By emulating real user behavior, we expose and correct issues, ensuring our solutions deliver a seamless and efficient user experience.

In the next segment of the Product Quality at Rubrik blog series, "Automated Testing: Iterate and Deliver Faster", we will dive into how we relieved our developers of some mundane tasks. Keep an eye out for insights on accelerating the software development lifecycle by making the most of automation capabilities.
