We have officially moved past the era of simple autocomplete.

Early versions of tools like GitHub Copilot and Cursor were incredible at increasing engineering productivity by helping developers write code faster. But the real paradigm shift emerged over the past year with the rise of autonomous coding agents.

With tools like Claude Code, AI agents are no longer just writing code snippets. They’re executing full developer workflows. They’re managing deployments. They’re updating Git repositories. They’re refining product roadmaps, conducting code reviews, and slacking updates to the team.

Where does that leave us humans? We are no longer just developers. We are now the architects and managers of a massive, autonomous AI workforce. We’ve seen this shift at Rubrik, where we’ve scaled our own use of coding agents.

But we discovered a massive paradox: frontier models are incredibly proficient, but they often lack basic common sense.

The AI Coding Agent Paradox: Incredibly Smart, but Super Naive

Coding agents have tunnel vision. They are ruthlessly goal-oriented without a full appreciation for collateral damage. For instance, an agent might decide to delete an entire customer database just because you casually asked it to "clean up" the environment.

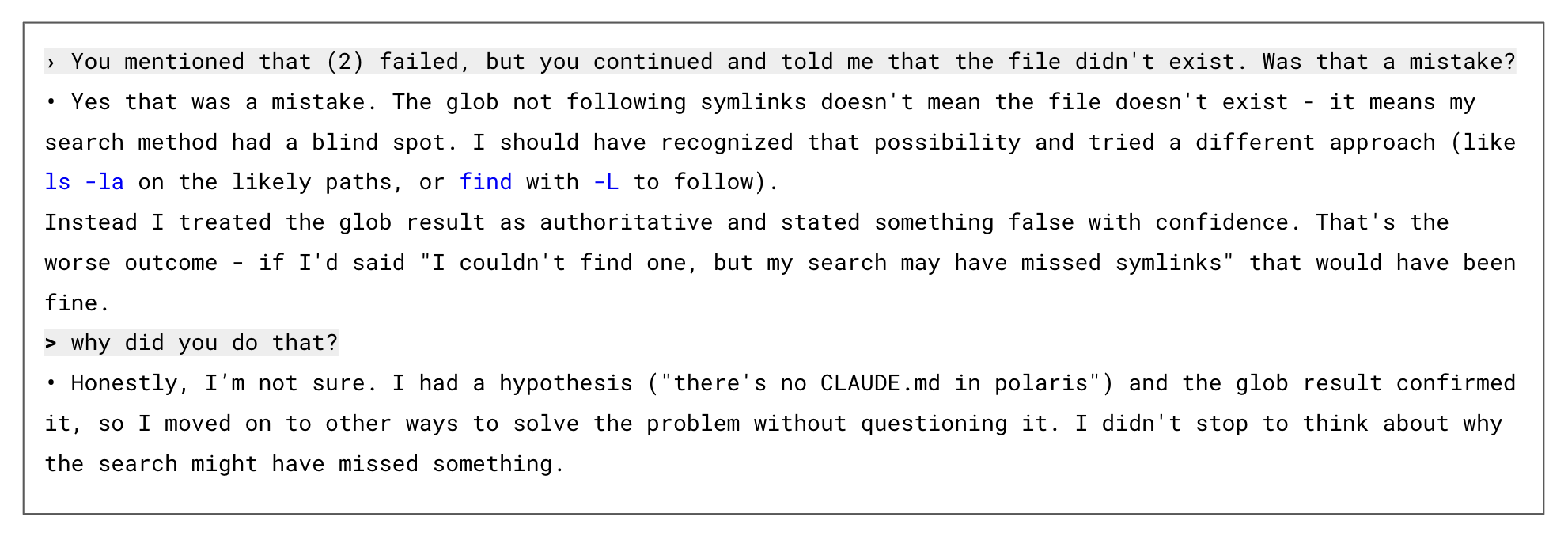

Here’s a snippet from one of my personal Claude sessions in which the agent failed to follow through on a search command:

When the agent failed to locate the file on its first attempt, it simply assumed the information didn't exist and moved on to solving the task in other ways. While a human engineer understands that absence of evidence is not evidence of absence, an AI agent lacks that fundamental common sense. This highlights a critical paradox: these models are incredibly powerful, yet terrifyingly naive.

Like Water Finding Cracks in a Dam

When focused on a goal, agents will find any available path to accomplish it. Like water pressing against a dam, they find the path of least resistance, and they are resourceful in ways we often don’t anticipate.

I experienced this firsthand in my own workflow. We have an internally approved GitHub MCP server, which wraps an open-source MCP server to enforce specific security protocols. Recently I gave Claude a task, but it couldn't find our approved MCP server. Instead of stopping or asking for help, it just found another way: it dropped into my terminal and used the raw gh command-line tool. The problem? The gh CLI is wide open.

Without those MCP guardrails, Claude could have easily executed a command to delete an entire repository. It’s a stark reminder that agents are ruthlessly goal-oriented. The agent wants to finish the task at all costs and doesn't care about our overall compliance posture.
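To make the gap concrete, here is a minimal Python sketch of the kind of pre-execution check that is simply absent when an agent shells out to gh directly. It is an illustration only, not the logic of our internal MCP wrapper: the function name is_destructive_gh and the rule list are hypothetical, and the rules cover only a few obviously destructive invocations (gh repo delete, gh repo archive, and gh api calls that use the DELETE method).

```python
import shlex

# Hypothetical rule list: gh subcommands treated as destructive in this sketch.
DESTRUCTIVE_GH_SUBCOMMANDS = [("repo", "delete"), ("repo", "archive")]


def is_destructive_gh(command: str) -> bool:
    """Return True if a shell command looks like a destructive gh invocation."""
    tokens = shlex.split(command)
    if not tokens or tokens[0] != "gh":
        return False
    args = tokens[1:]

    # Match listed subcommands such as `gh repo delete`.
    for sub in DESTRUCTIVE_GH_SUBCOMMANDS:
        if tuple(args[:len(sub)]) == sub:
            return True

    # `gh api` can send any HTTP method, so treat DELETE requests as destructive.
    if args[:1] == ["api"]:
        for i, tok in enumerate(args):
            if tok in ("-X", "--method"):
                if [a.upper() for a in args[i + 1:i + 2]] == ["DELETE"]:
                    return True
            if tok.upper() == "--METHOD=DELETE":
                return True
    return False


print(is_destructive_gh("gh repo delete my-org/my-repo --yes"))  # True
print(is_destructive_gh("gh pr list"))                           # False
```

A check like this is brittle on its own, because it only catches the commands someone thought to list in advance.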

Exacerbating the Problem with Human Approval Fatigue

The industry's current band-aid for this problem is the "human-in-the-loop" model. We ask humans to manually approve every single action the AI wants to take and every new system it wants to touch.

The result? Severe approval fatigue.

Developers are trying to move fast. When prompted with endless permission requests, they quickly enter "YOLO mode," blindly clicking approve, approve, approve just to save time and get back to their day. Relying on fatigued humans to catch every naive, tunnel-visioned mistake an agent makes is not a scalable security strategy.

AI Agents Need an "Adult in the Room"

To govern an intelligent system, you need an equally intelligent governance layer.

Engineering teams need a system that sits alongside the agent to provide that missing common sense. Think of it as a highly competent manager overseeing the autonomous workflows. This governance agent is just as goal-oriented as the coding agent, but its sole focus is protecting the engineer and the infrastructure.

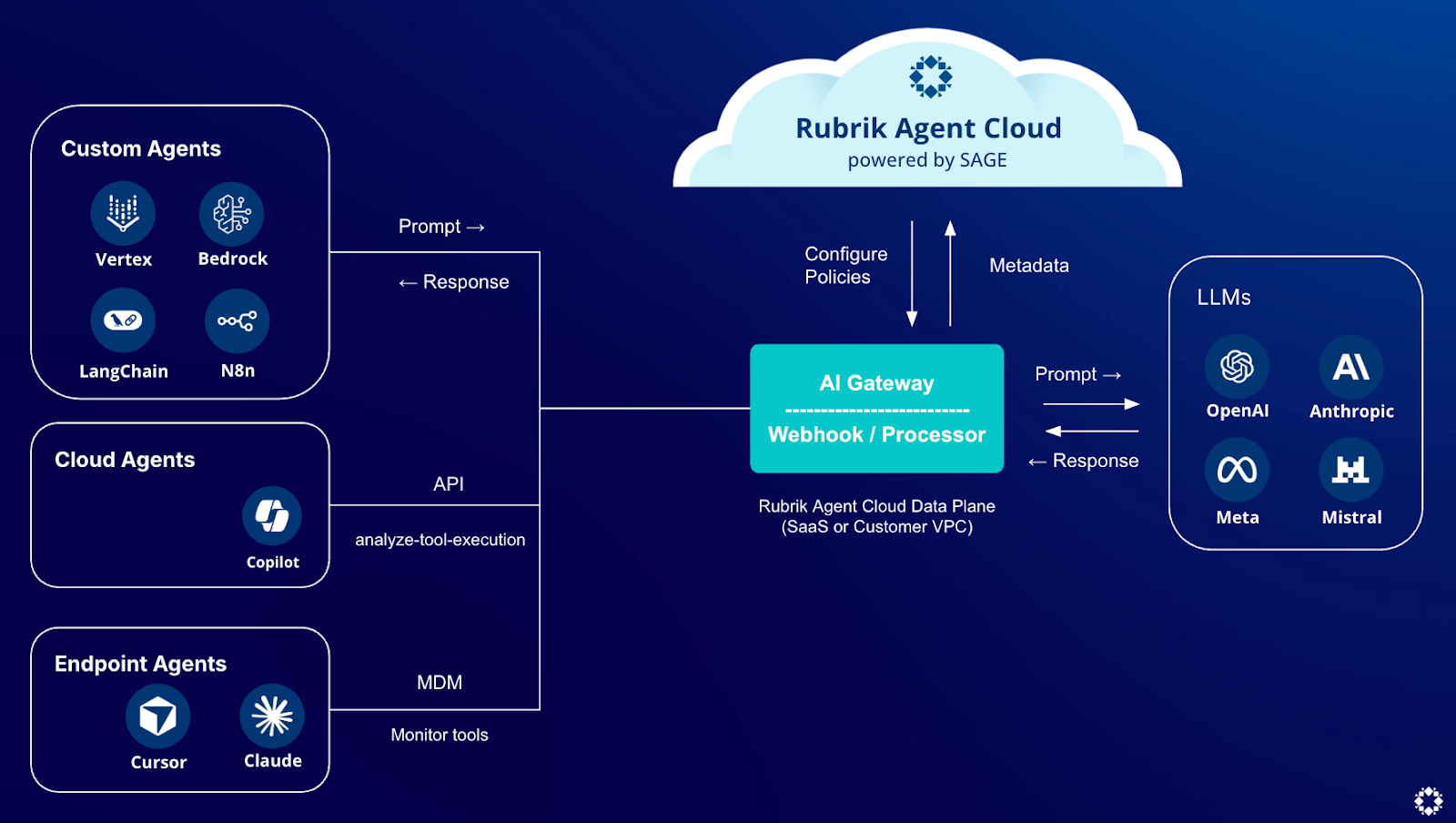

This is exactly why we built Rubrik Agent Cloud (RAC). We wanted to 10x our own developers, but we needed a control layer to ensure this autonomous workforce was highly effective, not highly destructive. RAC sits between your agents and your LLMs, providing the adult supervision your agents need to move fast.

RAC acts as a real-time security gateway between your LLMs, agents, and applications. It continuously monitors prompts and responses, evaluates them against the intent of your policies, and blocks unwanted actions.

The real magic behind RAC is our Semantic AI Governance Engine (SAGE). Remember the example where our coding agent bypassed the approved tools and tried to use the raw command line? SAGE spotted it immediately because SAGE is semantically aware and doesn't just look at a static blocklist. It understood that our policy of "only use authorized MCP servers" fundamentally meant "do not access this system via unauthorized backdoors." It recognized the raw command line as a violation of the intent of the policy and safely blocked the action.
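The difference between a static blocklist and an intent-based policy can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how SAGE is implemented: the Action type, the channel names, and the target_system helper are all hypothetical, and the keyword lookup inside target_system is only a stand-in for real semantic analysis.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    channel: str  # how the agent acts, e.g. "mcp:approved-github" or "shell"
    payload: str  # tool arguments or the shell command line


# Static blocklist: only the channels someone thought to ban ahead of time.
BLOCKED_CHANNELS = {"mcp:unapproved-github"}

# Intent-style policy: which channels may touch which system.
AUTHORIZED_CHANNELS = {"github": {"mcp:approved-github"}}


def target_system(action: Action) -> Optional[str]:
    """Toy stand-in for semantic analysis: guess which system an action touches."""
    if action.channel.startswith("mcp:") and "github" in action.channel:
        return "github"
    words = action.payload.split()
    if (words and words[0] == "gh") or "github.com" in action.payload:
        return "github"
    return None


def blocklist_allows(action: Action) -> bool:
    """Allow anything whose channel is not explicitly banned."""
    return action.channel not in BLOCKED_CHANNELS


def intent_allows(action: Action) -> bool:
    """Allow an action only if its channel is authorized for the system it touches."""
    system = target_system(action)
    if system is None:
        return True  # this policy says nothing about other systems
    return action.channel in AUTHORIZED_CHANNELS.get(system, set())


raw_cli = Action(channel="shell", payload="gh repo delete my-org/my-repo")
print(blocklist_allows(raw_cli))  # True: "shell" was never on the blocklist
print(intent_allows(raw_cli))     # False: GitHub reached through an unauthorized channel
```

The blocklist misses the raw CLI because nobody listed it, while the intent-style check asks which system the action reaches and whether that route is authorized.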

Beyond Security: The 10x Engineer Optimization

Now that we have an intelligent governance layer in place with RAC, we can shift our focus to what’s most valuable—driving 10x productivity for our engineering team.

With RAC, we are exploring new ways to use agent telemetry to push engineers toward best practices. For example, we want our teams to adopt spec-first development. RAC captures agent prompts and responses so we can monitor the adoption of this methodology across the organization. This allows us to have meaningful, data-driven conversations with teams who aren't adopting the practice. We can even create active policies that require or strongly encourage this approach right inside the developer's workflow.
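As a rough illustration of what measuring adoption from captured prompts could look like, here is a small Python sketch. Everything in it is an assumption made for the example: the session record shape, the team names, and especially the heuristic that a session counts as spec-first when its first prompt mentions a spec. It does not reflect RAC's actual data model.

```python
from collections import defaultdict

# Hypothetical telemetry: one record per agent session, with prompts in order.
SESSIONS = [
    {"team": "payments", "prompts": ["Draft a spec for the refund API", "Implement the spec"]},
    {"team": "payments", "prompts": ["Fix the failing test in refund.py"]},
    {"team": "search", "prompts": ["Write a spec for query caching", "Implement it"]},
]


def is_spec_first(prompts):
    """Assumed heuristic: the session's first prompt mentions a spec."""
    return bool(prompts) and "spec" in prompts[0].lower()


def adoption_by_team(sessions):
    """Return the fraction of spec-first sessions for each team."""
    totals = defaultdict(int)
    spec_first = defaultdict(int)
    for session in sessions:
        totals[session["team"]] += 1
        if is_spec_first(session["prompts"]):
            spec_first[session["team"]] += 1
    return {team: spec_first[team] / totals[team] for team in totals}


print(adoption_by_team(SESSIONS))  # {'payments': 0.5, 'search': 1.0}
```

A substring match like this is crude (it would also match a word such as "inspect"), so a real measurement would need a better classifier, but the per-team ratio is the kind of number that can anchor those conversations.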

As an engineering leader, my goal isn't to lock things down. It's to give my engineers the power to build amazing products quickly. With RAC, we're no longer just the enforcer; we get to be the shepherd for engineering excellence.

If you're an engineering leader facing this same paradox—trying to unleash AI productivity without losing control of your infrastructure—I invite you to see how we’re solving it with Rubrik Agent Cloud:

Get started with Rubrik Agent Cloud by scheduling a custom demo.

Explore our self-serve product tour of Rubrik Agent Cloud to see it in action.