In today’s digital marketplace, customer expectations have skyrocketed. There’s very little tolerance for transaction delays and service-level gaps, so even a small window of digital downtime can mean losses in productivity, sales, and customer loyalty. For that reason, every organization needs a robust disaster recovery (DR) plan.

A disaster recovery plan defines how – and how quickly – you’ll recover from an incident that unexpectedly renders critical apps and data inaccessible. As such, it prepares you for getting back online fast, so you can minimize damage to your business.

Key Recovery Objectives

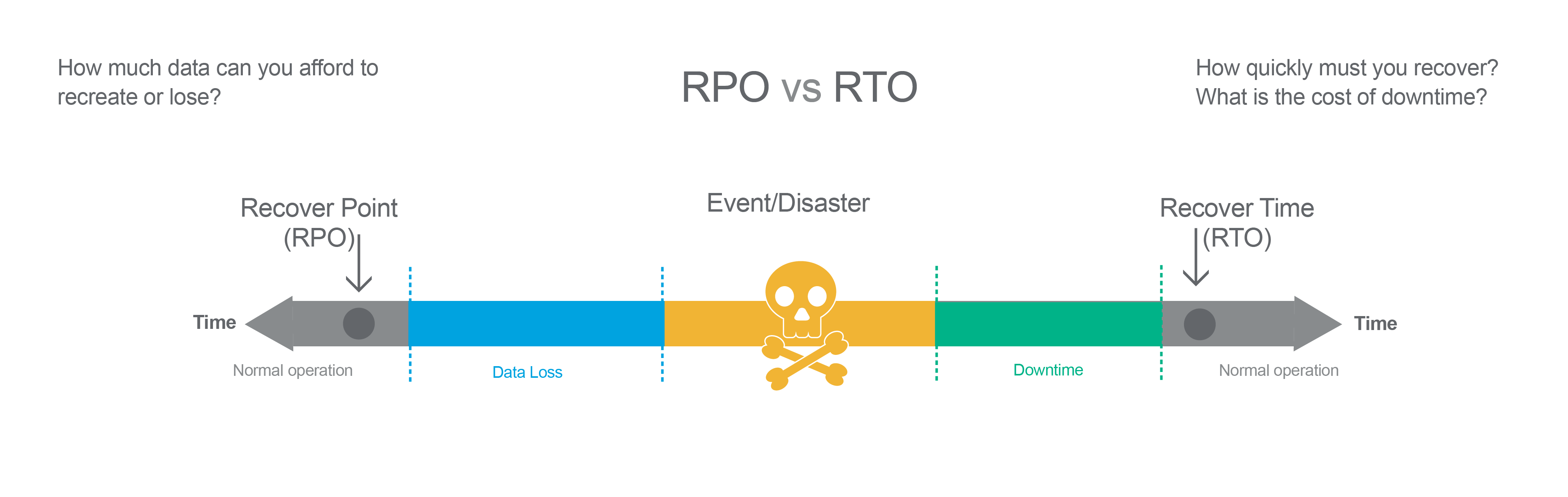

Among the components of a DR plan are two key parameters that define how long your business can afford to be offline and how much data loss it can tolerate. These are the Recovery Time Objective (RTO) and Recovery Point Objective (RPO).

RTO is the goal your organization sets for the maximum length of time it should take to restore normal operations following an outage or data loss.

RPO is your goal for the maximum amount of data the organization can tolerate losing. This parameter is measured in time: the span between your last valid data backup and the moment a failure occurs. For example, if you experience a failure now and your last full data backup was 24 hours ago, you stand to lose up to 24 hours of data, so you meet your objective only if your RPO is 24 hours or longer.
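To separate the objectives from what actually happens during an incident, here is a minimal Python sketch. The timestamps, the 24-hour RPO, and the 2-hour RTO are hypothetical values chosen for illustration, and the function names are not taken from any particular DR tool.

```python
from datetime import datetime, timedelta


def data_loss_window(last_backup: datetime, failure: datetime) -> timedelta:
    """Time between the last valid backup and the failure (data at risk)."""
    return failure - last_backup


def meets_objective(actual: timedelta, objective: timedelta) -> bool:
    """An objective is met when the measured value does not exceed it."""
    return actual <= objective


# Hypothetical incident timeline.
last_backup_time = datetime(2024, 1, 1, 9, 0)
failure_time = datetime(2024, 1, 2, 9, 0)
restored_time = datetime(2024, 1, 2, 13, 0)

loss = data_loss_window(last_backup_time, failure_time)  # 24 hours of data at risk
downtime = restored_time - failure_time                  # 4 hours offline

# Hypothetical objectives.
rpo = timedelta(hours=24)
rto = timedelta(hours=2)

rpo_met = meets_objective(loss, rpo)      # True: 24 hours lost against a 24-hour RPO
rto_met = meets_objective(downtime, rto)  # False: 4 hours offline against a 2-hour RTO
```

In this made-up incident the backup schedule is just frequent enough for the RPO, but the restoration takes twice as long as the RTO allows.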

Matching RTO/RPOs to Apps

You will likely create different RTOs and RPOs for the various applications your company uses to generate data. The more mission-critical the application, the lower (closer to zero) the RTO and RPO should be. The less critical the application, the greater your tolerances will be.

To calculate the right RTOs and RPOs for your organization, consult with business unit leaders and senior management to identify those applications and systems that drive your business and generate the most revenue; these are the most important to keep operational and should have low RTOs and RPOs. Once you’ve created this business impact analysis, you can divide your systems into tiers based on levels of criticality and institute appropriate recovery objectives for each tier.
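The tiering step can be sketched in Python as follows. Everything numeric here is an assumption made for illustration: the revenue-impact thresholds, the three tiers, their RTO/RPO values, and the application names. A real business impact analysis would weigh more factors than hourly revenue.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class RecoveryObjectives:
    rto: timedelta
    rpo: timedelta


# Hypothetical objectives per criticality tier (tier 1 is most critical).
TIER_OBJECTIVES = {
    1: RecoveryObjectives(rto=timedelta(minutes=15), rpo=timedelta(minutes=5)),
    2: RecoveryObjectives(rto=timedelta(hours=4), rpo=timedelta(hours=1)),
    3: RecoveryObjectives(rto=timedelta(hours=24), rpo=timedelta(hours=24)),
}


def assign_tier(hourly_revenue_impact: float) -> int:
    """Map the estimated revenue lost per hour of downtime to a tier.

    The thresholds are illustrative assumptions, not industry standards.
    """
    if hourly_revenue_impact >= 100_000:
        return 1
    if hourly_revenue_impact >= 10_000:
        return 2
    return 3


# Hypothetical applications with estimated revenue impact per hour offline.
applications = {
    "order-processing": 250_000.0,
    "crm": 20_000.0,
    "internal-wiki": 500.0,
}

plan = {
    name: TIER_OBJECTIVES[assign_tier(impact)]
    for name, impact in applications.items()
}
```

Keeping the objectives in one table per tier, rather than per application, means a change to a tier's targets applies to every system in that tier.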

Execution: Balancing Criticality and Cost

The more stringent the RTO and RPO, the more expensive achieving them can be. For example, if you run a full corporate data backup every day to achieve a lower RPO, you’ll consume more storage and network resources than you would if you ran it every week, inflating the expense.
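A rough back-of-the-envelope calculation shows the scale of the difference. The 10 TB dataset size is a hypothetical figure, and the sketch ignores compression, deduplication, and retention policies, all of which change the real numbers.

```python
def weekly_backup_volume_tb(dataset_tb: float, full_backups_per_week: int) -> float:
    """Data written and transferred per week by full backups alone."""
    return dataset_tb * full_backups_per_week


DATASET_TB = 10.0  # hypothetical size of the data being protected

daily_fulls = weekly_backup_volume_tb(DATASET_TB, full_backups_per_week=7)   # 70.0 TB
weekly_full = weekly_backup_volume_tb(DATASET_TB, full_backups_per_week=1)   # 10.0 TB

ratio = daily_fulls / weekly_full  # 7.0
```

Under these assumptions, moving from weekly to daily full backups cuts the worst-case data loss from seven days to one, at roughly seven times the weekly backup volume.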

To get a handle on costs, identify your desired RTO/RPO values based on your criticality tiers, then research ways to achieve them as cost-effectively as possible as part of your disaster recovery strategy.

For example:

How often should mission-critical data be backed up? Continuous data replication from primary storage to always-active secondary storage is one route to high availability. This configuration requires high-performance storage systems and substantial network bandwidth, though, so it can get expensive. Once a full data backup has been completed, consider performing incremental backups going forward. Incremental backups copy only new and modified data, which shortens backup windows and keeps costs down.
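The idea behind an incremental backup can be illustrated with a short Python sketch that selects only the files changed since the previous backup. Using file modification times is a simplifying assumption made here; the function name, file names, and timestamps are hypothetical, and production backup software typically relies on more robust change detection.

```python
import os
import tempfile
from pathlib import Path


def files_changed_since(root: Path, last_backup_epoch: float) -> list[Path]:
    """Return files under root modified after the last backup timestamp."""
    return sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.stat().st_mtime > last_backup_epoch
    )


# Demonstration on a temporary directory with two hypothetical files.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    old_file = root / "old.txt"
    new_file = root / "new.txt"
    old_file.write_text("unchanged since the last backup")
    new_file.write_text("modified after the last backup")

    last_backup = 1_700_000_000.0  # hypothetical time of the last backup (epoch seconds)
    # Set modification times one hour before and one hour after the last backup.
    os.utime(old_file, (last_backup - 3600, last_backup - 3600))
    os.utime(new_file, (last_backup + 3600, last_backup + 3600))

    # Only the file modified after the last backup is selected.
    changed = [p.name for p in files_changed_since(root, last_backup)]
```

Because only the changed file is selected, the incremental run copies a fraction of what a full backup would.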

Where will the backups reside for quick and easy access? A cloud backup location can be less expensive than building and maintaining an entire secondary IT stack using your own equipment, real estate, and power. However, data backups can also be effectively stored on-premises in another campus building or secondary data center. They can even be stored in the primary data center, in a separate room or at least a different rack for diversity. Note that backups kept at or near the primary site offer less protection than geographically diverse copies when a natural disaster affects an entire site, city, or region.

Some on-premises backup setups use virtualized storage clusters that distribute databases and file services over multiple nodes, each of which can back up multiple workloads concurrently. When more capacity is required, a node is added. The larger the cluster, the more data can be ingested in parallel and the shorter the backup window. When these systems are integrated with public cloud infrastructure services, it becomes possible to use a hybrid cloud environment for diversity and data protection.
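The relationship between cluster size and backup window can be approximated with simple arithmetic. The sketch below assumes that ingest throughput scales linearly with the number of nodes, which is an idealization, and the 48 TB dataset and 2 TB per node per hour rate are hypothetical.

```python
def backup_window_hours(data_tb: float, nodes: int, tb_per_node_per_hour: float) -> float:
    """Estimate the backup window, assuming throughput scales linearly with nodes."""
    if nodes < 1:
        raise ValueError("a cluster needs at least one node")
    return data_tb / (nodes * tb_per_node_per_hour)


# Hypothetical workload: 48 TB to back up, 2 TB per hour per node.
four_nodes = backup_window_hours(48.0, nodes=4, tb_per_node_per_hour=2.0)   # 6.0 hours
eight_nodes = backup_window_hours(48.0, nodes=8, tb_per_node_per_hour=2.0)  # 3.0 hours
```

In practice the network or the source systems can become the bottleneck first, so real gains from adding nodes may be smaller than this estimate suggests.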

In the face of a disruption, what other actions will be necessary to bring systems back online, and how much time will they take? For example, you might need to replace damaged components, reconfigure software, and perform system testing before you’re back in business. In a cloud scenario, you don’t have to worry about hardware, but using the cloud might increase the work required to reassign IP addresses and reconfigure systems. Is your disaster recovery plan to fail over to a second set of data or to recover in place? Those are questions you need to answer so that a plan can be devised and executed.
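One way to answer the timing question is to list the recovery steps with estimated durations and compare the total against the RTO. The steps, their durations, and the 4-hour RTO below are hypothetical, and the sketch assumes the steps run one after another rather than in parallel.

```python
from datetime import timedelta

# Hypothetical recovery steps with estimated durations.
recovery_steps = {
    "replace damaged components": timedelta(hours=3),
    "restore data from backup": timedelta(hours=2),
    "reconfigure software and network addresses": timedelta(hours=1),
    "system testing": timedelta(hours=1),
}


def estimated_recovery_time(steps: dict[str, timedelta]) -> timedelta:
    """Total recovery time, assuming the steps are performed sequentially."""
    return sum(steps.values(), timedelta())


rto = timedelta(hours=4)  # hypothetical objective

estimate = estimated_recovery_time(recovery_steps)  # 7 hours
shortfall = max(estimate - rto, timedelta())        # 3 hours over the objective
```

A nonzero shortfall signals that either the plan must change, for example by failing over to a standby copy instead of repairing in place, or the RTO must be relaxed.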

Differences Between RTO and RPO

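The recovery time objective (RTO) is the target for the maximum period of downtime following an IT outage, while the recovery point objective (RPO) is the maximum acceptable length of time between the last data restoration point and the failure. Put simply, RTO limits how long you are offline, and RPO limits how much recent data you lose.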