Last week, I covered the importance of quality and why we employ automated end-to-end testing. In this post, I explain how we implement this approach: a release pipeline orchestrated by Jenkins efficiently runs a large suite of end-to-end tests. These tests use our custom testing framework, which integrates with support tooling. As we receive customer feedback, we continuously update the framework and test cases to keep up with the latest requirements. Below, I describe in more detail the release pipeline, testing framework, and product support functions that keep our testing fast, efficient, and high quality.

Jenkins Continuous Integration

Like many engineering organizations, we use Jenkins as our continuous integration tool. As engineers check in new code, Jenkins continuously runs the suite of tests we built, including both unit tests and end-to-end tests. This allows us to detect and correct issues quickly.

Release Pipeline

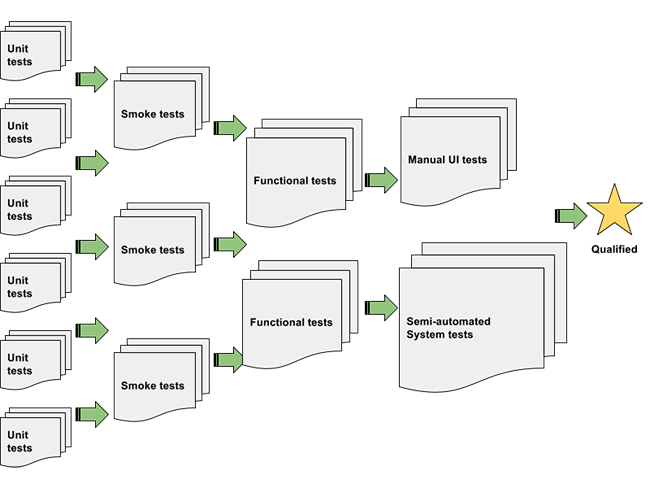

If we ran every test in full for every code check-in, we would quickly exhaust our test resources and file duplicate bugs. Adding test resources is easy, but duplicate bugs waste engineering time on extra triage and diagnosis. Instead, we define a release pipeline that executes tests in a smarter, more efficient order.

The key property of this approach is that the earlier phases of the pipeline contain more tests, each of which is faster and lighter on resources. This lets us run them in parallel and get a fast, early signal on quality. A build proceeds to a later phase only if it passes every test in the earlier one. The heavier test resources are therefore spent only on builds that are unlikely to fail in trivial ways or to produce duplicate bugs.

The manual UI and system tests at the end of the pipeline are different in kind because they require subjective evaluation of overall quality. Since only builds that passed the earlier phases reach them, the team making these judgments doesn’t waste its time on builds that fail basic tests.
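The gating rule described above can be sketched in a few lines of Python. This is only an illustration of the idea, not our Jenkins configuration: the phase names, the example test functions, and the `run_phase` and `run_pipeline` helpers are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def run_phase(tests, build):
    """Run every test of one phase in parallel; return the names of failed tests."""
    with ThreadPoolExecutor(max_workers=max(1, len(tests))) as pool:
        results = list(pool.map(lambda t: (t.__name__, t(build)), tests))
    return [name for name, passed in results if not passed]


def run_pipeline(phases, build):
    """Run phases in order and stop at the first phase with a failure.

    Returns (phases_passed, failed_phase_or_None, failed_test_names).
    """
    passed = []
    for phase_name, tests in phases:
        failures = run_phase(tests, build)
        if failures:
            # Later, heavier phases are never started for this build.
            return passed, phase_name, failures
        passed.append(phase_name)
    return passed, None, []


# Hypothetical stand-ins for real tests: each takes a build and returns True on pass.
def unit_tests(build):
    return True


def basic_acceptance(build):
    return build != "bad-build"


def upgrade_test(build):
    return True


PHASES = [
    ("fast", [unit_tests]),
    ("acceptance", [basic_acceptance]),
    ("heavy", [upgrade_test]),
]
```

Calling `run_pipeline(PHASES, "bad-build")` stops at the hypothetical `acceptance` phase, so the `heavy` phase never consumes resources for that build.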

Test Suite and Automated End-to-End Testing

As seen above, we have a large number of test cases that verify the different features and use cases of Rubrik’s product. Unit tests are the most frequent and exercise individual components. Most other tests are end-to-end tests, including the following examples:

- Basic acceptance test

  Verifies that the cluster can be configured from scratch, can protect VMs according to SLAs, and can use Rubrik features to recover data from snapshots.

- Data compaction test

  Verifies that the cluster effectively uses compression, deduplication, expiration, and consolidation to ensure efficient usage of underlying storage.

- Replication and archival tests

  Verifies that, when enabled, replication to other Rubrik clusters and archival to cloud storage occur at the configured time.

- I/O performance tests

  Verifies that I/O on the Rubrik cluster offers the expected throughput, IOPS, and I/O latency.

- Upgrade test

  Verifies that upgrading a cluster with existing data from the previous Rubrik version continues to support the existing data while also offering the new functionality of the upgraded version.
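As an illustration of what an I/O performance check involves, the sketch below derives throughput, IOPS, and mean latency from a list of completed I/O operations and compares them to expected values. It is a minimal sketch under stated assumptions: the function names, the sample format, and the threshold keys are hypothetical and are not taken from our test suite.

```python
def summarize_io(samples, wall_clock_s):
    """Summarize a run.

    samples: list of (bytes_transferred, latency_s), one entry per completed I/O.
    wall_clock_s: elapsed time of the whole run, in seconds.
    """
    total_bytes = sum(nbytes for nbytes, _ in samples)
    return {
        "throughput_bytes_per_s": total_bytes / wall_clock_s,
        "iops": len(samples) / wall_clock_s,
        "mean_latency_s": sum(lat for _, lat in samples) / len(samples),
    }


def find_regressions(summary, expected):
    """Return the names of the metrics that miss the expected values."""
    failures = []
    if summary["throughput_bytes_per_s"] < expected["min_throughput_bytes_per_s"]:
        failures.append("throughput")
    if summary["iops"] < expected["min_iops"]:
        failures.append("iops")
    if summary["mean_latency_s"] > expected["max_mean_latency_s"]:
        failures.append("latency")
    return failures
```

A test case would fail the build when `find_regressions` returns a non-empty list.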

RKtests

Above are just a few examples among the many fully automated end-to-end test cases that we have. It’s easy for us to write so many test cases because they leverage a rich collection of test utilities included in our custom RKtest framework. This framework is the heart of our test suite, so we call each of our end-to-end tests an RKtest.

Framework

The RKtest framework is our extension to standard Python test frameworks. Its extensive collection of utilities enables test cases to quickly and easily perform any of the steps involved in an end-to-end test, such as:

- Deploy Rubrik code and reset it

- Configure a Rubrik cluster consistently based on specifications in a YAML file

- Exercise Rubrik features, such as protecting VMs, instant mount, and granular file recovery

- Collect results and diagnostic data. Analyze them for errors, service crashes, performance metrics, and more
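The four steps above map naturally onto the setup, test body, and teardown hooks of Python's standard `unittest` module. The sketch below shows that shape only; it is not the RKtest API. `FakeCluster`, `EndToEndTestCase`, and the `SPEC` dictionary (standing in for a parsed YAML file) are hypothetical names invented for this example.

```python
import unittest


class FakeCluster:
    """Hypothetical in-memory stand-in for a cluster under test."""

    def __init__(self):
        self.config = None
        self.snapshots = {}
        self.errors = []

    def reset(self):
        # Return to a clean initial state.
        self.config = None
        self.snapshots.clear()
        self.errors.clear()

    def configure(self, spec):
        self.config = dict(spec)

    def protect(self, vm, sla):
        if self.config is None:
            self.errors.append("protect called before configure")
            return
        self.snapshots.setdefault(vm, []).append(f"{vm}-snap-{sla}")

    def recover(self, vm):
        return self.snapshots[vm][-1]


class EndToEndTestCase(unittest.TestCase):
    """Hypothetical base class: reset and configure first, check diagnostics last."""

    # Stands in for the contents of a YAML specification file.
    SPEC = {"cluster_name": "test-cluster", "node_count": 4}

    def setUp(self):
        self.cluster = FakeCluster()
        self.cluster.reset()               # step 1: deploy and reset
        self.cluster.configure(self.SPEC)  # step 2: configure from the spec

    def tearDown(self):
        # Step 4: collect diagnostics and fail the test if any errors were recorded.
        self.assertEqual(self.cluster.errors, [])


class BasicAcceptanceTest(EndToEndTestCase):
    def test_protect_and_recover(self):
        # Step 3: exercise product features.
        self.cluster.protect("vm-01", sla="gold")
        self.assertEqual(self.cluster.recover("vm-01"), "vm-01-snap-gold")
```

Putting the shared steps in a base class is one way a framework can keep individual test cases short: each new test only has to describe the feature it exercises.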

Product Support Functions

Many of the utilities in the RKtest framework would not be possible without a little help from the product. We have deliberately built various support functions into the product, which we use for both customer support cases and engineering-driven tests. This reuse saves us from building separate, unnecessary tools, and it ensures that our experiences during testing accurately reflect the experiences we have in the field. Having this common ground enables us to close the loop connecting customer feedback to engineering processes.

- Rubrik Reset

  The reset function in Rubrik nodes restores the node to its initial manufactured state.

- rubrik_tool

  rubrik_tool is a reference client for the Rubrik REST API. It enables the Rubrik team to test Rubrik features without relying on backdoors, therefore exercising the same functionality as customers.

- rksupport

  rksupport is a script which triggers automatic collection of a full support bundle on the Rubrik cluster, including diagnostic information.

Incorporating Customer Feedback

The test suite described above is great for catching errors in the scenarios we thought to test. However, we cannot anticipate every test case relevant to customers. Therefore, issues from support calls, rksupport, and alert monitoring are important data sources that contribute to our continuously evolving test suite. As we encounter bugs reported by customers, we either add new RKtests to cover previously uncovered cases or modify existing RKtests to better match the behavior customers expect. Most of these updates feed enhancements back into the RKtest framework, where the entire suite of RKtests can take advantage of them.

In the future, we will continue expanding our pipeline and system for automated testing. We are currently working on a dynamic test resource manager, an in-house test launcher to replace some Jenkins functionality with a customized workflow, and IT automation to provision test resources more quickly and at larger scale. For a look into how we automate, check out our GitHub open source project for VMware vRO workflows.

Did you miss the 1st part? See automated end-to-end testing part 1, here!