Data backup and data replication are commonly confused. While these processes are related, they’re not interchangeable. Here’s why.

Data backup focuses on restoring data to a specific point in time. Backups run at periodic intervals, creating “save points” of all the data on your production servers. These save points can be restored in the event of file corruption, system failure, outages, or any event that causes data loss. Data is backed up on a variety of media and locations, both on-premises and in the cloud.

Data backups can take up to several hours, so businesses typically schedule backups at night or on the weekends to reduce the impact on production systems. While there’s always a risk of losing data between backups, they still serve as a good standard of data protection and are particularly suitable for storing large sets of static data long term. Backup remains the go-to solution for many industries that must keep long-term records for compliance purposes.

Data replication, on the other hand, focuses on business continuity: delivering uninterrupted operation of mission-critical and customer-facing applications after a disaster. Replication entails making copies of data, synchronizing them, and distributing them among a company’s sites, typically servers and data centers. Transactional and other data is replicated across multiple databases. Replication helps to ensure that you can access critical data and core business applications remotely from a secondary location in the event of an outage or emergency.

Replication is either synchronous (done in real time) or asynchronous (done after a delay, often on a schedule). In synchronous replication, an entire system is replicated, and each subsequent change is written to the primary system and the replica at the same time. There’s no lag between saving a change and replicating it. If a disaster strikes, failover to the secondary replicated site is near instantaneous, resulting in little to no application downtime or data loss.

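In an asynchronous setup, replication usually occurs on a scheduled basis. Data is saved to the primary storage first, and there’s a slight delay before the data is copied to the replica. Because the primary doesn’t wait for the replica to confirm each write, this form of replication typically uses less bandwidth and adds less latency to each write than synchronous replication. The trade-off is that changes not yet copied can be lost if the primary fails.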
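The difference between the two modes can be sketched in a few lines of Python. This is a minimal in-memory illustration, not how any particular product works: the `Replica`, `SyncPrimary`, and `AsyncPrimary` classes and the `ship_pending` method are hypothetical names invented for this example.

```python
from collections import deque


class Replica:
    """In-memory stand-in for a secondary site (hypothetical)."""

    def __init__(self):
        self.data = {}

    def apply(self, key, value):
        self.data[key] = value


class SyncPrimary:
    """Finishes a write only after the replica has applied it."""

    def __init__(self, replica):
        self.data = {}
        self.replica = replica

    def write(self, key, value):
        self.data[key] = value
        self.replica.apply(key, value)  # replica is updated before write() returns


class AsyncPrimary:
    """Finishes a write immediately and ships the change later."""

    def __init__(self, replica):
        self.data = {}
        self.replica = replica
        self.pending = deque()  # changes not yet on the replica

    def write(self, key, value):
        self.data[key] = value
        self.pending.append((key, value))

    def ship_pending(self):
        """Drain queued changes to the replica, e.g. on a schedule."""
        while self.pending:
            self.replica.apply(*self.pending.popleft())


sync_replica, async_replica = Replica(), Replica()
sync_primary = SyncPrimary(sync_replica)
async_primary = AsyncPrimary(async_replica)

sync_primary.write("order-1", "paid")
async_primary.write("order-1", "paid")

sync_in_step = sync_replica.data == sync_primary.data    # replica already current
async_lagging = "order-1" not in async_replica.data      # change still queued
async_primary.ship_pending()
async_caught_up = async_replica.data == async_primary.data
```

Anything still sitting in `pending` when the primary fails is the data an asynchronous setup would lose, which is why the shipping interval matters.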

Because replicated data is updated on an ongoing basis, it doesn’t provide a historical state of a company’s business records like data backup does. Data replication can also be undermined by a malware attack because as data is replicated, so is the malware. In these instances, adequate backup is critical to recovering data at least up to the last save point.
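To make the point concrete, here is a small sketch, under simplified assumptions, of why a replica alone can’t undo corruption while a backup can. The `replicate` and `take_backup` helpers, the file name, and the backup label are all hypothetical.

```python
import copy

primary = {"ledger.csv": "clean data"}
replica = {}
backups = []  # (label, snapshot) save points, oldest first


def replicate():
    """Mirror the primary's current state onto the replica."""
    replica.clear()
    replica.update(primary)


def take_backup(label):
    """Store an independent copy of the primary as a save point."""
    backups.append((label, copy.deepcopy(primary)))


replicate()
take_backup("monday-night")

# Simulated malware corrupts the primary, and replication carries it over.
primary["ledger.csv"] = "ENCRYPTED"
replicate()
replica_is_corrupted = replica["ledger.csv"] == "ENCRYPTED"

# Recover by restoring the last save point taken before the attack.
label, snapshot = backups[-1]
primary.clear()
primary.update(copy.deepcopy(snapshot))
recovered_value = primary["ledger.csv"]
```

The replica faithfully mirrors whatever the primary holds, good or bad, whereas the save point is frozen at the moment it was taken.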

It’s not uncommon for companies to back up everything, from production servers to desktops, and use replication for applications that are critical to keeping the business up and running.

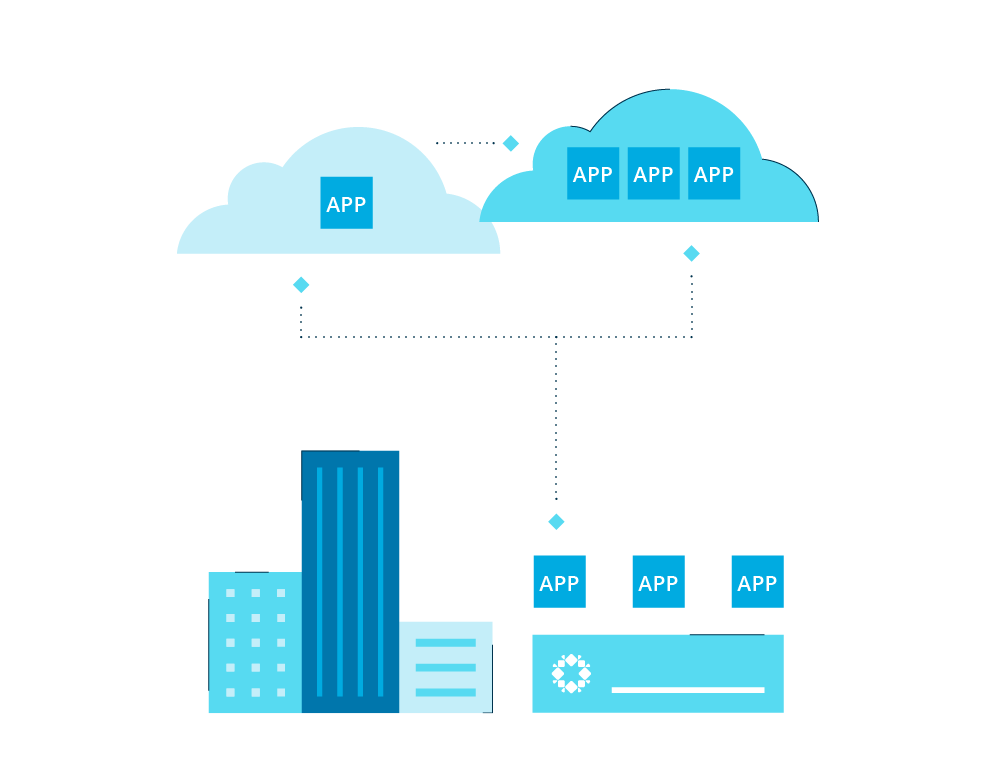

Some organizations replicate their backups. For example, after a backup is performed on-premises, that backup data is replicated (usually asynchronously) to a secondary data center or cloud provider.

Data Replication and Data Recovery

You can’t devise a data replication strategy without determining your acceptable recovery time objective (RTO) and recovery point objective (RPO). RTO refers to the maximum period of time an application can be down before the outage impacts business operations. It measures the duration from loss of service to recovery. RPO refers to the maximum amount of data you can afford to lose before business operations are impacted. It’s measured as the time between the most recent backup or replica and the moment of data loss.
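As a worked example of these two measurements, the sketch below checks a single incident against RTO and RPO targets. The timestamps, the four-hour RPO, and the two-hour RTO are made-up values for illustration, and the helper function names are hypothetical.

```python
from datetime import datetime, timedelta


def data_loss_window(last_recovery_point, failure_time):
    """Time between the last backup or replica and the failure (compare to RPO)."""
    return failure_time - last_recovery_point


def downtime(failure_time, service_restored):
    """Time from loss of service to recovery (compare to RTO)."""
    return service_restored - failure_time


# Hypothetical incident: nightly backup at 02:00, failure at 14:30,
# service back at 16:00 on the same day.
last_backup = datetime(2024, 1, 10, 2, 0)
failure = datetime(2024, 1, 10, 14, 30)
restored = datetime(2024, 1, 10, 16, 0)

rpo_target = timedelta(hours=4)  # assumed business requirement
rto_target = timedelta(hours=2)  # assumed business requirement

loss = data_loss_window(last_backup, failure)  # 12 hours 30 minutes
outage = downtime(failure, restored)           # 1 hour 30 minutes

meets_rpo = loss <= rpo_target    # False: 12.5 hours of changes are lost
meets_rto = outage <= rto_target  # True: service returned within 2 hours
```

In this scenario a nightly backup satisfies the RTO but not the RPO, which is the kind of gap that more frequent backups or replication is meant to close.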

Most industries, whether healthcare, e-commerce, or financial services, rely on 24/7 data and application availability—especially during a system outage or natural disaster. A downed enterprise resource planning (ERP) system, for instance, can bring a company’s production and distribution functions to a screeching halt. One hour of data loss at a bank with live transactions can be irreparable. To prevent critical data loss, it’s important for your disaster recovery solution to be able to replicate data in real time.

Continuous data protection can also provide additional assurance. Enabled by automation, continuous data protection maintains an ongoing journal of data changes so you can restore a system to almost any previous point in time.
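The journaling idea can be illustrated with a toy change log that is replayed up to a chosen moment. This is only a sketch: the `ChangeJournal` class is hypothetical, timestamps are plain integers, and entries are assumed to be recorded in time order.

```python
class ChangeJournal:
    """Append-only log of changes that can be replayed to any point in time."""

    def __init__(self):
        self.entries = []  # (timestamp, key, value), assumed in time order

    def record(self, timestamp, key, value):
        self.entries.append((timestamp, key, value))

    def restore_as_of(self, timestamp):
        """Rebuild state by replaying every change made at or before timestamp."""
        state = {}
        for ts, key, value in self.entries:
            if ts > timestamp:
                break  # later changes are ignored
            state[key] = value
        return state


journal = ChangeJournal()
journal.record(1, "balance", 100)
journal.record(2, "balance", 80)
journal.record(3, "balance", "corrupted")

before_corruption = journal.restore_as_of(2)  # {'balance': 80}
latest = journal.restore_as_of(3)             # {'balance': 'corrupted'}
```

Because every change is kept, the restore point can be any moment covered by the journal rather than only the time of the last scheduled backup.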

Backup, Data Replication, and DR in the Cloud

Increasingly, enterprise data is being spread across multiple locations on-premises and in the cloud, with corresponding hikes in data management complexity. Cloud-based disaster recovery (DR) can be significantly more cost effective and easier to manage than DR that relies solely on your on-premises infrastructure. You can replicate your data and applications environment to the public cloud, for example, and quickly fail over to the cloud replica in the case of a production failure.

An all-in-one data protection solution like Rubrik’s makes the cost and time savings even greater.

Help reduce daily management time by 40 to 90 percent. You can use one policy engine to create and automate backup, data replication, and archival policies with just a few clicks.

Shrink your backup hardware footprint by up to 70 percent.

Get your data back online in minutes.

Deliver automated replication for virtual and physical environments for instant recovery.

Customize RPOs and retention values to meet near-continuous data protection or long-term off-site archival needs.

Extend RPOs across your data centers and achieve near-zero RTOs.

Every business has its own needs when it comes to backup and disaster recovery. There isn’t a one-size-fits-all solution. Whichever disaster recovery solution you choose, remember that backup for data protection and long-term storage and replication for data availability and fast recovery are complementary technologies. Both should factor into your comprehensive disaster recovery planning.

Learn more about the agility and economics of replication and disaster recovery in the cloud.

Frequently asked questions

What is the difference between replication and migration?

Replication continuously copies company and user data between machines or servers for ongoing use, while migration is a one-time process that moves data to a new system and may include data transformation.

Why is data migration needed?

Data migration may be required when you change or upgrade IT systems, for example to add services. The goal is to move the data while protecting its integrity and keeping downtime to a minimum.