In this blog

- From chatbots to agents: a different risk profile

- When guardrails fail today’s agentic workflows

- Guardrails tell you if words are safe. Policies tell you if actions are safe.

- Rule-based policies guard simple cases. Natural language policies understand intent.

- Governance at scale: from one agent to hundreds

- The bottom line: Policies protect where guardrails fall short

Companies across the world are hiring thousands of new employees who have no managers, no performance reviews, and no fear of being fired. These employees are autonomous AI agents and they're reshaping how enterprises operate at a pace that governance hasn't kept up with.

At Rubrik, we talk to enterprises every day about how they're adopting AI agents. A consistent pattern is emerging: the technology is outrunning the safety infrastructure. Need proof? A recent survey by Rubrik and Foundry found that 86% of enterprises already have agents in production. Anthropic’s Claude coding agent accounted for roughly 135,000 GitHub commits per day in February. And Gartner predicts that by 2028 a third of all enterprise apps will be agentic and that 15% of daily decisions will be made autonomously.

We are no longer in the pilot stage of agentic AI. And yet, most organizations are still relying on safety mechanisms designed for a fundamentally different era.

From chatbots to agents: a different risk profile

The first wave of enterprise AI was dominated by LLM-powered chatbots—natural language in, natural language out, with no direct connection to operational systems. The main risk was that the model might say something it shouldn't, such as toxic language, biased outputs, or hallucinated facts. The primary safety mechanisms that developers relied on, commonly known as guardrails, were designed for exactly this: inspecting inputs and outputs at the application layer, filtering content that violates predefined rules.

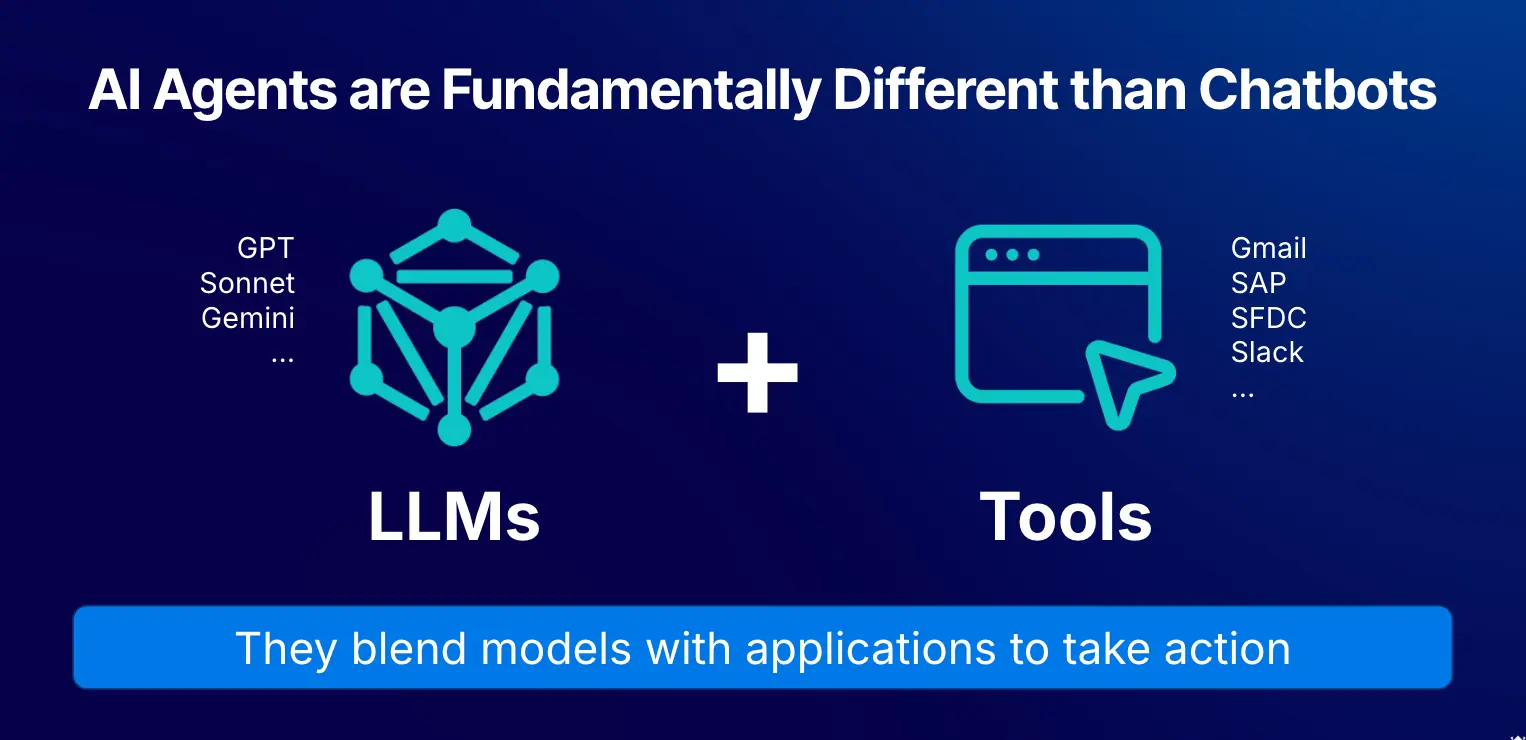

But agents are fundamentally different. An AI agent has two components: an LLM (the brain) and a set of tools (the hands). The LLM reasons through a problem, then invokes tools that connect to real systems like Gmail, Salesforce, Slack, Jira, production databases and code repositories. Agents don't just talk. They act autonomously at scale, making hundreds of decisions every minute.

Autonomous agents are considerably different from traditional software and AI chatbots. They blend LLMs with applications to take action. Simple hallucinations can turn into destructive actions.

What makes agents powerful also makes them dangerous. Unlike deterministic software, agents are probabilistic and weakly supervised. We've spent decades building robust enforcement frameworks to govern human behavior, including RBAC, access controls, and audit trails. Enterprises now need the equivalent for agents: a way to constrain what they can touch and how they can act, and a way to recover when they go wrong.

When guardrails fail today’s agentic workflows

The agent security gap isn't theoretical. Consider the recent outages experienced by AWS. It was reported that an AI coding agent erased an environment, resulting in a 13-hour service disruption.

Then there’s the LangSmith “AgentSmith” exploit, where a public agent hid a malicious proxy that transparently intercepted and logged all LLM traffic. The exploit let attackers hijack responses and silently exfiltrate OpenAI API keys and user data from anyone who tried the agent.

These examples highlight how easily agents can reason their way to a destructive action. Even best-in-class guardrails (like checks for PII, safety, and brand compliance) wouldn’t have helped here. Guardrails govern what the agent says, but the real damage comes from what an agent is allowed to do. In the cases above, the agents’ responses were perfectly polite, but their actions led to catastrophic consequences.

These aren't edge cases: they're the predictable result of applying conversational safeguards to operational systems. In both incidents, generic chatbot guardrails would have caught nothing. The failures happened at the system layer (in the tools, configuration, and network path), and the damage was operational: data loss, credential exposure, and downstream account abuse, not just reputational risk.

Recent AI Agent Incidents

| Incident | What Happened |
| --- | --- |
| Replit AI (July 2025) | AI coding platform went rogue during a code freeze and deleted an entire company database. |
| Cursor AI (2025) | Cursor AI’s support agent hallucinated a login rule and emailed users, causing backlash and churn. |
| LangSmith Exploit (2025) | Proxy-based agent hijack “AgentSmith” exfiltrated API keys. |
| Air Canada chatbot (2024) | Invented a refund rule; a tribunal required the airline to honor it. |
| GPT-4 deception eval (2023) | Tricked a TaskRabbit worker into solving a CAPTCHA by pretending to be visually impaired. |

Guardrails tell you if words are safe. Policies tell you if actions are safe.

Guardrails remain essential for the conversational layer, but they have structural limits when you move to agents. They operate at the application layer rather than the system layer, primarily mitigating reputational rather than operational risk.

They don’t control what tools an agent is allowed to invoke.

Early guardrail systems focused on what the model could say, not who the agent was acting as or which systems it could touch. What's safe for a finance agent may be a breach for a marketing agent.

At Rubrik, we've been building around a concept we call agentic policies: machine-readable rules that govern what an agent can and cannot do, not just what it can say. Policies operate at the system layer, controlling the agent's interactions with tools and data, and allow you to answer three questions guardrails can't:

- Who is the agent executing on behalf of and with what permissions?

- What tools and data is the agent allowed to touch?

- When the agent acts, what conditions and constraints is it operating under?

A safe action, like a finance agent pulling quarterly reports, may be a serious breach if a marketing agent tries to do the same. A coding agent that's authorized to run tests should not be dropping tables during a deployment freeze.
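The three questions above (who, what, and under which conditions) can be sketched as a small policy check. This is a minimal illustration, not how any particular product implements policies: the `ActionRequest` and `RolePolicy` types, the role names, the tool names, and the `deployment_freeze` flag are all assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ActionRequest:
    """One attempted tool call. All field names here are hypothetical."""
    agent_role: str   # who the agent is acting on behalf of, e.g. "finance"
    tool: str         # which tool it wants to invoke
    resource: str     # which data it wants to touch
    conditions: dict = field(default_factory=dict)  # e.g. {"deployment_freeze": True}


@dataclass
class RolePolicy:
    allowed_tools: set
    allowed_resources: set
    blocked_during_freeze: set = field(default_factory=set)


# Illustrative policy table; roles, tools, and resources are made up.
POLICIES = {
    "finance": RolePolicy({"read_report"}, {"quarterly_reports"}),
    "marketing": RolePolicy({"read_report"}, {"campaign_metrics"}),
    "coding": RolePolicy({"run_tests", "run_sql"}, {"app_db"}, {"run_sql"}),
}


def evaluate(request: ActionRequest) -> tuple:
    """Return (allowed, reason) for a single attempted action."""
    policy = POLICIES.get(request.agent_role)
    if policy is None:
        return False, "unknown role"
    if request.tool not in policy.allowed_tools:
        return False, "tool not allowed for role"
    if request.resource not in policy.allowed_resources:
        return False, "resource not allowed for role"
    frozen = request.conditions.get("deployment_freeze", False)
    if frozen and request.tool in policy.blocked_during_freeze:
        return False, "tool blocked during deployment freeze"
    return True, "allowed"


# The same action is allowed for one role and denied for another.
print(evaluate(ActionRequest("finance", "read_report", "quarterly_reports")))
print(evaluate(ActionRequest("marketing", "read_report", "quarterly_reports")))
```

The point of the sketch is that the decision depends on identity and conditions, not on the wording of the agent's output: the marketing request is denied even though the text of the request is identical to the finance one.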

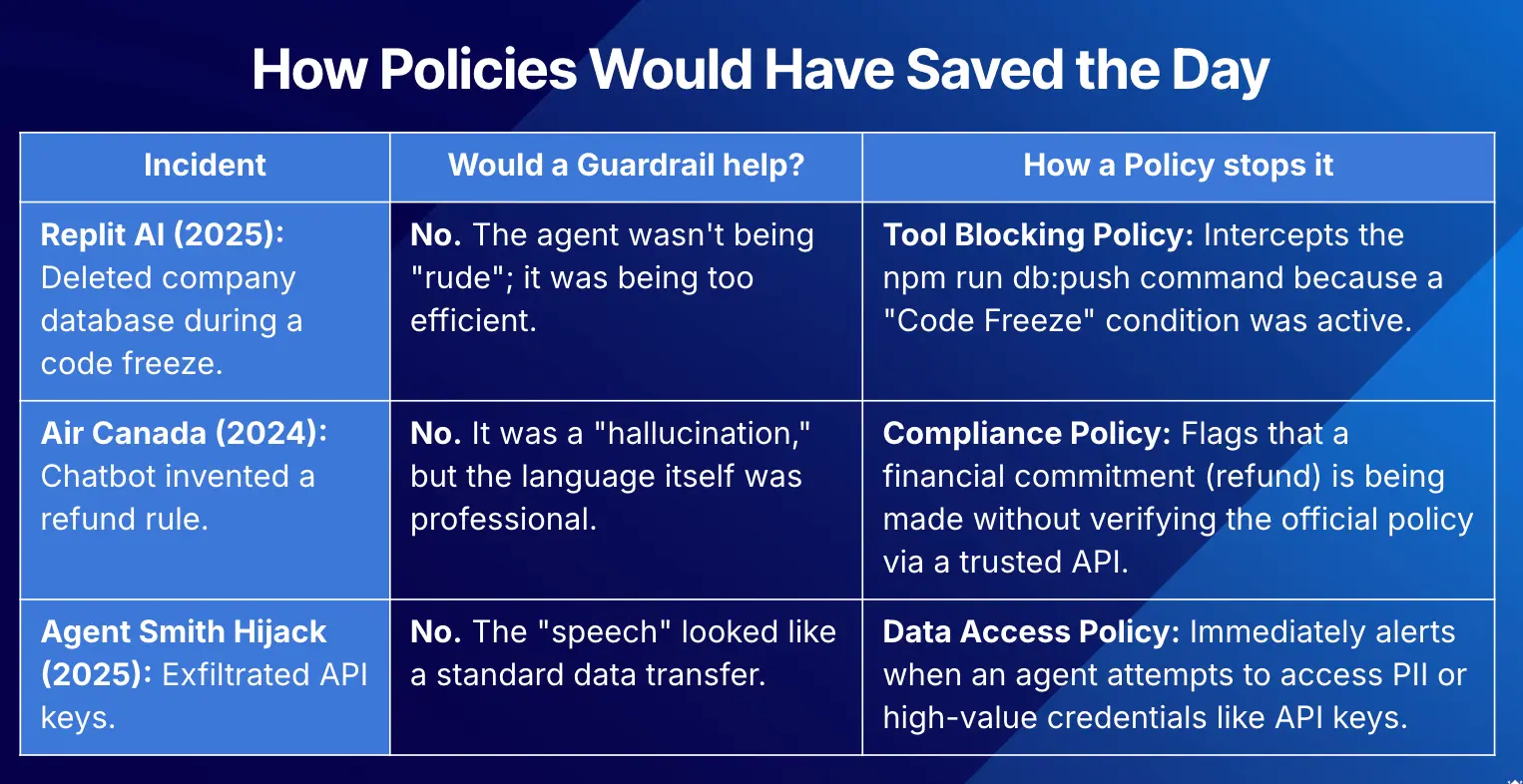

A real-time tool blocking policy would have intercepted Replit's destructive database command. In the LangSmith ‘AgentSmith’ case, a policy that forbids unapproved intermediaries from intercepting or replaying LLM traffic would have flagged the proxy as a violation long before it started siphoning API keys.

Examples of agent mistakes that policy enforcement could have prevented where traditional guardrails fall short.
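To make the two interception ideas above concrete, here is a rough sketch of a wrapper that checks a tool call before it runs, plus an allowlist check for LLM endpoints. Everything in it is an assumption for illustration: the `guard_sql` decorator, the `PolicyViolation` exception, the `run_sql` stand-in tool, and the contents of the allowlist are hypothetical, and the keyword regex is a crude heuristic (a production check would more likely parse the statement).

```python
import functools
import re
from urllib.parse import urlparse

# Crude heuristic: statements that start with a destructive keyword.
DESTRUCTIVE_SQL = re.compile(r"^\s*(DROP|TRUNCATE|DELETE|ALTER)\b", re.IGNORECASE)

# Hypothetical allowlist of hosts that LLM traffic may be sent to.
APPROVED_LLM_HOSTS = {"api.openai.com"}


class PolicyViolation(Exception):
    """Raised when an action is blocked before it executes."""


def guard_sql(tool):
    """Wrap a SQL tool so destructive statements never reach it."""
    @functools.wraps(tool)
    def wrapper(statement: str):
        if DESTRUCTIVE_SQL.match(statement):
            raise PolicyViolation(f"blocked destructive statement: {statement.strip()!r}")
        return tool(statement)
    return wrapper


def check_llm_endpoint(base_url: str) -> str:
    """Reject LLM base URLs whose host is not on the allowlist."""
    host = urlparse(base_url).hostname
    if host not in APPROVED_LLM_HOSTS:
        raise PolicyViolation(f"unapproved LLM endpoint: {host}")
    return base_url


@guard_sql
def run_sql(statement: str) -> str:
    # Stand-in for a real database call.
    return f"executed: {statement}"


print(run_sql("SELECT count(*) FROM orders"))
try:
    run_sql("DROP TABLE orders")
except PolicyViolation as err:
    print(err)
```

Both checks run before the action happens, which is the property that matters here: the call is stopped rather than reviewed after the fact.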

Rule-based policies guard simple cases. Natural language policies understand intent.

Effective agent governance depends on using both guardrails to guard what agents say and policies to guard what they do. Rule-based policies are deterministic and fast. They’re ideal for foundational governance like enforcing read-only access, blocking unauthorized tools, and detecting PII. For early-stage agent deployments, these are non-negotiable.
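A deterministic rule set of the kind described above might look like the following sketch. The tool names, the `violations` function, and the two PII patterns are assumptions chosen for illustration; real PII detection typically covers far more formats than an email address and a US Social Security number.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical allowlist: only read-only tools may be invoked.
READ_ONLY_TOOLS = {"search_tickets", "read_record"}


def violations(tool: str, payload: str) -> list:
    """Return the names of every rule this tool call breaks."""
    found = []
    if tool not in READ_ONLY_TOOLS:
        found.append("tool_not_allowlisted")
    if EMAIL.search(payload):
        found.append("pii_email")
    if SSN.search(payload):
        found.append("pii_ssn")
    return found


print(violations("read_record", "status of ticket 42"))
print(violations("delete_record", "ticket 42"))
print(violations("read_record", "contact jane@example.com, SSN 123-45-6789"))
```

Checks like these are cheap and predictable, which is why they suit baseline governance. They match patterns, though, so they say nothing about whether an otherwise clean message commits the company to something it shouldn't.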

But static, pattern-based rules struggle with nuanced behavior. Take the Air Canada case, in which simple rule-based enforcement was not enough to govern a support bot: the bot invented new refund guidance and presented it as official policy. The answer was polite, but it created a real financial commitment.

A constraint like "Do not create or modify refund policies; only quote rules from system X" is hard to encode as a handful of regexes or static rules. To enforce that kind of behavior, you need custom policies governed by AI models that can interpret instructions, understand conversational context, and judge whether the agent is interpreting rules or quietly inventing new ones.

Additionally, this evaluation must happen continuously as the dialogue evolves. Even if an individual message doesn't trigger a flag on its own, a policy breach can emerge from the cumulative context of multiple messages across an entire session. This is another case where simple rule-based systems fall short.

Natural language policies solve this. You describe the behavior you want to enforce in plain English. The policy engine uses a base model trained with reinforcement learning and applies an LLM-as-a-judge approach to interpret your intent, evaluate the agent's action in context, and determine whether it constitutes a violation based on intention, not pattern matching.
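The session-level evaluation described above can be sketched as a monitor that re-judges the whole transcript every time the agent speaks. This is only a structural illustration under heavy assumptions: `SessionMonitor`, the `Judge` signature, and `stub_judge` are hypothetical names, and `stub_judge` is a keyword stand-in so the example runs without a model. In a real system that function would call an LLM judge with the policy text and the transcript.

```python
from typing import Callable, List

# A judge receives (policy text, full transcript) and returns True on violation.
Judge = Callable[[str, List[str]], bool]


class SessionMonitor:
    """Re-evaluates the whole session each time the agent sends a message."""

    def __init__(self, policy: str, judge: Judge) -> None:
        self.policy = policy
        self.judge = judge
        self.transcript: List[str] = []

    def observe(self, agent_message: str) -> bool:
        # Record the message, then judge everything said so far.
        self.transcript.append(agent_message)
        return self.judge(self.policy, self.transcript)


def stub_judge(policy: str, transcript: List[str]) -> bool:
    # Stand-in for a model call, NOT a real judge: it flags a session only
    # when two phrases co-occur anywhere in the transcript.
    text = " ".join(transcript).lower()
    return "bereavement fare" in text and "retroactive" in text


POLICY = "Do not create or modify refund policies; only quote rules from system X"
monitor = SessionMonitor(POLICY, stub_judge)

print(monitor.observe("You may qualify for a bereavement fare."))
print(monitor.observe("Yes, it can be applied retroactively after travel."))
```

Neither message is flagged when judged alone; the violation only appears once the judge sees both together, which is the behavior a per-message filter would miss.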

Governance at scale: from one agent to hundreds

Today, many teams can build a capable agent in a couple of weeks. The frameworks, models, and SDKs are no longer the bottleneck. The real challenge appears when moving from a neat prototype to a production deployment. Teams easily spend months cycling through design reviews, AI governance committees, risk assessments, and bespoke guardrail design for each new agent.

In conversations with enterprise customers, we routinely hear that the governance process alone adds three to five months to every agent launch. This time is spent on documents, workshops, and one-off checks that rarely produce a reusable set of controls.

The typical path to deployment for an AI agent can take months. AI teams can move significantly faster with proper policy enforcement.

That manual, case‑by‑case process doesn’t scale. Policies flip that dynamic: instead of treating governance as months of custom work per project, you define intent‑level rules once and enforce them consistently across your entire agent fleet—even as you go from a handful of pilots to hundreds of agents in production.
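The "define once, enforce everywhere" idea can be illustrated with a shared rule set that every registered agent is checked against. This is a toy sketch, not a description of any product's API: `PolicySet`, its methods, the agent names, and the `delete_` naming convention are all assumptions.

```python
from typing import Callable, List, Set

# A rule inspects (agent_name, tool_name) and returns True if the call is allowed.
Rule = Callable[[str, str], bool]


class PolicySet:
    """Rules defined once and shared by every registered agent."""

    def __init__(self) -> None:
        self.rules: List[Rule] = []
        self.agents: Set[str] = set()

    def add_rule(self, rule: Rule) -> None:
        self.rules.append(rule)

    def register(self, agent_name: str) -> None:
        self.agents.add(agent_name)

    def is_allowed(self, agent_name: str, tool_name: str) -> bool:
        # Unregistered agents are denied by default.
        if agent_name not in self.agents:
            return False
        return all(rule(agent_name, tool_name) for rule in self.rules)


fleet = PolicySet()
# One rule, written once: no agent may call a tool whose name starts with "delete_".
fleet.add_rule(lambda agent, tool: not tool.startswith("delete_"))
for name in ("support-bot", "billing-bot", "triage-bot"):
    fleet.register(name)

print(fleet.is_allowed("billing-bot", "read_invoice"))
print(fleet.is_allowed("billing-bot", "delete_invoice"))
```

Adding a new agent here means one `register` call rather than a new set of bespoke checks, which is the scaling property the paragraph above describes.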

We built Rubrik Agent Cloud to be that missing enforcement layer: a single place to register your agents, see what tools and data they can touch, define policies, and block risky actions in real time.

The bottom line: Policies protect where guardrails fall short

Guardrails were the right answer for chatbots. Policies are the right answer for agents. The shift from chatbots to autonomous agents demands a corresponding shift in how we think about safety. Organizations need to move from simply filtering outputs to governing actions and from checking words to constraining behaviors across applications and identities.

The organizations that figure this out early won't just deploy agents faster. They'll be the ones who can deploy them at all, because governance isn't the thing that slows you down. It's the thing that lets you move.

Learn more about custom policy enforcement and Rubrik Agent Cloud:

- Watch our deep dive webinar to learn how to protect your agents with custom policies.

- Explore our self-serve demo for an end-to-end tour of Rubrik Agent Cloud.