Most enterprise governance systems were designed for a world where software behaves predictably. You write a rule. The system follows it. If the input matches the pattern, the rule fires. This worked for decades because traditional software is deterministic: the same input always produces the same output.

AI agents shattered that assumption. And the governance systems designed for the old world are already failing in the new one.

Static rule engines, prompt filters, and manual review processes suffer from a fundamental limitation: they can't understand intent. They can't interpret context. And they can't keep pace with agents that generate thousands of semantically unique interactions per hour.

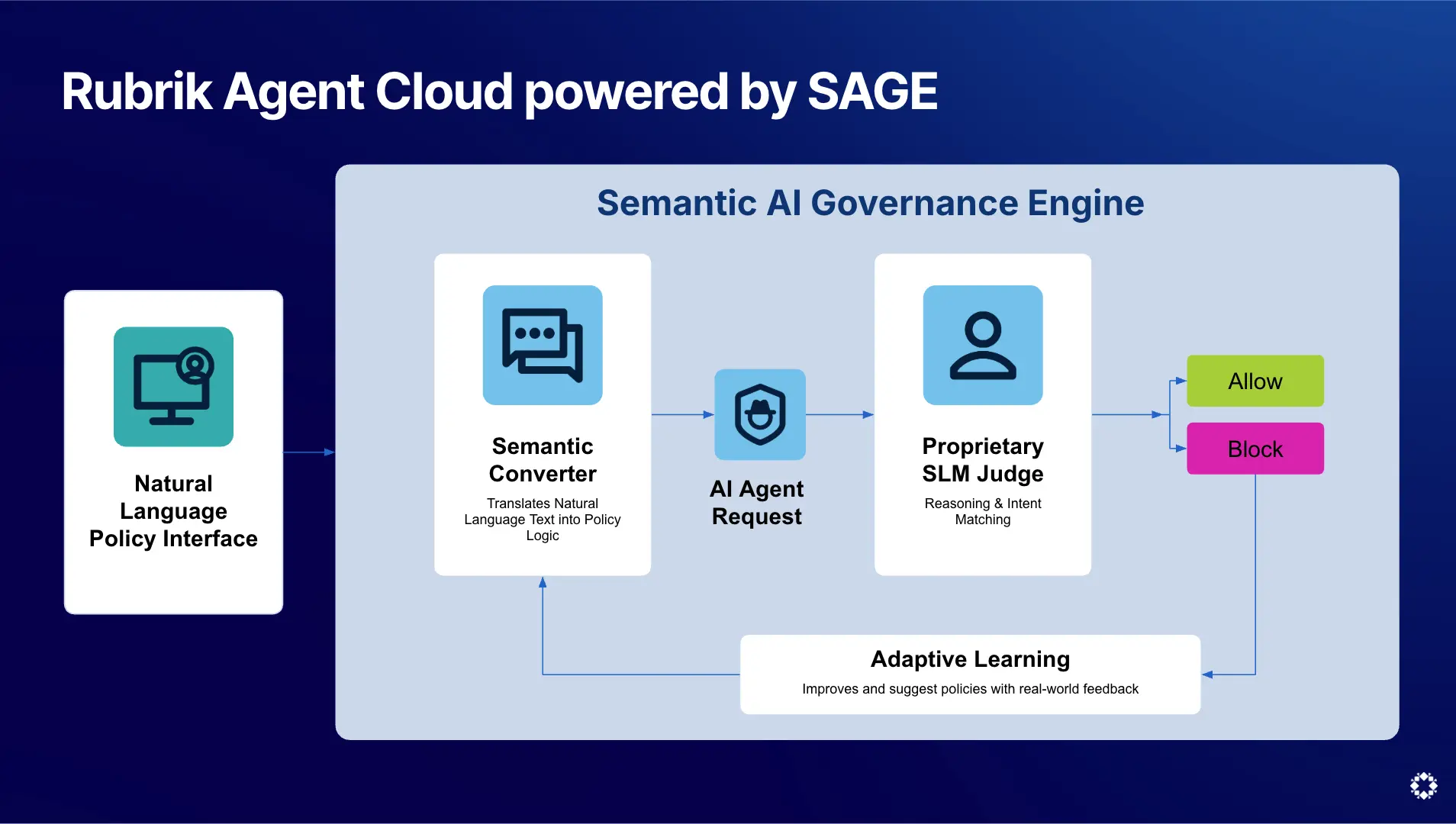

This is why Rubrik built SAGE—the Semantic AI Governance Engine. SAGE represents a fundamentally different approach to agent governance, designed specifically for the non-deterministic, language-driven reality of agentic systems.

What Semantic Governance Actually Means

Consider a straightforward enterprise policy: "Do not share financial advice with customers." In a rule-based system, you'd implement this as keyword matching: block responses containing words like "invest," "stock," "portfolio," or "financial planning." In practice, such lists tend to grow to cover broader terms like "balance" and "interest." But an agent helping a customer understand their invoice might use the word "balance." A support agent explaining payment terms might reference "interest." A perfectly legitimate response gets blocked because the rule lacks context.

Now consider the inverse. A clever prompt might get an agent to provide detailed investment guidance without ever using a flagged keyword. The agent could say "Based on current market conditions, you might want to consider allocating more toward technology sector exposure" without triggering a single keyword filter. The policy is violated, but the rule never fires.
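Both failure modes are easy to reproduce. The following is a minimal sketch of a naive keyword filter, not any real product's implementation. The keyword list reuses the four terms from the policy example and, as an assumption made for illustration, adds "balance" and "interest" to stand in for a list that has been broadened over time. The function name `keyword_filter_blocks` and the invoice sentence are hypothetical; the advice sentence is the one quoted above.

```python
# Keywords from the policy example, plus two broader terms ("balance",
# "interest") that are an assumption added here to illustrate overblocking.
FLAGGED_KEYWORDS = [
    "invest",
    "stock",
    "portfolio",
    "financial planning",
    "balance",
    "interest",
]


def keyword_filter_blocks(response: str) -> bool:
    """Return True if the response contains any flagged keyword (case-insensitive substring match)."""
    text = response.lower()
    return any(keyword in text for keyword in FLAGGED_KEYWORDS)


# A legitimate support reply (hypothetical wording) that only explains an invoice.
invoice_reply = "Your invoice shows an outstanding balance of $120."

# The investment guidance quoted in the text above; it avoids every flagged keyword.
advice_reply = (
    "Based on current market conditions, you might want to consider "
    "allocating more toward technology sector exposure"
)

print(keyword_filter_blocks(invoice_reply))  # legitimate reply is blocked
print(keyword_filter_blocks(advice_reply))   # actual advice passes through
```

The filter blocks the invoice explanation and lets the investment guidance through, which is the opposite of what the policy intends.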

SAGE doesn't match keywords. It understands meaning. When an organization defines a policy in natural language ("Do not provide financial advice") SAGE interprets the semantic intent of that policy and translates it into enforceable machine logic. It understands that a response discussing investment strategy is financial advice even if it never uses the word "financial." And it understands that explaining an invoice balance is not financial advice, even though it references money.

This distinction is critical at enterprise scale. Organizations deploying dozens or hundreds of agents across customer support, internal operations, development workflows, and data analytics can't manually tune keyword lists for every interaction pattern. The diversity of agent outputs is too vast. The edge cases are too numerous. The language is too dynamic.

How SAGE Works: The Architecture

SAGE operates on three core capabilities that work together to deliver adaptive governance.

Natural Language Policy Creation: Organizations define governance policies in plain language, not regex patterns or rule syntax. Security teams describe what they want to prevent, and SAGE interprets the intent behind those descriptions.

Semantic Policy Interpretation: SAGE translates natural language policies into enforceable logic by understanding the meaning—not just the words—of both the policy and the agent interaction being evaluated. This is powered by a proprietary Small Language Model (SLM) purpose-built for governance tasks.

Adaptive Policy Improvement: SAGE doesn't just enforce policies; it analyzes them. When a policy definition is ambiguous or incomplete, SAGE recommends improvements. For example, if an organization sets a policy of "Do not give financial advice," SAGE might recommend clarifying the scope: should this include investment recommendations, tax advice, or financial planning? This feedback loop makes policies more precise over time.
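To make the first two capabilities more concrete, here is a rough sketch of how a plain-language policy and a pluggable semantic evaluator could fit together. This is not SAGE's API: every name here (`Policy`, `Verdict`, `Judge`, `evaluate`, `stub_judge`) is hypothetical, and the stub judge is a hard-coded placeholder standing in for a model call so that the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Policy:
    name: str
    text: str  # the policy exactly as a security team would write it


@dataclass(frozen=True)
class Verdict:
    policy: str
    violated: bool
    rationale: str


# A judge takes (policy text, agent interaction) and returns (violated, rationale).
# In a real deployment this would call a language model; here it is a placeholder.
Judge = Callable[[str, str], Tuple[bool, str]]


def evaluate(policies: List[Policy], interaction: str, judge: Judge) -> List[Verdict]:
    """Check one agent interaction against every policy using the supplied judge."""
    verdicts = []
    for policy in policies:
        violated, rationale = judge(policy.text, interaction)
        verdicts.append(Verdict(policy.name, violated, rationale))
    return verdicts


def stub_judge(policy_text: str, interaction: str) -> Tuple[bool, str]:
    """Hard-coded stand-in so the sketch runs; it performs no semantic analysis."""
    if "allocating more toward" in interaction:
        return True, "recommends an investment allocation"
    return False, "no advice detected"


# The policy is stored as natural language, not as a regex or keyword list.
policies = [Policy("no-financial-advice", "Do not provide financial advice")]

for verdict in evaluate(policies, "Your invoice balance is due on the 1st.", stub_judge):
    print(verdict)
```

The point of the design is the separation: the policy stays in plain language, and all interpretation lives behind the `Judge` interface, so the evaluator can be swapped without rewriting policies.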

Why a Purpose-Built SLM Matters

Why not use a general-purpose LLM for policy enforcement? The answer comes down to latency, accuracy, and cost.

SAGE's proprietary SLM is optimized specifically for governance tasks. In benchmark testing, it achieved higher accuracy than GPT-5.2 at detecting policy violations while running roughly 5x faster. For a system that evaluates every agent interaction in real time, that speed difference separates governance that works in production from governance that creates an unacceptable bottleneck.

General-purpose models carry overhead that governance doesn't need. SAGE's SLM is lean, fast, and built for one job: understanding whether an agent interaction violates a policy's intent.

The Shift from Monitoring to Understanding

The broader industry shift happening here mirrors what happened in cybersecurity. Early firewalls were rule-based. They blocked specific ports and IP addresses. Modern security platforms use AI to understand the intent behind network traffic, user behavior, and data flows. The same evolution is now happening in agent governance.

SAGE represents that evolution for the agentic world. It's the core engine powering the governance layer of Rubrik Agent Cloud. It's the difference between governance that blocks legitimate agent behavior and governance that understands what should and shouldn't happen.

In Part 2 of this deep dive, we'll go further into SAGE's technical architecture—how the SLM evaluates interactions, how policy enforcement integrates into agent runtime, and how adaptive governance creates a continuous improvement loop. For security architects and AI engineers building agent infrastructure, that's where the real engineering detail lives.

To explore SAGE and Rubrik Agent Cloud, contact ai-team@rubrik.com.