Congratulations to “Godfather of AI” Geoffrey E. Hinton, who was just awarded the 2024 Nobel Prize in Physics! Hinton’s foundational discoveries in machine learning and artificial neural networks formed the core innovations that ultimately led to generative AI (GenAI) as we know it today.

Thanks to these breakthroughs, businesses can deploy knowledge-based copilots to enhance productivity and optimize business operations. Content creation has been supercharged and can reach micro-niche audiences through intelligent targeting. Multilingual virtual agents help users from within applications. Developers build with optimized code generated by AI.

But alongside these remarkable advancements is a growing need for data security. AI models often rely on massive datasets that may contain sensitive, personal, or proprietary information. If not secured properly, this data could be vulnerable to breaches, misuse, and ethical violations.

As good data stewards, we must ensure that these AI models consume the right data and use it in the right way. The risks of neglecting data security are becoming clearer every day, and developers and companies must take preventive measures to ensure their AI systems don’t inadvertently leak sensitive and proprietary data.

Variability is a key feature of generative AI, and data governance teams are right to be concerned about what these systems might disclose. The output is non-deterministic, but leakage of sensitive information can be better prevented if organizations avoid feeding models unintended data and restrict which information is available to augmentation approaches such as retrieval-augmented generation (RAG).
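To make the RAG point concrete, here is a minimal Python sketch of permission-aware retrieval, assuming each indexed chunk carries a copy of its source system's access groups. The `Document` type, the `retrieve` function, and the word-overlap `score` (a stand-in for vector similarity) are all hypothetical. The design choice worth noting is that access is filtered before ranking, so relevance alone can never pull restricted content into a prompt.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    """A chunk in a hypothetical RAG content store."""
    doc_id: str
    text: str
    # Groups allowed to read this chunk (assumed to mirror the source system's ACLs).
    allowed_groups: frozenset = field(default_factory=frozenset)


def score(query: str, text: str) -> int:
    """Toy relevance score: number of shared lowercase words.
    A real system would use vector similarity instead."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(query: str, docs: list, user_groups: set, k: int = 3) -> list:
    """Return up to k relevant chunks that the user is permitted to read.

    The access check happens before ranking, so restricted content
    never reaches the prompt, however relevant it is."""
    permitted = [d for d in docs if d.allowed_groups & user_groups]
    ranked = sorted(permitted, key=lambda d: score(query, d.text), reverse=True)
    return [d for d in ranked[:k] if score(query, d.text) > 0]


if __name__ == "__main__":
    docs = [
        Document("hr-1", "salary bands for 2024 engineering roles", frozenset({"hr"})),
        Document("kb-7", "how to request engineering laptop", frozenset({"all-staff"})),
    ]
    hits = retrieve("engineering salary", docs, user_groups={"all-staff"})
    print([d.doc_id for d in hits])  # ['kb-7']
```

A user outside the `hr` group only ever sees the general knowledge-base chunk, even though the HR chunk matches the query better.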

Understanding the Threat Landscape

Examples of GenAI-related data breaches and data security issues abound. According to one report by RiverSafe, one in five UK companies has had potentially sensitive corporate data exposed via employee use of generative AI. The data leak risks of unmanaged GenAI help explain why three-quarters of respondents said that insiders pose a greater risk to their organization than external threats.

Organizations like the Open Worldwide Application Security Project (OWASP) and MITRE keep track of the risks associated with generative AI. MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems), for instance, details the tactics and techniques adversaries use against AI-enabled systems. These techniques are based on real-world attack observations and realistic demonstrations from AI red teams and security groups.

One of these tactics is “Persistence,” which describes ways an adversary can maintain access to a compromised system despite a security team’s best efforts to evict them. One way to maintain persistence is to poison the datasets used by a machine learning model, injecting vulnerabilities into it that can be activated later. One such attack, called PoisonGPT, demonstrated how to modify a pre-trained LLM so that it returns false facts. As part of this demonstration, the poisoned model was uploaded to the largest publicly accessible model hub to illustrate the consequences for the LLM supply chain. As a result, users who downloaded the poisoned model were at risk of receiving and spreading misinformation.

OWASP publishes Top 10 lists for both LLM applications and machine learning to educate developers, designers, architects, managers, and organizations about the potential security risks these systems carry. The lists describe vulnerabilities such as prompt injection, data leakage, inadequate sandboxing, and unauthorized code execution, covering their potential impact, ease of exploitation, and prevalence in real-world applications. The goal is to raise awareness of these vulnerabilities, suggest remediation strategies, and ultimately improve the security posture of LLM applications.

Microsoft also called out AI data security in its recently published Digital Defense Report 2024. The report makes it clear that generative AI apps deployed on ungoverned data estates can overshare or leak sensitive data. It also found that 83% of organizations suffered multiple data breaches, putting that sensitive data at risk. According to the report: “It is difficult to protect data from AI-related security risks given that many organizations don’t actually know where—or even what—their sensitive data is.”

Regulators work to keep pace

Fortunately, these new AI-related security threats are not going unnoticed. Around the world, multiple authorities are monitoring the emergence of new threats and proposing regulatory regimes to help security teams respond.

For example, the EU AI Act, first proposed in 2021, places a strong focus on ensuring the safety, transparency, and accountability of AI systems. While data security is not the primary goal of these rules, the Act contains several relevant provisions, including risk-based classification, data governance requirements, transparency and accountability obligations, and alignment with the General Data Protection Regulation (GDPR).

In the UK, the Department for Science, Innovation and Technology commissioned Grant Thornton UK LLP and Manchester Metropolitan University to assess the cyber security risks to AI. One of the findings was that attackers can exploit weak access controls to gain unauthorized access, potentially modifying AI models, leaking sensitive data, or disrupting the development process. These and other assessments led to the Generative AI Framework for His Majesty's Government, with its emphasis on data security and data protection.

In the United States, President Biden issued a wide-reaching executive order on safe, secure, and trustworthy AI, and just a few days ago the Biden-Harris administration signed the first National Security Memorandum on AI, which outlines government guardrails for how AI should be used and protected. Federal agencies such as the National Institute of Standards and Technology (NIST) and the Cybersecurity and Infrastructure Security Agency (CISA) continue to publish frameworks for managing AI risk and roadmaps for secure AI usage, underscoring the need for diligence in securing these powerful capabilities. At the state level, proposals such as California’s Safe and Secure Innovation for Frontier Artificial Intelligence Models Act (SB 1047), which Governor Newsom vetoed in September 2024, show that US-based organizations need to watch state legislatures as well.

Clearly, a balance must be struck between the desire to innovate and the need to protect sensitive information. That need is profound, but industry can work with regulators to deploy technology that does both.

How is the industry rallying around this issue?

Individual vendors are, of course, paying close attention to these issues. For example, the Microsoft Security Response Center (MSRC) has supplemented its Security Development Lifecycle (SDL) bug bar for traditional security vulnerabilities so it can triage AI/ML-related security issues. Google has introduced the Secure AI Framework (SAIF) to address top-of-mind concerns for security professionals, including AI/ML model risk management, security, and privacy, to help create AI models that are secure by default. Gartner created AI TRiSM (AI Trust, Risk and Security Management), a framework that promotes integrating much-needed governance up front and proactively ensuring AI systems are compliant, fair, reliable, and protective of data privacy.

The LLM providers themselves also acknowledge the need for more robust data security and have started publishing policies to address some of these concerns going forward.

The role of data security

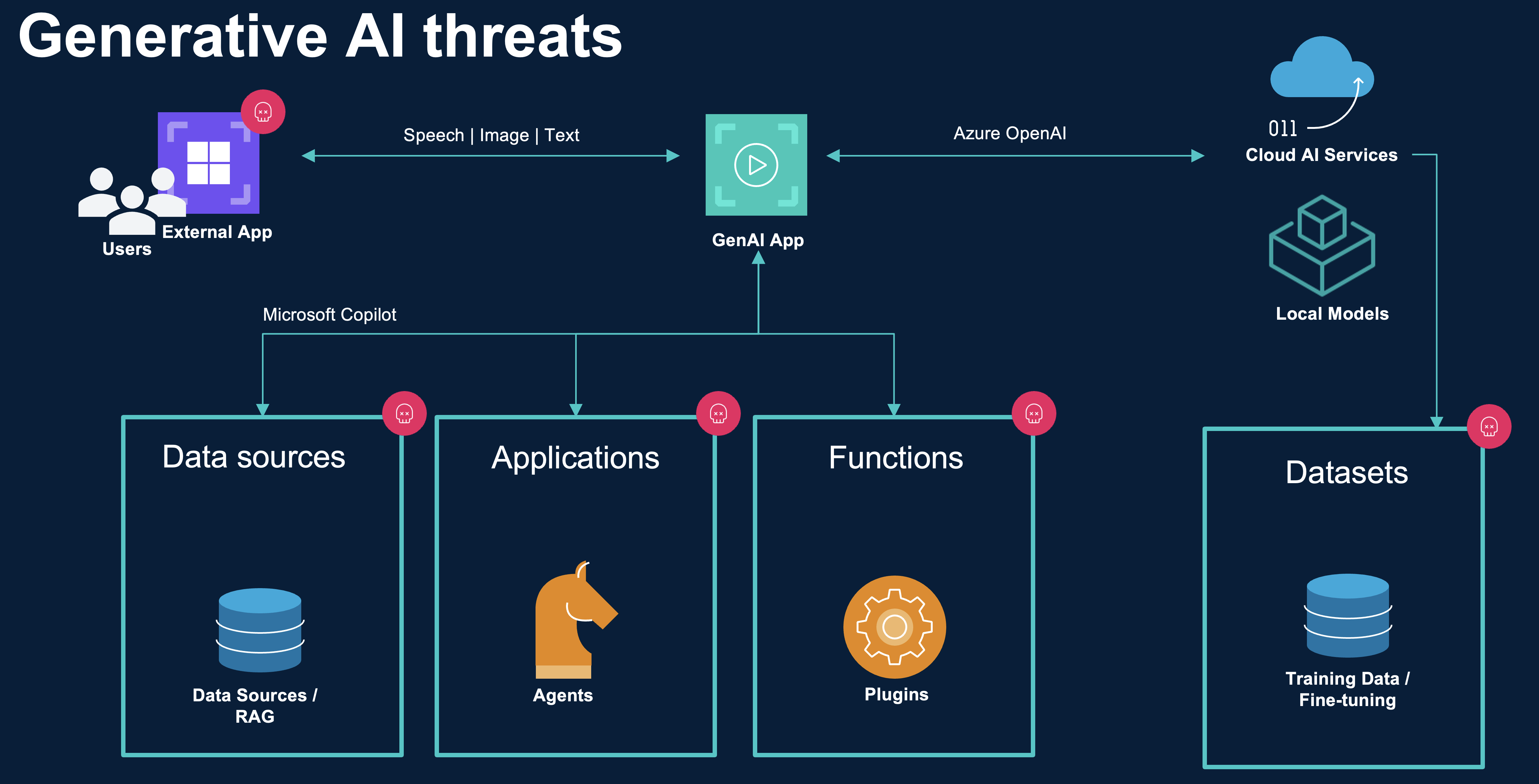

Enterprise generative AI creates many ways for weak or misconfigured data security to lead to unintended negative outcomes.

A typical enterprise GenAI architecture has several places where new security policies should be applied to avoid data leakage and other security issues, particularly where GenAI integrates data into application workflows. To safely use your own enterprise datasets for LLM training or fine-tuning, you need to ensure that only relevant data is used.

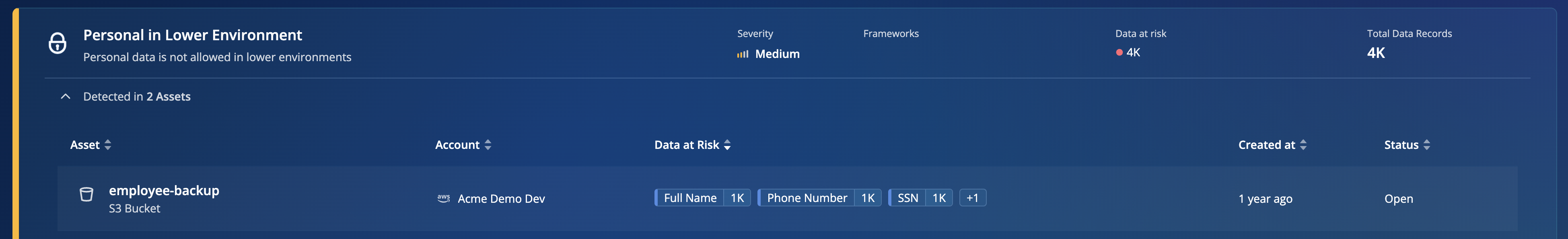

For example, you might want to exclude regulated sensitive data, or data that could lead to biased outcomes. Using Rubrik Data Security Posture Management (DSPM), we can track this data in developer environments and create policies that govern which types of data are allowed in the creation of our LLMs.
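One way to picture such a policy is as a set of blocked data classifications checked against every record before a training or fine-tuning set is assembled. The sketch below assumes records already carry classification tags from an upstream discovery scan. `Record`, `TrainingDataPolicy`, `build_training_set`, and tag names such as `PHI` are illustrative placeholders, not Rubrik DSPM's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    text: str
    # Classification tags assumed to come from an upstream data-discovery scan.
    classifications: frozenset


@dataclass(frozen=True)
class TrainingDataPolicy:
    """Hypothetical policy: any record carrying a blocked classification is excluded."""
    blocked: frozenset

    def violations(self, record: Record) -> frozenset:
        return record.classifications & self.blocked


def build_training_set(records, policy):
    """Split records into (allowed, excluded); excluded maps record_id -> reasons."""
    allowed, excluded = [], {}
    for rec in records:
        reasons = policy.violations(rec)
        if reasons:
            excluded[rec.record_id] = sorted(reasons)
        else:
            allowed.append(rec)
    return allowed, excluded


if __name__ == "__main__":
    policy = TrainingDataPolicy(blocked=frozenset({"PII", "PHI", "PROTECTED_ATTRIBUTE"}))
    records = [
        Record("r1", "ticket: printer offline", frozenset()),
        Record("r2", "patient visit notes", frozenset({"PHI"})),
        Record("r3", "applicant age and gender", frozenset({"PII", "PROTECTED_ATTRIBUTE"})),
    ]
    allowed, excluded = build_training_set(records, policy)
    print([r.record_id for r in allowed])  # ['r1']
    print(excluded)  # {'r2': ['PHI'], 'r3': ['PII', 'PROTECTED_ATTRIBUTE']}
```

Returning the reasons alongside the exclusions gives data governance teams an audit trail for why each record was kept out of the model.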

Similarly, the data used to augment GenAI results (with RAG, for example) needs to be governed as well. Rubrik DSPM can validate these content stores before they are embedded into vector databases and become part of the GenAI app’s responses.
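A pre-embedding gate can be sketched along the same lines: scan each chunk and quarantine anything that matches a sensitive-data detector before it reaches the vector database. The three regular expressions below (a US Social Security number format, email addresses, and the `AKIA` prefix of AWS access key IDs) are deliberately simple assumptions for illustration. They are not how Rubrik DSPM classifies data, and real classifiers are far more thorough.

```python
import re

# Illustrative detectors only (assumed for this sketch); production classifiers
# use validation, context, and many more data types.
DETECTORS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan(text: str) -> list:
    """Return the names of all detectors that match the text."""
    return [name for name, pattern in DETECTORS.items() if pattern.search(text)]


def gate_for_embedding(chunks: dict):
    """Split chunks into those safe to embed and those quarantined for review."""
    to_embed, quarantined = {}, {}
    for chunk_id, text in chunks.items():
        findings = scan(text)
        if findings:
            quarantined[chunk_id] = findings
        else:
            to_embed[chunk_id] = text
    return to_embed, quarantined


if __name__ == "__main__":
    chunks = {
        "faq-1": "Reset your password from the portal.",
        "ticket-9": "Customer SSN 123-45-6789, contact jane@example.com",
        "cfg-2": "key=AKIAABCDEFGHIJKLMNOP",
    }
    to_embed, quarantined = gate_for_embedding(chunks)
    print(list(to_embed))  # ['faq-1']
    print(quarantined)  # {'ticket-9': ['us_ssn', 'email'], 'cfg-2': ['aws_access_key_id']}
```

Quarantining rather than silently dropping matches leaves room for a human to relabel false positives before they are embedded.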

Once you have a working or fine-tuned model, you might also want to restrict it to sanctioned use. Rubrik DSPM can, for example, detect whether these models are attached to a cloud VM that was spun up, intentionally or accidentally, to help prevent model theft.

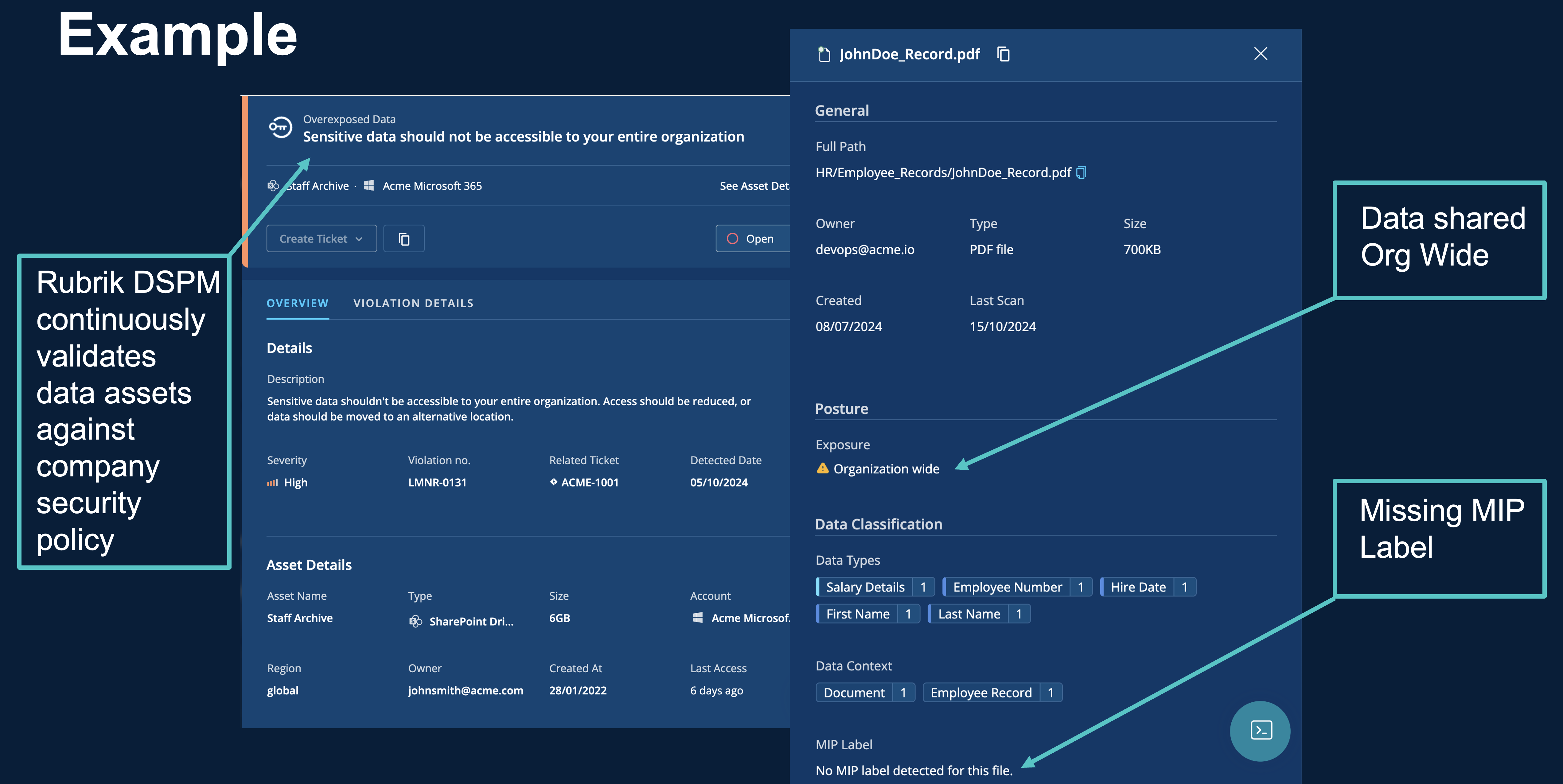

Even when using SaaS-based GenAI capabilities like those available through Microsoft 365 Copilot, data security remains a critical concern. Microsoft 365 Copilot uses existing controls to ensure that data stored in your tenant is never returned to a user, or used by a large language model (LLM), if that user doesn't have access to the data. To implement this, Microsoft relies on the sensitivity labels your organization applies to content through Microsoft Purview. As a result, missing labels or mislabeled content can have serious consequences that are hard to undo once Copilot is turned on. Additionally, overexposed data, whether it is shared too broadly or stored in organization-wide repositories like SharePoint, can affect the output of Microsoft 365 Copilot.

Rubrik DSPM can identify overexposed data before it is used through solutions like Copilot. It also detects data that is missing the sensitivity labels that would keep it out of user-specific retrieval.
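As a rough illustration of such a pre-rollout check, the sketch below flags files that are unlabeled or shared with broad audiences. It assumes a hypothetical file inventory with a `sensitivity_label` field (`None` when unlabeled) and a set of principals each file is shared with. The `BROAD_PRINCIPALS` names are assumptions, and the code does not call Microsoft Purview or Rubrik DSPM.

```python
from dataclasses import dataclass
from typing import Optional

# Audiences treated as "broad" for this sketch (assumed names, not an official list).
BROAD_PRINCIPALS = frozenset({"Everyone", "Everyone except external users", "Anyone with the link"})


@dataclass(frozen=True)
class FileRecord:
    path: str
    sensitivity_label: Optional[str]  # None means the file is unlabeled
    shared_with: frozenset


def copilot_readiness(files):
    """Return path -> findings for files that need attention before a Copilot rollout."""
    findings = {}
    for f in files:
        issues = []
        if f.sensitivity_label is None:
            issues.append("missing_label")
        if f.shared_with & BROAD_PRINCIPALS:
            issues.append("overexposed")
        if issues:
            findings[f.path] = issues
    return findings


if __name__ == "__main__":
    files = [
        FileRecord("/sites/hr/salaries.xlsx", None, frozenset({"Everyone except external users"})),
        FileRecord("/sites/eng/design.docx", "General", frozenset({"eng-team"})),
        FileRecord("/sites/legal/contract.pdf", "Confidential", frozenset({"Anyone with the link"})),
    ]
    for path, issues in copilot_readiness(files).items():
        print(path, issues)
```

Files that are both unlabeled and broadly shared, like the salary spreadsheet above, are natural candidates to remediate first.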

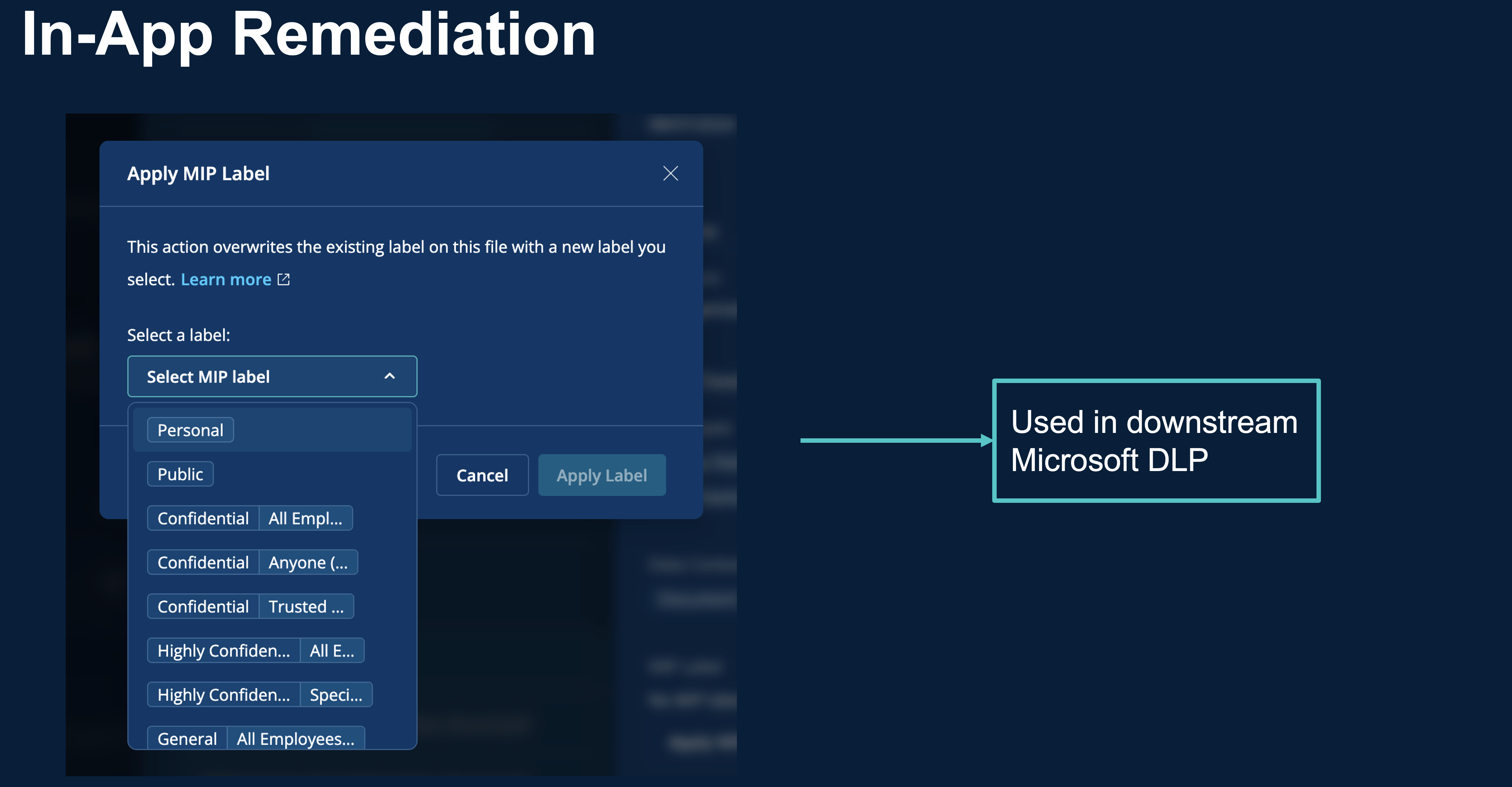

Rubrik DSPM also provides in-app remediation for missing sensitivity labels through our Microsoft Purview integration. This means data can be labeled quickly, so GenAI projects are less likely to stall over data security concerns.

What now?

The Royal Swedish Academy of Sciences has sent a clear signal with its recognition of Geoffrey E. Hinton: AI is transformative, valuable, and here to stay. Accepting that reality means facing new risks with clear eyes. By implementing robust security measures, organizations can unlock the full potential of AI.

Proper data security is not a roadblock but a springboard for generative AI in the enterprise. By prioritizing data protection, organizations not only safeguard themselves against potential threats but also pave the way for faster, more comprehensive AI adoption. As we move forward in this AI-driven era, the enterprises that master the balance between innovation and security will be best positioned to harness the transformative power of generative AI.