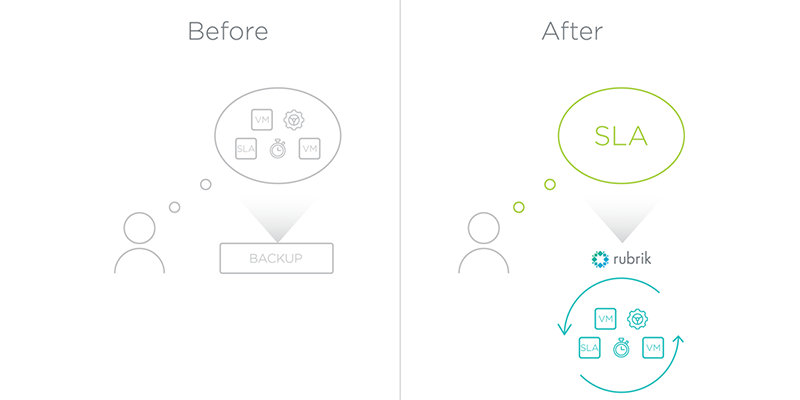

One of the topics du jour for next-generation architecture is abstraction or, more specifically, the use of policies that let technical professionals manage ever-growing sets of infrastructure with a much simpler model. I’ve talked about using policy in the past (read my first and second posts of the SLA Domain Series), but here I want to go a bit deeper into how a declarative policy engine differs from an imperative job scheduler, and why this matters for the technical community at large.

This post is fundamentally about declarative versus imperative operations. In other words:

- Declarative – Describing the desired end state for some object

- Imperative – Describing every step needed to achieve the desired end state for some object

Traditional architecture has long been ruled by the imperative operational model. We take some piece of infrastructure and then tell it exactly what it must do to meet our desired end state. With data protection, this has resulted in backup tasks / jobs. Each job requires a non-trivial amount of hand-holding to function. This includes configuration items such as:

- Which specific workloads / virtual machines must be protected

- Where to send data and how to store that data (compression, deduplication, etc.)

- When to purge backup files from the storage

- How to schedule the backups and in which order (fulls, reverse fulls, periodic fulls)

- How to connect to the storage target, be it protocols or access methods

- Catalog details for in-guest file indexing, along with application specific requirements

- Proxy node / pool selection for data ingest

- The use of ancillary methods for quiescence and the associated credentials

- And so on …

The list is often quite long, and the documentation behind it can run to hundreds of pages. The problem remains, however, that each backup job is treated like a pet. It is handcrafted and cared for, and if there are issues with the job, an administrator must triage it to determine where the failure occurred and then re-run it at a later date.
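To make that burden concrete, here is a minimal sketch of what a single imperative job definition might look like. The `BackupJob` class, its field names, and the sample values are all hypothetical; they simply mirror the configuration items listed above and do not come from any particular backup product.

```python
from dataclasses import dataclass


@dataclass
class BackupJob:
    """One hand-built backup job. Every field name here is hypothetical."""
    name: str
    virtual_machines: list[str]      # which workloads to protect
    storage_target: str              # where to send the data
    protocol: str                    # how to reach the storage target
    compression: bool                # how to store the data
    deduplication: bool
    schedule: str                    # when to run (cron-style string)
    full_backup_mode: str            # e.g. "full", "reverse full", "periodic full"
    purge_after_days: int            # when to purge backup files
    proxy_pool: str                  # which proxies handle data ingest
    quiesce_credentials: str         # account used for in-guest quiescence
    index_guest_files: bool = False  # catalog in-guest files


def settings_to_maintain(jobs: list[BackupJob]) -> int:
    """Count every individual setting an administrator has to keep correct."""
    return sum(len(vars(job)) for job in jobs)


# Two illustrative jobs; each one is configured and cared for separately.
web_job = BackupJob(
    name="web-nightly",
    virtual_machines=["web-01", "web-02"],
    storage_target="nas-01:/backups/web",
    protocol="NFS",
    compression=True,
    deduplication=True,
    schedule="0 1 * * *",
    full_backup_mode="periodic full",
    purge_after_days=30,
    proxy_pool="proxy-pool-a",
    quiesce_credentials="svc-backup",
)
db_job = BackupJob(
    name="db-hourly",
    virtual_machines=["db-01"],
    storage_target="nas-02:/backups/db",
    protocol="SMB",
    compression=True,
    deduplication=False,
    schedule="0 * * * *",
    full_backup_mode="reverse full",
    purge_after_days=14,
    proxy_pool="proxy-pool-b",
    quiesce_credentials="svc-sql-backup",
    index_guest_files=True,
)

print(settings_to_maintain([web_job, db_job]))
```

Even in this toy form, the number of settings grows with every job added, and each of those settings is a place where a job can drift or fail.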

The sticky world of imperative operational models

Using the declarative model drastically changes this paradigm. It allows technical professionals to plug their desired state for an object (in this case, the data protection policy for virtual machine workloads) into a policy engine. The policy engine is elegantly simple because all of the imperative details are abstracted away and handled by the underlying scale-out system. The resulting input fields are reduced to:

- The Recovery Point Objective (RPO) requirement

- Retention periods for the aforementioned RPOs

- Any archive targets, if desired

- Any replication targets for near-zero Recovery Time Objective (RTO) requirements, if desired

Not only are these fields simple to understand and fulfill, but they are also closely coupled to business language. While your CEO may or may not understand S3 object storage and deduplication algorithms, they are almost certainly comfortable with terms like RPO and Availability.
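As a rough illustration, the four inputs above fit in a very small data structure. The `ProtectionPolicy` class, the policy names, and the target names below are assumptions made for this sketch, not the schema of any real product.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class ProtectionPolicy:
    """Desired end state for data protection. All names are hypothetical."""
    name: str
    rpo: timedelta                             # Recovery Point Objective
    retention: timedelta                       # how long recovery points are kept
    archive_target: Optional[str] = None       # optional archive location
    replication_target: Optional[str] = None   # optional replication site


# A policy with only the two required inputs.
bronze = ProtectionPolicy(
    name="bronze",
    rpo=timedelta(days=1),
    retention=timedelta(days=30),
)

# A stricter policy that also archives and replicates.
gold = ProtectionPolicy(
    name="gold",
    rpo=timedelta(hours=4),
    retention=timedelta(days=365),
    archive_target="s3-archive",
    replication_target="dr-site",
)
```

Nothing in the policy says how, where, or in what order the work happens. It only states the outcome that must hold.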

Policy is logically assigned to individual virtual machines. Any “jobs,” per se, are completely abstracted away by the system. The declarative policy engine funnels your RPO, RTO, Availability, and Replication requirements into system-level activities. This is where the true value of the system resides: the ability to control end-to-end ingest, placement, and archive for all protected pieces of data. Just set a policy and allow the system to do all of the heavy lifting. This is how the technology industry as a whole is going to tackle the ever-increasing demand to do more with less, faster, and more efficiently.
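One way to picture how an engine might turn a policy into system-level activities is a small reconciliation function: compare the current state with the desired state and emit only the work needed to close the gap. This is a simplified sketch under my own assumptions, not how any specific product implements it. The `plan_actions` function and the action names are hypothetical, and it covers only RPO and retention.

```python
from datetime import datetime, timedelta


def plan_actions(rpo, retention, snapshots, now):
    """Derive the work needed to bring one VM in line with its policy.

    rpo, retention: timedelta values taken from the assigned policy.
    snapshots: datetimes of the recovery points that already exist.
    Returns a list of (action, timestamp) tuples; the action names are
    hypothetical labels used only in this sketch.
    """
    actions = []

    # RPO check: the newest recovery point must be younger than the RPO.
    newest = max(snapshots, default=None)
    if newest is None or now - newest >= rpo:
        actions.append(("take_snapshot", now))

    # Retention check: anything older than the retention period expires.
    for taken in sorted(snapshots):
        if now - taken > retention:
            actions.append(("expire_snapshot", taken))

    return actions


now = datetime(2024, 1, 31, 12, 0)
existing = [datetime(2024, 1, 1, 0, 0), datetime(2024, 1, 31, 6, 0)]

for action in plan_actions(timedelta(hours=4), timedelta(days=30), existing, now):
    print(action)
```

The caller never schedules anything. If the state already satisfies the policy, the function returns an empty list, which is what makes this style safe to run over and over across a large fleet.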