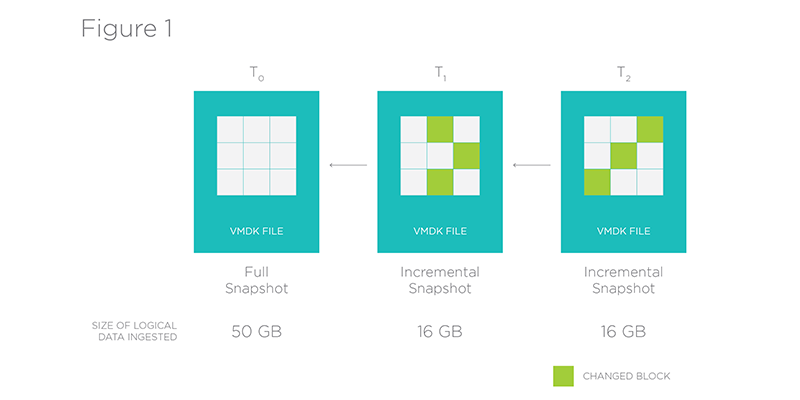

Rubrik is a time machine for virtualized infrastructure. It periodically takes snapshots of an enterprise’s virtual machines (VMs), allowing instant recovery to a previous point in time. To store the entire history of a VM efficiently, it represents each snapshot as a delta that contains only the data that has changed since the previous snapshot.
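To make the delta idea concrete, here is a minimal Python sketch of computing a block-level delta between two snapshots. It assumes the disk is divided into fixed-size blocks and that the disk size does not change between snapshots. `BLOCK_SIZE`, `split_blocks`, and `compute_delta` are hypothetical names chosen for illustration, not Rubrik APIs, and the sketch compares full images only for clarity.

```python
from typing import Dict

# Hypothetical block size, kept tiny so the example is easy to read.
BLOCK_SIZE = 4

Blocks = Dict[int, bytes]  # block index -> block contents


def split_blocks(image: bytes, block_size: int = BLOCK_SIZE) -> Blocks:
    """Split a disk image into fixed-size blocks keyed by block index."""
    return {
        offset // block_size: image[offset:offset + block_size]
        for offset in range(0, len(image), block_size)
    }


def compute_delta(prev: bytes, curr: bytes, block_size: int = BLOCK_SIZE) -> Blocks:
    """Return only the blocks of `curr` that differ from the same block in `prev`.

    Assumes both images have the same size (no disk resize between snapshots).
    """
    prev_blocks = split_blocks(prev, block_size)
    curr_blocks = split_blocks(curr, block_size)
    return {
        idx: data
        for idx, data in curr_blocks.items()
        if prev_blocks.get(idx) != data
    }


# Only block 1 changed between the two snapshots, so only block 1 is stored.
delta = compute_delta(b"AAAABBBBCCCC", b"AAAAXXXXCCCC")
print(delta)  # {1: b'XXXX'}
```

The delta grows with the amount of changed data, not with the size of the VM, which is what makes keeping a long snapshot history affordable.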

Atlas is Rubrik’s Cloud-Scale File System, the foundation of its storage layer. It was designed specifically to support the time machine paradigm. Conceptually, Atlas stores a set of versioned files: each VM is a file, and each snapshot is a version of that file. Internally, Atlas stores each snapshot as its own file: the first is a full copy of the VM, and each subsequent one is an incremental delta from the previous one.
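The versioned-file model can be sketched as a full copy followed by a chain of deltas, where restoring a point in time means replaying the chain up to the requested version. This is a minimal illustration, not Atlas’s actual on-disk format; `VersionedFile`, `add_snapshot`, and `restore` are hypothetical names, and blocks are held in memory as a dictionary from block index to contents.

```python
from typing import Dict, List

Blocks = Dict[int, bytes]  # block index -> block contents


class VersionedFile:
    """Hypothetical model of one versioned file (one VM).

    Version 0 is stored as a full copy; each later version is stored only as a
    delta of changed blocks relative to the version before it.
    """

    def __init__(self, full_copy: Blocks) -> None:
        self.full_copy: Blocks = dict(full_copy)
        self.deltas: List[Blocks] = []

    def add_snapshot(self, delta: Blocks) -> int:
        """Append an incremental delta and return the new version number."""
        self.deltas.append(dict(delta))
        return len(self.deltas)

    def restore(self, version: int) -> bytes:
        """Rebuild a point-in-time image by replaying deltas 1..version over the full copy."""
        if not 0 <= version <= len(self.deltas):
            raise IndexError(f"no such version: {version}")
        blocks = dict(self.full_copy)
        for delta in self.deltas[:version]:
            blocks.update(delta)  # a newer write to a block replaces the older one
        return b"".join(blocks[idx] for idx in sorted(blocks))


vm = VersionedFile({0: b"AAAA", 1: b"BBBB", 2: b"CCCC"})
vm.add_snapshot({1: b"XXXX"})  # version 1
vm.add_snapshot({2: b"YYYY"})  # version 2
print(vm.restore(1))  # b'AAAAXXXXCCCC'
```

Restoring version *n* reads the full copy plus every delta up to *n*, which is one reason it helps to keep an entire chain on the same nodes.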

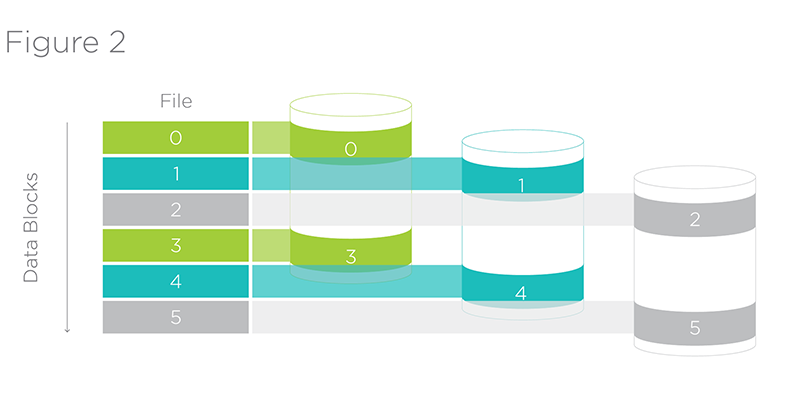

One of the reasons we chose to create Atlas was to have fine-grained control over replica placement so that related data could be co-located. Almost all operations in our system involve operating on the content of a VM. To make these operations efficient, Atlas places entire file replicas on specific nodes, rather than spreading the data blocks backing a file randomly throughout the system. Put another way, for a file with three replicas, each of three nodes in our system contains the entirety of the data representing that file. This allows us to run data management jobs for a file on any of these three nodes with the data completely local.
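The difference between whole-file replicas and per-block scattering can be illustrated with a small simulation. The node-selection rule here is rendezvous (highest-random-weight) hashing, a stand-in chosen for the example rather than Atlas’s actual placement algorithm; all function names are hypothetical.

```python
import hashlib
from typing import Dict, List, Set

Placement = Dict[str, Set[int]]  # node name -> indices of the file's blocks stored there


def _score(node: str, key: str) -> int:
    """Pseudo-random weight of `node` for `key` (rendezvous hashing)."""
    digest = hashlib.sha256(f"{node}/{key}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def pick_nodes(key: str, nodes: List[str], replicas: int = 3) -> List[str]:
    """Deterministically choose `replicas` distinct nodes for `key`."""
    return sorted(nodes, key=lambda n: _score(n, key), reverse=True)[:replicas]


def place_whole_file(file_id: str, num_blocks: int, nodes: List[str], replicas: int = 3) -> Placement:
    """Whole-file replicas: every chosen node stores every block of the file."""
    placement: Placement = {n: set() for n in nodes}
    for node in pick_nodes(file_id, nodes, replicas):
        placement[node] = set(range(num_blocks))
    return placement


def place_per_block(file_id: str, num_blocks: int, nodes: List[str], replicas: int = 3) -> Placement:
    """Per-block placement: each block picks its own nodes, scattering the file."""
    placement: Placement = {n: set() for n in nodes}
    for block in range(num_blocks):
        for node in pick_nodes(f"{file_id}#{block}", nodes, replicas):
            placement[node].add(block)
    return placement


def nodes_with_full_copy(placement: Placement, num_blocks: int) -> List[str]:
    """Nodes where a job over the whole file could run with all of its data local."""
    return sorted(n for n, blocks in placement.items() if len(blocks) == num_blocks)


nodes = [f"node{i}" for i in range(8)]
whole = place_whole_file("vm-42", 100, nodes)
spread = place_per_block("vm-42", 100, nodes)
print(len(nodes_with_full_copy(whole, 100)))  # 3
print(len(nodes_with_full_copy(spread, 100)))
```

Both layouts store 300 block replicas in total, but with per-block placement across eight nodes it is very unlikely that any single node ends up holding all 100 blocks, so a job over the whole file would have to fetch data over the network.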

Atlas also groups logically related files when it comes to placement. For example, the snapshots in the chain illustrated in Figure 1 are placed together, so for any VM there is a set of nodes that contains the entire history of that VM locally. As a second-order optimization, Atlas co-locates replicas of VMs discovered to be similar, allowing cross-VM deduplication while keeping all data local. Because the replica placement logic is under our control, the replication and placement policy can be changed dynamically.
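Group-aware placement can be sketched by choosing nodes from a group key (the VM, or a group of similar VMs) instead of from each individual file, so every snapshot in a chain resolves to the same nodes. This is a hypothetical illustration: `GroupPlacementPolicy`, `SnapshotFile`, and `mark_similar` are invented names, similarity detection is assumed to happen elsewhere, and rendezvous hashing again stands in for whatever rule Atlas actually uses.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List


def pick_nodes(key: str, nodes: List[str], replicas: int) -> List[str]:
    """Rendezvous hashing: rank nodes by a per-key hash and keep the top `replicas`."""
    def score(node: str) -> int:
        return int.from_bytes(hashlib.sha256(f"{node}/{key}".encode()).digest()[:8], "big")
    return sorted(nodes, key=score, reverse=True)[:replicas]


@dataclass(frozen=True)
class SnapshotFile:
    vm_id: str    # the VM this snapshot belongs to
    version: int  # position in the VM's snapshot chain


class GroupPlacementPolicy:
    """Hypothetical group-aware policy: place by group key, not by individual file."""

    def __init__(self, nodes: List[str], replicas: int = 3) -> None:
        self.nodes = list(nodes)
        self.replicas = replicas  # can be changed at runtime
        self._group: Dict[str, str] = {}  # vm_id -> similarity group id

    def group_of(self, vm_id: str) -> str:
        # By default each VM is its own group, so its whole chain lands together.
        return self._group.get(vm_id, vm_id)

    def mark_similar(self, vm_id: str, similar_to: str) -> None:
        # Simplification: reassigns only `vm_id`; VMs already grouped under it are not moved.
        self._group[vm_id] = self.group_of(similar_to)

    def place(self, f: SnapshotFile) -> List[str]:
        # The snapshot version is deliberately ignored: the group key decides placement.
        return pick_nodes(self.group_of(f.vm_id), self.nodes, self.replicas)


policy = GroupPlacementPolicy([f"node{i}" for i in range(8)])
chain = [SnapshotFile("vm-a", v) for v in range(5)]
home = policy.place(chain[0])
print(all(policy.place(f) == home for f in chain))  # True: whole history on the same nodes

policy.mark_similar("vm-b", similar_to="vm-a")
print(policy.place(SnapshotFile("vm-b", 0)) == home)  # True: similar VM co-located
```

Changing `policy.replicas` or the group mapping changes where files are placed from then on; migrating existing replicas to match is not shown. With rendezvous hashing, lowering the replica count keeps a prefix of the previous node list, so shrinking the policy does not reshuffle the remaining replicas.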

In summary, controlling replica placement at the node, disk, and media level improves efficiency and performance for our specialized use case. It also gives us the flexibility to adapt to new use cases and unanticipated workloads, which would not be possible with an off-the-shelf solution.