At Rubrik, one of the “good problems” we have is massive hyper-growth across teams, which means we have to find easy, scalable ways to communicate with each other. This was put to the test with the launch of our Rubrik Polaris SaaS platform earlier this year, which led to the Sales Engineer team receiving multiple “Can I get access to the Rubrik Polaris demo account?” requests every week.

To create a new account, I would log into Rubrik Polaris and select the Invite Users link. A welcome email with all relevant login information would then be sent to the team member requesting access.

Even though the invite process was just a few clicks, as someone focused on all things DevOps and automation at Rubrik, a small part of me would shudder every time I had to manually repeat the invite process. That was until Chris Wahl decided to throw down the gauntlet.

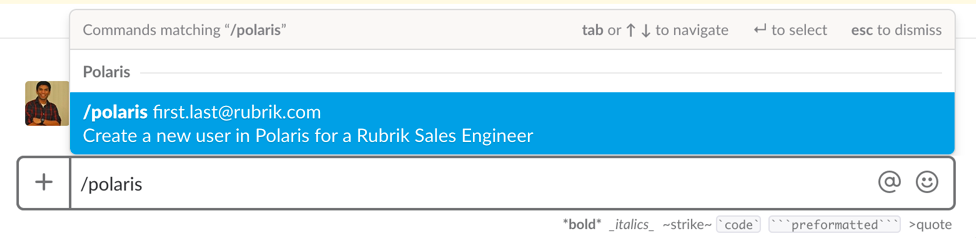

As a self-confessed Slack (our communication tool of choice) addict, I was immediately intrigued and got to work. I soon had a proof-of-concept Python script up and running that automated the new user invite process. But now what? How could I turn that basic script into a self-service utility that could be consumed across the entire Rubrik organization?

The first major architectural decision was where to run the code. I could deploy a server in vCenter or a new EC2 instance in AWS, but both options seemed like overkill for roughly 150 lines of code and a waste of resources. The script would also run only occasionally, so an EC2 instance sitting idle in AWS the vast majority of the time wouldn’t be cost-effective either. On top of all that, I would need to maintain those environments, which frankly I didn’t want to do.

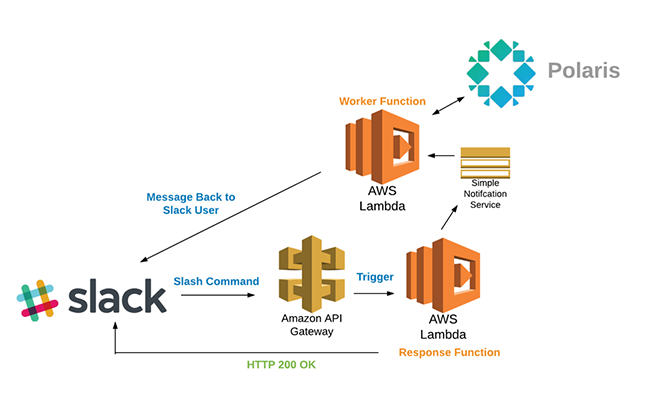

The clear choice was AWS’s serverless compute service, Lambda. After the initial setup, Lambda would be hands-off and would run only when needed. Plus, the Lambda free tier includes 1 million free requests and 400,000 GB-seconds of compute time per month, which made it the most cost-effective option as well. After working through a few caveats, which I’ll review next, this is the final architecture that was deployed.
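To put those free-tier numbers in perspective, here is a back-of-the-envelope calculation. It is a sketch under stated assumptions, not a billing tool: each /polaris command runs two invocations (the two-function design described below), both functions are configured with a hypothetical 128 MB of memory, and durations are roughly the ~600 ms and ~3,000 ms figures quoted in this post. Billing-granularity rounding is ignored.

```python
import math

# Monthly Lambda free-tier allowances quoted in the post.
FREE_REQUESTS = 1_000_000
FREE_GB_SECONDS = 400_000


def gb_seconds(memory_mb: int, duration_ms: float) -> float:
    """Compute consumed by one invocation: memory in GB x duration in seconds."""
    return (memory_mb / 1024) * (duration_ms / 1000)


def free_commands_per_month(memory_mb: int, durations_ms: list) -> int:
    """How many slash commands the free tier covers when each command runs
    one invocation per entry in durations_ms (e.g. Response + Worker)."""
    by_requests = FREE_REQUESTS // len(durations_ms)
    per_command = sum(gb_seconds(memory_mb, d) for d in durations_ms)
    by_compute = math.floor(FREE_GB_SECONDS / per_command)
    # Whichever allowance runs out first is the real ceiling.
    return min(by_requests, by_compute)


if __name__ == "__main__":
    # Assumed: 128 MB per function, ~600 ms Response + ~3,000 ms Worker.
    print(free_commands_per_month(128, [600, 3000]))  # prints 500000
```

Under those assumptions the request allowance runs out first, at 500,000 commands per month; at 1,024 MB per function the compute allowance becomes the ceiling instead, at 111,111 commands. Either way, a handful of invite requests a week barely registers.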

Once a user invokes the /polaris slash command, Slack sends the message (in this case, the email address used to create the new Rubrik Polaris account) to Amazon API Gateway via an HTTP POST.

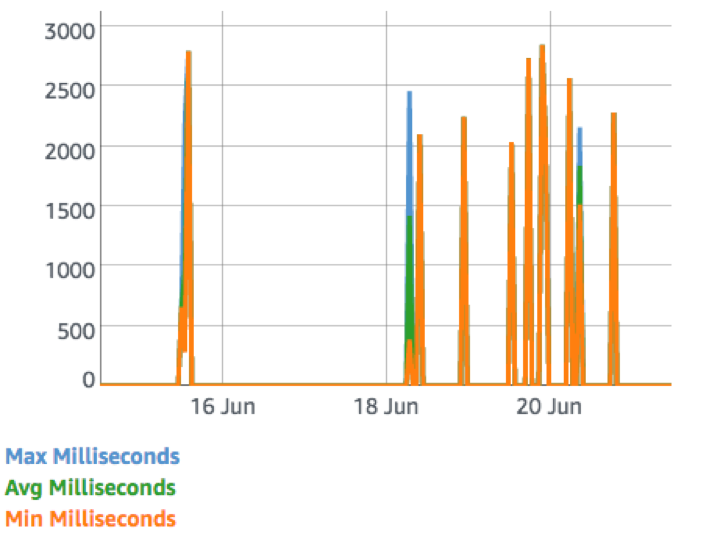

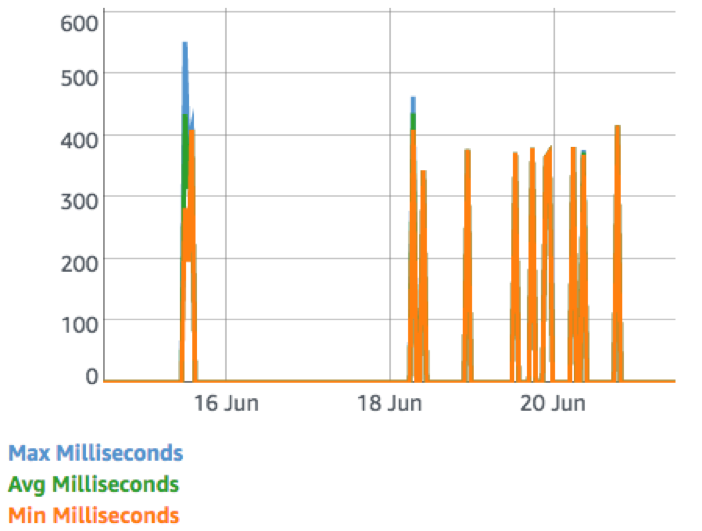
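Slack delivers slash-command data as an `application/x-www-form-urlencoded` POST body with fields such as `command`, `text`, `response_url`, and `user_id`. The sketch below assumes API Gateway’s Lambda proxy integration, which hands the raw body to the function as `event["body"]` (sometimes base64-encoded). The `parse_slash_command` helper, its returned keys, and the loose email check are illustrative assumptions rather than the published code.

```python
import base64
import re
from urllib.parse import parse_qs

# Deliberately loose check; Polaris itself is the final judge of the address.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def parse_slash_command(event: dict) -> dict:
    """Pull the fields this bot needs out of an API Gateway proxy event
    carrying a Slack slash-command POST (hypothetical helper)."""
    body = event.get("body") or ""
    if event.get("isBase64Encoded"):
        body = base64.b64decode(body).decode("utf-8")
    # Slack sends application/x-www-form-urlencoded; parse_qs returns lists.
    fields = {key: values[0] for key, values in parse_qs(body).items()}
    email = fields.get("text", "").strip()
    return {
        "command": fields.get("command", ""),
        "email": email,
        "email_ok": bool(EMAIL_RE.match(email)),
        "response_url": fields.get("response_url", ""),
        "user_id": fields.get("user_id", ""),
    }
```

If `email_ok` is false, the function can reply with a usage hint right away instead of doing any further work.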

Slack then expects a response within 3,000 milliseconds or else it will return a timeout error. As you can see from the graph below, we are right on the edge of that time limit when executing the code that connects to Rubrik Polaris.

When you also factor in the time required to process and validate the initial Slack message, as well as any Lambda cold-start time, you could easily exceed the 3,000-millisecond limit. To avoid timeout errors, I split the Lambda function into two separate functions. The main purpose of the first, the Response Function, is to send an HTTP 200 OK response to Slack as quickly as possible; it ends up taking less than 600 milliseconds, well within Slack’s limit.
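The post doesn’t say exactly how the Response Function validates the incoming message. One common approach is Slack’s request-signing scheme: Slack sends `X-Slack-Request-Timestamp` and `X-Slack-Signature` headers, where the signature is `v0=` followed by the hex HMAC-SHA256 of `v0:{timestamp}:{raw body}`, keyed with the app’s signing secret. Below is a minimal sketch, assuming the signing secret is available to the function (for example, through an environment variable) and that `body` is the raw, undecoded request body. The original functions may well have used Slack’s older verification token instead. The check uses only the standard library, so it costs very little of the 3,000 ms budget.

```python
import hashlib
import hmac
import time


def verify_slack_request(headers, body, signing_secret, now=None, max_age=300):
    """Return True if the request carries a valid, fresh Slack signature.

    headers: request headers (any capitalization); body: raw request body string.
    """
    h = {k.lower(): v for k, v in (headers or {}).items()}
    timestamp = h.get("x-slack-request-timestamp", "")
    signature = h.get("x-slack-signature", "")
    if not timestamp.isdigit() or not signature:
        return False
    now = time.time() if now is None else now
    if abs(now - int(timestamp)) > max_age:
        return False  # too old (or too far in the future): treat as a replay
    base = f"v0:{timestamp}:{body}".encode("utf-8")
    digest = hmac.new(signing_secret.encode("utf-8"), base, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest("v0=" + digest, signature)
```

When this returns False, the Response Function can reject the request (for example, with HTTP 401) before anything else happens.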

After responding to Slack, the Response Function uses Amazon Simple Notification Service (SNS) to trigger the Worker Function and pass it all relevant variables. The Worker Function does all of the heavy lifting: it connects to Rubrik Polaris to create the new account. Once it receives a response from Rubrik Polaris, it sends a message back to the user on Slack with either a success message or a human-readable error.
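The following is a minimal sketch of the Response → SNS → Worker handoff. It is not the open-sourced implementation. `TOPIC_ARN`, `invite_polaris_user`, and the message wording are hypothetical, and the Polaris call in particular is only a placeholder. The cores (`respond`, `work`) take their side effects as arguments so they can be exercised without AWS. The thin `*_handler` wrappers show one way to wire them to boto3’s `sns.publish` and to Slack’s `response_url`. One detail: with API Gateway’s proxy integration the handler’s return value *is* the HTTP response, so in practice the SNS publish happens just before the 200 is returned, and SNS then invokes the Worker asynchronously.

```python
import json
import os
import urllib.request
from urllib.parse import parse_qs


def respond(event, publish):
    """Response Function core: queue the work via SNS, then answer Slack fast."""
    fields = {k: v[0] for k, v in parse_qs(event.get("body") or "").items()}
    payload = {
        "email": fields.get("text", "").strip(),
        "response_url": fields.get("response_url", ""),
    }
    publish(json.dumps(payload))  # SNS delivers this string to the Worker
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({
            "response_type": "ephemeral",  # visible only to the requester
            "text": f"Working on a Polaris invite for {payload['email']}...",
        }),
    }


def work(event, invite_user, post):
    """Worker Function core: unpack each SNS record, do the slow call, report back."""
    for record in event["Records"]:
        payload = json.loads(record["Sns"]["Message"])
        email = payload["email"]
        try:
            invite_user(email)
            text = f"Invite sent to {email}. Check your inbox for the welcome email."
        except Exception as exc:  # report a readable error instead of failing silently
            text = f"Sorry, I couldn't invite {email}: {exc}"
        post(payload["response_url"], {"response_type": "ephemeral", "text": text})


def post_to_slack(url, message):
    """POST a JSON message to Slack's response_url."""
    req = urllib.request.Request(
        url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        resp.read()


def invite_polaris_user(email):
    """Hypothetical placeholder for the real Rubrik Polaris invite call."""
    raise NotImplementedError("wire up the Polaris API here")


# Lambda entry points (hypothetical wiring; TOPIC_ARN is an assumed env var).
def response_handler(event, context):
    import boto3  # imported lazily so the cores above run without AWS libraries
    sns = boto3.client("sns")
    topic = os.environ["TOPIC_ARN"]
    return respond(event, lambda msg: sns.publish(TopicArn=topic, Message=msg))


def worker_handler(event, context):
    work(event, invite_polaris_user, post_to_slack)
```

Keeping the Slack-facing function this thin is what keeps it comfortably under the 3,000 ms limit; everything slow lives behind SNS.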

Thanks to the multitude of services offered by AWS, it’s extremely easy and cost-effective to create a new Slack command that automates the creation of new users in Rubrik Polaris. We have also open-sourced all of the Lambda functions involved; you can find them in the Rubrik DevOps org on GitHub.

Want to learn more about the Rubrik Polaris SaaS platform? Read this blog by Rubrik Co-founder and CTO Arvind Nithrakashyap.