Rubrik Secure Vault

Ensure Cyber Recovery with Protection at the Point of Data

Secure your data against the evolving landscape of cyber threats. Harness air-gapped, immutable, access-controlled backups to recover when you need it most.

Address modern threats head on

Attackers have gone after backups in 96% of attacks. And worse, they were at least partially successful in 74% of those attempts.* Today's organizations require cyber resilience, and that starts with securing data.

Rubrik Secure Vault secures your data with zero trust principles that assume breach. It then takes your protection further by enabling cyber posture capabilities that proactively mitigate risks to your data.

*Rubrik Zero Labs: The State of Data Security - Measuring Your Data’s Risk:

https://www.rubrik.com/viewer?asset=rpt-zero-labs-4.pdf

Ensure Cyber Recovery

Secure your data from threats with air-gapped, immutable, and access-controlled backups.

Simplify Protection at Scale

Enforce data security with automated discovery and policy-driven workflows from a single platform.

Enable Cyber Resilience

Secure your data while gaining visibility into your sensitive data and user access.

Maximum Cyber Resilience. Maximum Peace of Mind.

From the data center to the cloud, rest assured your data is safe with Rubrik. Rubrik offers a $10M ransomware recovery warranty* for Enterprise Edition and Enterprise Proactive Edition customers protecting their data on premises and in Rubrik-managed cloud environments.

* Terms and conditions apply. Refer to warranty agreement for more information.

Keep data secure and available

Cybercriminals are no amateurs; they regularly target backups. 70% of organizations either do not store backups offsite or do not keep their backups immutable.*

Rubrik Secure Vault offers immutability on first backup, intelligent data locks, retention locks, access controls, an air gap, and encryption to ensure your data's integrity and availability.

*Aon 2023 Cyber Resilience Report:

https://www.aon.com/2023-cyber-resilience-report

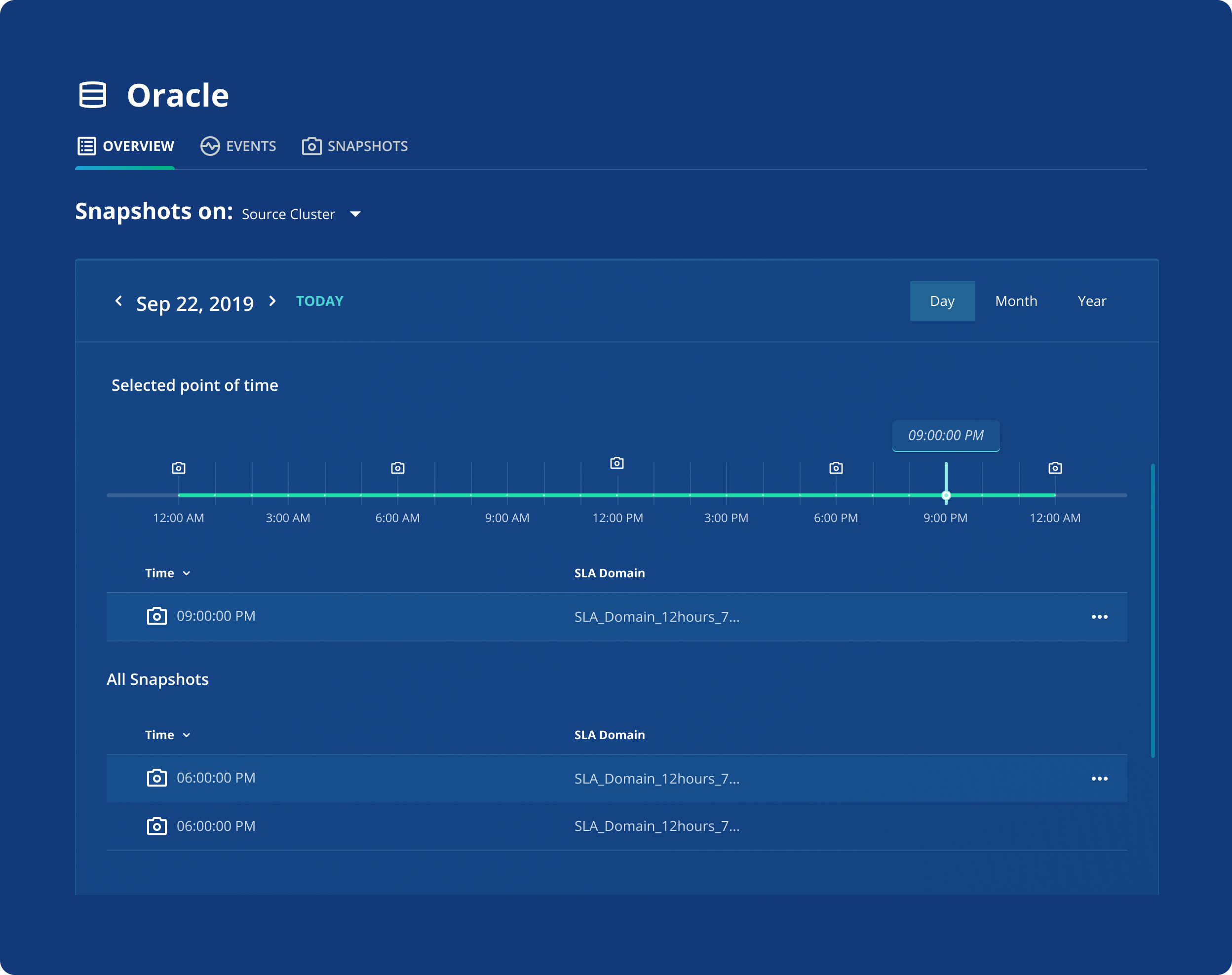

Stay protected with inherited policies

Almost 40% of organizations have not set compliance policies for their data backups.*

Rubrik Secure Vault automatically discovers and dynamically secures all of your data, following policies you define that include everything from frequency and retention to archival and replication.

*Rubrik Zero Labs: The State of Data Security - Measuring Your Data’s Risk:

https://www.rubrik.com/viewer?asset=rpt-zero-labs-4.pdf

Efficiently back up and recover

66% of IT and security leaders report that data growth is outpacing their ability to keep it secure and mitigate risk.*

Rubrik Secure Vault helps you shorten backup windows and access data in minutes by using modern techniques such as parallelization and incremental-forever backups, so you can keep up with an ever-expanding data universe.

*Rubrik Zero Labs: The State of Data Security - The Journey to Secure an Uncertain Future:

https://www.rubrik.com/company/newsroom/press-releases/23/rubrik-zero-labs-fall-2023

Enhance your clean room

No two ransomware attacks are the same, and neither are recovery options.

With Rubrik, you can recover what you need using guided workflows, and easily identify clean recovery points to restore to an isolated recovery environment (IRE), a clean room, or back to your production environment.

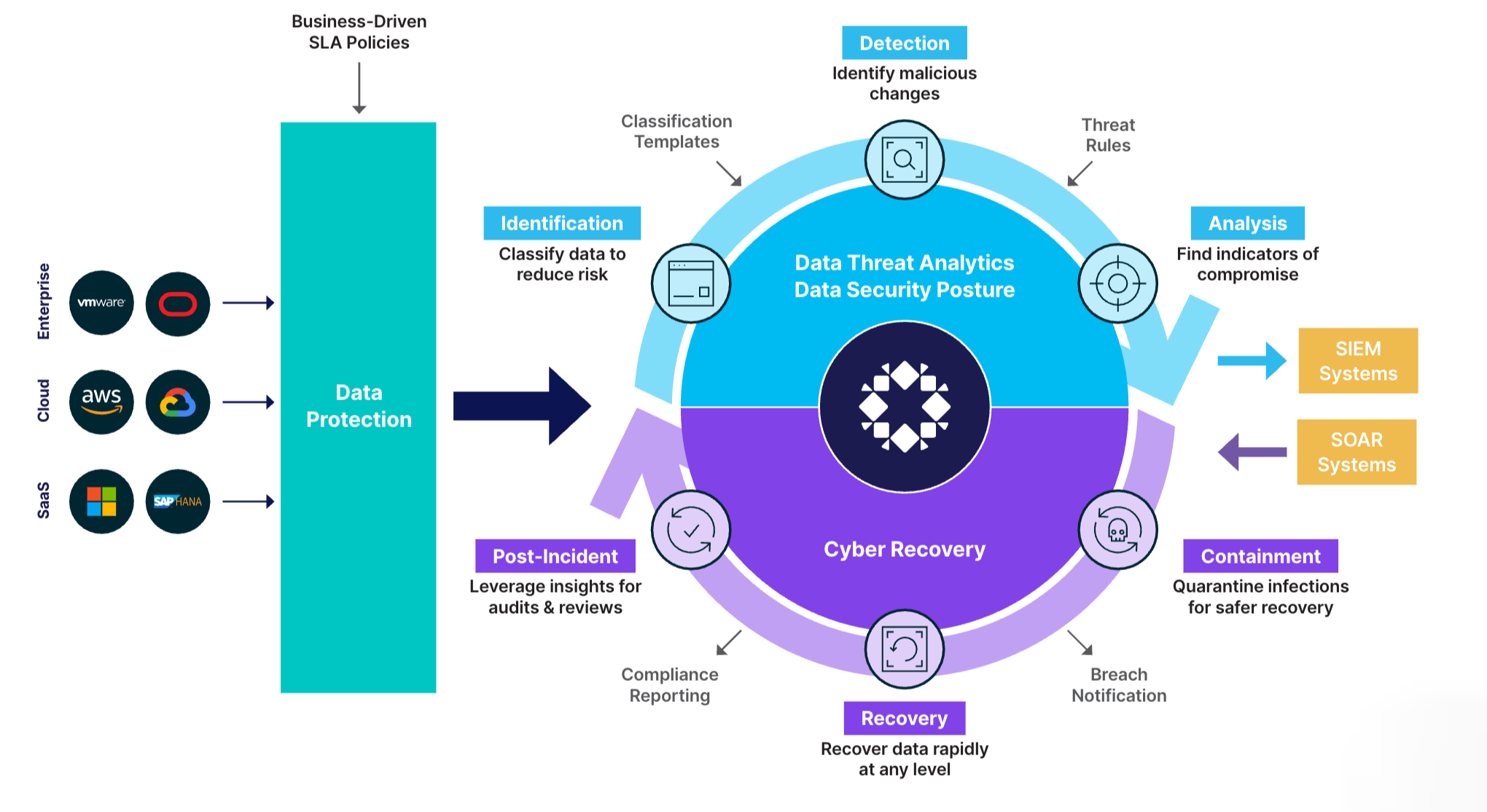

Enable cyber resilience

Cyber recovery is only part of the equation. You also need cyber posture to achieve cyber resilience.

Rubrik Secure Vault captures time-series data and metadata, enabling Rubrik Security Cloud to deliver data threat analytics and data security posture from a single platform.

The Trusted Data Security Solution for Cyber Recovery

Still relying on legacy backup systems? You're putting your data and your organization at risk. Here's why you need a data security approach instead.

How to Build Your Cyber Recovery Playbook

Get step-by-step help building your cyber recovery plan, from preparation and planning all the way through recovery and remediation.

Definitive Guide to Zero Trust Data Security

Cyber threats are growing at an alarming rate. Learn how to protect backup data and minimize the impact of ransomware attacks with Zero Trust Data Security.

Ready to get started?

Get a personalized demo of the Rubrik Zero Trust Data Security platform.